ChatGPT 5.3 Cut Its Citations by 20% and Google Rank Picks the Survivors

OpenAI shipped GPT-5.3 Instant to all ChatGPT users on March 3 and did not issue a press release about what it would do to publishers. The change was quiet: fewer outbound links per response, more internal reasoning, and a citation filter that looks suspiciously like Google's own ranking system.

The numbers arrived a few weeks later. Resoneo, a French SEO consultancy, tracked 400 daily prompts across 14 weeks using its Meteoria platform. Across 27,000 comparable responses, the average number of unique domains cited per response dropped from 19 to 15. That is roughly 20% fewer sites sharing the same answer surface. The number of unique URLs fell from 24 to 19 in the same period.
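If you want to check the "roughly 20%" figure yourself, the arithmetic is a plain relative change. A minimal sketch, where `relative_drop` is an illustrative helper name (not something from Resoneo's tooling) and the inputs are the averages quoted above:

```python
def relative_drop(before: float, after: float) -> float:
    """Return the percentage decrease from `before` to `after`."""
    return (before - after) / before * 100


# Averages per response quoted in the Resoneo figures above.
domains_drop = relative_drop(19, 15)
urls_drop = relative_drop(24, 19)

print(f"unique domains: {domains_drop:.1f}% fewer")
print(f"unique URLs:    {urls_drop:.1f}% fewer")
```

Both results land close to 21%, so "roughly 20%" rounds slightly down.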

On its own, a 20% citation drop is concerning. What makes it interesting is what happened alongside it: ChatGPT started searching harder. The model now fires 10 or more sub-queries per prompt, up from 2-3 previously. It's casting a wider net and keeping a smaller catch.

Being found is no longer the same as being cited

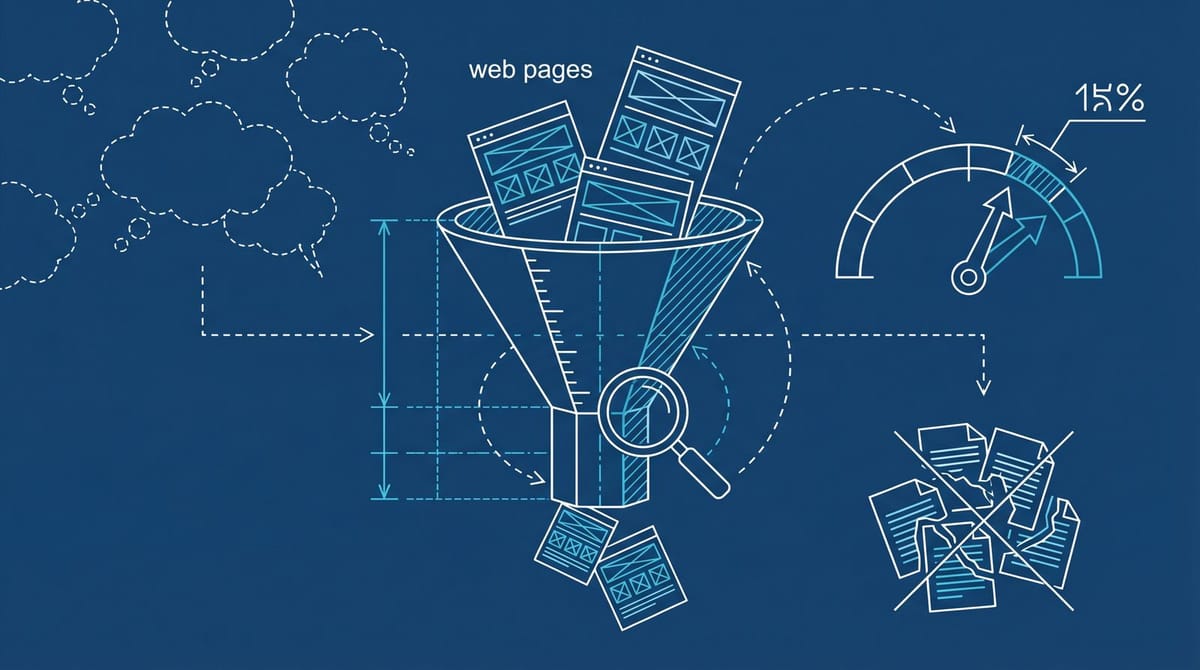

A separate study by AirOps analyzed 548,534 pages retrieved across 15,000 prompts. Only 15% of those pages appeared in the final answer. The rest were read, evaluated, and discarded during synthesis.

That 85% discard rate is the number that should change how you think about AI visibility. Getting your page into ChatGPT's retrieval set is step one. Surviving the filter is a completely different problem.

And the filter has a pattern. According to the AirOps data, 55.8% of cited pages ranked in Google's top 20 for related queries. Pages sitting at position 1 were cited 3.5 times more often than pages outside the top 20. Sites with over 32,000 referring domains were 3.5 times more likely to earn a citation than sites with fewer than 200.

If that sounds like Google's ranking system with extra steps, it sort of is. We covered this connection earlier when 87% of ChatGPT citations turned out to come from Bing-indexed pages, which overlap heavily with Google's top results. The 5.3 update seems to have tightened that relationship further.

Fan-out queries changed the game (and most SEO tools can't see them)

The fan-out behavior is the part that gets overlooked. When ChatGPT receives your prompt, it generates follow-up queries internally. In the AirOps data, 89.6% of prompts triggered two or more of these sub-searches, and collectively they generated over 43,000 queries from just 15,000 original prompts.

Then it gets odd. 32.9% of cited pages appeared only in fan-out results, not in the initial search, and 95% of those fan-out queries had zero traditional search volume. They're invisible to every keyword research tool on the market.

So your page might get cited for a query that nobody has ever typed into Google. The model is inventing search queries to verify its own reasoning, and your content either survives that process or it doesn't. There's no way to optimize for queries you can't see.

What you can do is optimize for the signals the model appears to weight heavily: topical authority, factual density, and (this is the uncomfortable part) your existing Google ranking.

The authority signal ChatGPT is checking for itself

One of the more surprising findings from the PPC Land analysis: ChatGPT 5.3 now uses the "site:" operator to look up specific brands. It runs unprompted searches for accreditation pages, awards, and aggregator profiles. The model is effectively doing its own background check on sources before deciding whether to cite them.

This changes the optimization playbook in a way that feels a little backwards. For years, the SEO community has debated whether Google uses brand signals as a ranking factor. Google says no. But ChatGPT isn't Google, and it appears to be explicitly checking whether a source is a real, established entity before putting its name in an answer.

From what I've seen across the data, the brands that show up on G2, Clutch, industry awards pages, and Wikipedia seem to have an edge in the citation filter. That's not the same as saying "go win awards to rank in ChatGPT." But it does suggest that the gap between AI visibility and brand visibility is getting smaller, not larger.

What this means if you're already running a GEO strategy

If you've been building a generative engine optimization strategy, the 5.3 update complicates things. The platforms don't agree on citation sources. Only 11% of cited domains overlap across ChatGPT, Google AI Overviews, and Perplexity. Optimizing for one platform's citation behavior might not transfer.

The 5.3 change makes ChatGPT's filter more selective, which concentrates citation value among fewer sources. Fewer domains in each response means the ones that do get cited take up a larger share of the user's attention. Good news if you're already getting cited. Rough news if you're on the bubble.

I think the practical implication is that splitting your effort across ChatGPT, Perplexity, and Google AI Overviews equally is probably the wrong move for most teams right now. If your site already ranks in Google's top 20 for your target queries, you're probably better off doubling down on topical authority and factual density for ChatGPT specifically. If you don't rank in the top 20, honestly, fixing your Google ranking will probably do more for your AI visibility than any GEO-specific tactic.

The conversion data that complicates the panic

The conversion numbers are worth mentioning even though they muddy the picture. According to data tracked through the model transition, AI traffic converts at 1.66% compared to 0.15% from traditional search. That's an 11x difference. But the total volume is still tiny for most sites, and ChatGPT referral traffic has dropped 52% since July 2025.
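To put the two rates on the same footing, it helps to ask how many traditional search visits it takes to match a given amount of AI-referred traffic. A rough sketch using only the rates quoted above; the 1,000-visit figure is a made-up input for illustration, and `expected_conversions` is a hypothetical helper name:

```python
# Conversion rates quoted above, expressed as fractions.
AI_CONVERSION_RATE = 1.66 / 100
SEARCH_CONVERSION_RATE = 0.15 / 100


def expected_conversions(visits: int, rate: float) -> float:
    """Expected number of conversions for a given visit count and rate."""
    return visits * rate


ratio = AI_CONVERSION_RATE / SEARCH_CONVERSION_RATE

# Hypothetical example: 1,000 AI-referred visits in a month.
ai_visits = 1_000
ai_conversions = expected_conversions(ai_visits, AI_CONVERSION_RATE)

# Search visits needed to produce the same number of conversions.
equivalent_search_visits = ai_conversions / SEARCH_CONVERSION_RATE

print(f"ratio: {ratio:.1f}x")
print(f"{ai_visits} AI visits -> {ai_conversions:.1f} conversions")
print(f"equivalent search visits: {equivalent_search_visits:,.0f}")
```

Swap in your own visit counts to see whether AI referrals are a rounding error or a meaningful share of conversions for your site.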

So you're fighting for a smaller share of a shrinking click pool, but the clicks that do come through are worth dramatically more. For most teams, the right response probably isn't "pour budget into AI visibility tools." It's more like: make sure your Google fundamentals are solid, build content that can survive the citation filter, and figure out whether AI-referred traffic is actually showing up in your analytics at all. Most GA4 setups don't attribute ChatGPT traffic correctly, which means you might be doing something right and have no way to tell.

The 20-minute audit that tells you where you stand

Open Google Search Console. Pull your top 50 pages by impressions over the last 90 days. Cross-reference with your analytics to see which of those pages are getting any ChatGPT referral traffic (check under Acquisition, then Traffic acquisition, and filter for chatgpt.com or similar referrers).
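If you export both lists, the cross-reference can be scripted. This is a minimal sketch, not a GA4 or Search Console integration: it assumes you already have your top pages as a list of paths and your referral data as (landing page, referrer) pairs. The host list is an assumption too: `chatgpt.com` comes from the step above, `chat.openai.com` is the older ChatGPT domain, and you should extend it with whatever referrers show up in your own reports.

```python
from urllib.parse import urlparse

# Assumed referrer hosts; extend with what you see in your own analytics.
AI_REFERRER_HOSTS = {"chatgpt.com", "chat.openai.com"}


def is_ai_referrer(referrer: str) -> bool:
    """True if the referrer's host is, or is a subdomain of, a known AI host."""
    # urlparse only fills in the hostname when a scheme or '//' is present.
    parsed = urlparse(referrer if "://" in referrer else "//" + referrer)
    host = (parsed.hostname or "").lower()
    return any(host == h or host.endswith("." + h) for h in AI_REFERRER_HOSTS)


def pages_with_ai_referrals(top_pages, referral_rows):
    """Return the top pages that received at least one AI referral."""
    ai_pages = {page for page, referrer in referral_rows if is_ai_referrer(referrer)}
    return [page for page in top_pages if page in ai_pages]


# Hypothetical sample data standing in for your exports.
top_pages = ["/pricing", "/guide", "/blog/post"]
referral_rows = [
    ("/guide", "https://chatgpt.com/"),
    ("/pricing", "https://www.google.com/"),
    ("/blog/post", "chatgpt.com"),
]

print(pages_with_ai_referrals(top_pages, referral_rows))
```

Pages in your top 50 that never appear in the output are the ones to look at in the next step.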

If your top Google pages aren't showing up as ChatGPT referral sources, the more likely culprit is content format, not ranking. ChatGPT appears to reward pages that state facts clearly, cite their own sources, and don't bury the answer under 800 words of preamble. Check your highest-ranking pages and ask whether the actual answer appears in the first 200 words.
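The first-200-words check is easy to approximate in code. A crude sketch, assuming you have the page's body text as a plain string and can name a short phrase that counts as "the answer"; `answer_in_opening` is an illustrative name, and a simple substring match is a stand-in for real judgment about whether the page answers the query:

```python
def answer_in_opening(text: str, answer_phrase: str, word_limit: int = 200) -> bool:
    """Check whether `answer_phrase` occurs within the first `word_limit` words."""
    # Split on whitespace and rejoin so line breaks and double spaces don't matter.
    opening = " ".join(text.split()[:word_limit]).lower()
    phrase = " ".join(answer_phrase.split()).lower()
    return phrase in opening


# Hypothetical pages: one leads with the answer, one buries it.
direct_page = "Citations fell by about 20%. " + "More detail follows. " * 100
buried_page = "Some background first. " * 100 + "Citations fell by about 20%."

print(answer_in_opening(direct_page, "fell by about 20%"))
print(answer_in_opening(buried_page, "fell by about 20%"))
```

It won't tell you whether the answer is any good, only whether it shows up early.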

If none of your pages are getting ChatGPT referrals at all, check your robots.txt for OAI-SearchBot blocks first. Then check whether your content is in Bing's index, because that's still the primary retrieval pipeline.
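The robots.txt check can be done with Python's standard library. A minimal sketch using `urllib.robotparser`; the sample robots.txt and the example.com URL are made up, and the parser's matching is Python's own interpretation of the rules, which may not match how OpenAI's crawler reads the same file in every edge case:

```python
from urllib.robotparser import RobotFileParser


def is_bot_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if `user_agent` may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt that blocks OAI-SearchBot but allows everyone else.
sample_robots = """\
User-agent: OAI-SearchBot
Disallow: /

User-agent: *
Allow: /
"""

page = "https://example.com/guide"
print(is_bot_allowed(sample_robots, "OAI-SearchBot", page))
print(is_bot_allowed(sample_robots, "Googlebot", page))
```

Paste in the contents of your own robots.txt and test a few of your top pages. If the first call comes back False for pages you want cited, that block is the first thing to fix.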

The sites that treat AI visibility as a completely separate discipline from SEO are probably going to spend a lot of time and money rediscovering that the fundamentals haven't actually changed. The gatekeeper is new. The gates look familiar.