Gmail's AI Inbox Made 'In the Inbox' the Wrong Deliverability Win

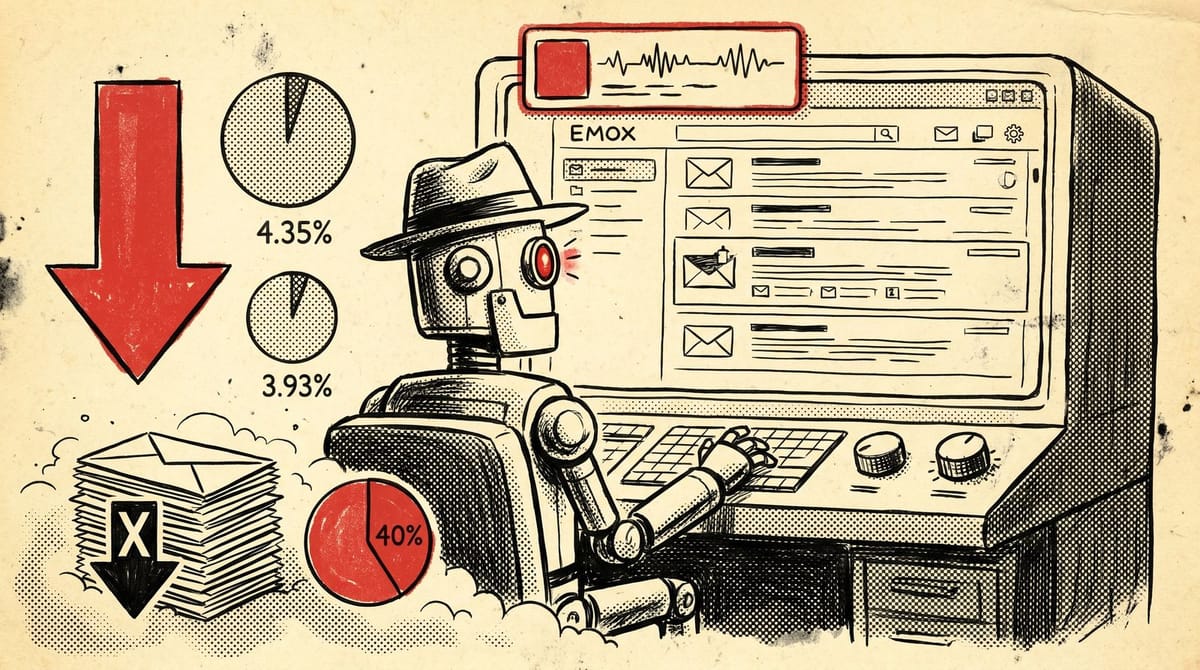

Gmail launched AI Inbox to trusted testers in January 2026, and the broader AI summary layer rolled out across roughly a quarter of the world's email accounts in the months that followed. Folderly's analysis of billions of messages shows click-through rates falling from 4.35% to 3.93% while open rates jumped to 45.6%, a climb it attributes to Gemini opening messages to write the summary. The deliverability win is no longer "in the inbox"; it is "survives the AI summary."

Two metrics that just stopped meaning what they used to

Open rate at 45.6% sounds like a lift. It is not. Gemini opens the message itself to generate the summary, which fires the tracking pixel without a human ever seeing the body. Folderly attributes the climb to AI auto-opens, and the underlying dataset is large enough (Omeda's billions-of-emails benchmark) that the inflation is not a niche artifact. If your reporting still ties incentive comp to open rate on a Gmail-heavy list, you are paying out on bot reads.

Click-through rate is the more interesting number. The drop from 4.35% to 3.93% is roughly a 10% relative decline, which is not catastrophic, but the loss is not coming from worse subject lines or list rot. It is coming from a layer in front of the message that decides what the human sees. Folderly puts the share of emails reaching the inbox in a deprioritized state at up to 40%. They technically deliver. They just never surface at the top of the priority view.
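The relative-decline figure is plain arithmetic, sketched here in Python so the same check can be run on your own before-and-after numbers. The function name is illustrative; the two rates are the Folderly figures quoted above.

```python
def relative_decline(before: float, after: float) -> float:
    """Fractional drop from `before` to `after` (0.10 means a 10% drop)."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


# CTR figures quoted above, in percent.
ctr_before, ctr_after = 4.35, 3.93
drop = relative_decline(ctr_before, ctr_after)

print(f"Absolute drop: {ctr_before - ctr_after:.2f} points")  # 0.42 points
print(f"Relative drop: {drop:.1%}")                           # 9.7%
```

The absolute change is under half a percentage point, which is why the relative figure is the more useful one to track.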

I think most senders will keep optimizing for the inbox-placement number for at least another two quarters. From what I have seen, the teams pivoting first are the ones running engagement-led lists where reply rate matters more than open rate, because reply is the one signal AI auto-opens cannot fake.
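For teams that want to try reporting this way, here is a minimal sketch of a per-send report that leads with reply and click rates and keeps open rate only as a flagged reference value. The field names, the choice of delivered messages as the denominator, and the sample counts are all assumptions for illustration, not any ESP's schema or real data.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class SendStats:
    """Counts for one send. Field names are hypothetical."""
    delivered: int
    opens: int      # may include machine-triggered pixel loads
    clicks: int
    replies: int


def rate(numerator: int, delivered: int) -> float:
    """Share of delivered messages; 0.0 when nothing was delivered."""
    return numerator / delivered if delivered else 0.0


def engagement_report(stats: SendStats) -> Dict[str, float]:
    """Lead with replies and clicks; keep opens only as a flagged reference."""
    return {
        "reply_rate": rate(stats.replies, stats.delivered),
        "click_rate": rate(stats.clicks, stats.delivered),
        "open_rate_unreliable": rate(stats.opens, stats.delivered),
    }


# Made-up counts for illustration only.
report = engagement_report(
    SendStats(delivered=10_000, opens=4_560, clicks=393, replies=120)
)
for name, value in report.items():
    print(f"{name}: {value:.2%}")
```

Naming the open-rate field as unreliable is a deliberate design choice: it keeps the number available for trend lines while discouraging anyone from tying targets to it.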

What Gemini actually pulls into the summary

The MarTech write-up of the launch quotes Manu Cinca of Stacked Marketer: "your email will be one of many that Gemini pulls into a summary, so it's about convincing Gemini to show your email." That is not a flowery framing. Gemini is doing extractive summarization. It biases toward concrete sentences with named entities, dollar amounts, dates, and explicit asks. It penalizes copy that buries the action three paragraphs in or hides the value behind a story.

Practically, this means the first 100 to 200 characters of the body are now load-bearing for AI inclusion, not just for human scannability. Two patterns are pulling weight in the early data:

- A one-line TL;DR at the top, written like a pull quote. Fluently's deliverability guide reports a 2.6x reply-rate lift on a newsletter that added a single-line TL;DR ("Summary: 3 quick ideas to grow your podcast audience in 10 minutes."). It is anecdotal, not a controlled study, and I would treat 2.6x as the upper bound of the believable range. The directional point is solid: explicit summaries outperform letting Gemini guess the gist.

- Front-loaded value propositions. Folderly's Q4 2025 client data shows emails with the value line in the first 100 to 200 characters held a 23% higher CTR than buried-CTA equivalents. Same vendor, same caveats, but the gap is wide enough to justify a test on your own list this week.
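Both patterns can be spot-checked mechanically before a send. The sketch below pulls the first 200 characters of a plain-text body and reports a few crude signals. The regular expressions are my own heuristics, not Gemini's logic, and the function names are hypothetical.

```python
import re
from typing import Dict

WINDOW = 200  # upper end of the 100 to 200 character range discussed above


def opening_window(body: str, window: int = WINDOW) -> str:
    """Collapse whitespace and return the first `window` characters."""
    return " ".join(body.split())[:window]


def opening_signals(body: str, window: int = WINDOW) -> Dict[str, bool]:
    """Heuristic flags for what the opening of the body contains."""
    opening = opening_window(body, window)
    return {
        # Opening starts with an explicit "Summary:" or "TL;DR:" label.
        "has_summary_label": bool(
            re.match(r"(summary|tl;dr)\s*:", opening, re.IGNORECASE)
        ),
        # Any digit at all (counts, dates, durations).
        "has_number": bool(re.search(r"\d", opening)),
        # A currency amount or a percentage.
        "has_money_or_percent": bool(re.search(r"[$€£]\s?\d|\d\s?%", opening)),
    }


example = (
    "Summary: 3 quick ideas to grow your podcast audience in 10 minutes.\n\n"
    "Hi friends, here is what we learned this month."
)
print(opening_signals(example))
```

A send whose opening trips none of these flags is not necessarily bad, but it is a reasonable candidate for a rewrite test.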

Deliverability "shades" in Postmaster Tools

Dave Schools, Singulate's CEO, told MarTech that "deliverability will have shades. It will no longer be pass/fail." On paper that sounds like marketing-speak. In Postmaster Tools it shows up as a sender reputation that hits "high" and still loses share of priority view, because Gemini has separately decided your message is non-actionable. That is a different signal from spam-folder placement, and it is not reported back to senders anywhere a normal Gmail Postmaster account can see.

The closest parallel is the AI Overview problem in search. Google returns the right URL, the AI summary lifts the answer, and the click never happens. Email is now running a similar loop: the message arrives, the summary captures the gist, and the open or click is suppressed at the surface. We covered the measurement gap on the search side earlier this week (the referrer-strip problem in GA4), and the email pattern is going to need its own version of that fix.

The AI Ultra tier is the canary, not the ceiling

AI Inbox sits inside Google's AI Ultra tier, the most expensive Workspace AI add-on Google sells. That has led some senders to dismiss it as a power-user feature with no near-term reach. The mistake there is treating tier penetration as the relevant variable. The summarization layer is rolling out across general Gmail accounts separately, with Google framing AI Inbox as the next step rather than the first one. The official announcement said as much, telling users that the broader rollout follows trusted-tester access "in the coming months."

The AI Ultra tier sets the spec. The free tier is going to inherit a watered-down version of the same prioritization logic, and senders who optimize only for the current Gmail surface are optimizing for an interface on track to be the minority case.

What this changes for sender reputation

Marc Thomas of Positive Human told MarTech that Gmail's AI "will benefit good email marketing, and will continue to punish bad email marketing." That sounds reassuring. The catch is that "good" is being redefined by a model whose ranking weights are not published. Sender reputation in the old Postmaster sense (authentication pass rate, spam complaint rate, list hygiene) is still the floor. The new ceiling is whether the AI thinks your message is worth surfacing, and that judgement is influenced by the message body, not just the sender domain.

Gabby Kustner at Customer.io put it differently in the same MarTech piece, saying senders "will have to carefully frame our language" so the AI understands priority levels. That is the part most senders are not staffing for yet. Copywriters who used to optimize for human scannability are now also writing for an extractive summarizer that cares about specificity. It is a small shift in craft and a large shift in QA: every send needs a check that the first 200 characters survive a "summarize this email in one sentence" test before it ships.

The audit to run before your next send

Pick three sends that went out in the last 30 days and rate each on five things:

- Does the first sentence of the body work as a standalone summary? Read sentence one out loud. If it does not contain a number, a name, or a clear ask, rewrite it.

- Is the explicit value claim inside the first 200 characters of the body, ahead of the brand throat-clearing? Cut anything before it.

- Does the subject line match the body's actual ask, or is it pretending to be more urgent than the content delivers? Misaligned urgency is now a downgrade signal, not just a CTR risk.

- Is there a specific reply-asking line, even on a broadcast send? Reply rate is the metric that survives the bot-open inflation.

- Are alt-text strings on images written like sentences, not "image1.png"? Gemini reads alt text as part of the content signal.
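The alt-text item is the easiest of the five to automate. A minimal sketch using Python's standard `html.parser`: it collects the `alt` value of every `<img>` tag and flags the ones that are missing, empty, or look like a file name. The "looks like a file name" rule is an assumption of mine about what counts as weak alt text.

```python
import re
from html.parser import HTMLParser
from typing import List, Optional

# Assumption: a single token ending in an image extension is a file name.
FILENAME_LIKE = re.compile(r"^\S+\.(png|jpe?g|gif|webp|svg)$", re.IGNORECASE)


class AltCollector(HTMLParser):
    """Collect the alt attribute (None if absent) of every <img> tag."""

    def __init__(self) -> None:
        super().__init__()
        self.alts: List[Optional[str]] = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            self.alts.append(dict(attrs).get("alt"))


def weak_alts(html: str) -> List[Optional[str]]:
    """Return alt values that are missing, blank, or file-name-like."""
    collector = AltCollector()
    collector.feed(html)
    collector.close()
    return [
        alt for alt in collector.alts
        if alt is None or not alt.strip() or FILENAME_LIKE.match(alt.strip())
    ]


sample = (
    '<p><img src="a.png" alt="image1.png">'
    '<img src="b.png" alt="Chart of click-through rate over six months">'
    '<img src="c.png"></p>'
)
print(weak_alts(sample))  # ['image1.png', None]
```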

If all three sends fail two or more of the five checks, you have a structural problem, not a creative one.
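The scoring rule is simple enough to keep in a spreadsheet, but here is a small sketch for anyone who prefers a script. The check labels are hypothetical shorthand for the five questions above, and the ratings are made-up values a human reviewer would fill in.

```python
from typing import Dict, List

# Hypothetical short labels for the five audit questions.
CHECKS = (
    "standalone_first_sentence",
    "value_in_first_200_chars",
    "subject_matches_ask",
    "reply_ask_present",
    "descriptive_alt_text",
)
FAIL_THRESHOLD = 2  # "two or more" failed checks per send


def failed_checks(ratings: Dict[str, bool]) -> List[str]:
    """Names of the checks a send did not pass (missing ratings count as fails)."""
    return [name for name in CHECKS if not ratings.get(name, False)]


def is_structural(sends: List[Dict[str, bool]]) -> bool:
    """True when every audited send fails FAIL_THRESHOLD or more checks."""
    return bool(sends) and all(
        len(failed_checks(ratings)) >= FAIL_THRESHOLD for ratings in sends
    )


# Made-up ratings for three sends.
audit = [
    dict.fromkeys(CHECKS, True) | {"standalone_first_sentence": False,
                                   "value_in_first_200_chars": False},
    dict.fromkeys(CHECKS, True) | {"reply_ask_present": False,
                                   "descriptive_alt_text": False},
    dict.fromkeys(CHECKS, True) | {"subject_matches_ask": False,
                                   "reply_ask_present": False,
                                   "descriptive_alt_text": False},
]

for number, ratings in enumerate(audit, start=1):
    print(f"Send {number} failed: {failed_checks(ratings)}")
print("Structural problem:", is_structural(audit))  # True
```

The dictionary merge operator (`|`) needs Python 3.9 or later.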

I am skeptical any of this is the final word. The summarization layer will get better, the TL;DR tactic will get gamed, and Gemini's ranking signals will keep moving. The honest read is that the senders treating this as a measurement problem first and a creative problem second will probably outlast the ones still chasing inbox placement as the win.

Notice Me Senpai Editorial