Yahoo Got Cited 30,000 Times by AI While Blocking Google's Crawler

Yahoo's robots.txt explicitly disallows Google-Extended, the user-agent token Google created so publishers could opt out of Gemini training without losing search visibility. By the standard logic of every "block AI bots" guide written in the last two years, that should keep Yahoo content out of AI answers. A new BuzzStream analysis of 4 million AI citations across 3,600 prompts found Yahoo appeared in roughly 30,000 of them. CNBC, which blocks ChatGPT-User, GPTBot, and OAI-SearchBot all at once, showed up 1,298 times. The robots.txt approach didn't reduce citations. It mostly gave the people running it the feeling that something was being done.

The numbers most GEO consultants are about to wish they hadn't seen

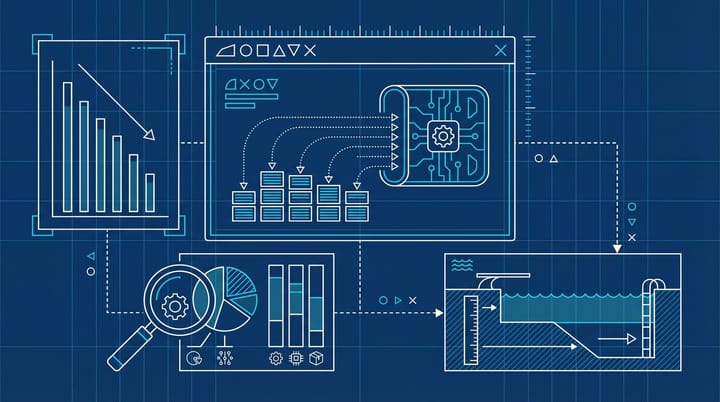

The BuzzStream study, written by Vince Nero and pulling from Citation Labs' XOFU tracking tool, looked at the top 50 news sites blocking each major AI crawler across ten industries. It then checked how often those "blocked" sites still appeared in answers from ChatGPT, Gemini, AI Overviews, and Google AI Mode. The results are uncomfortable for anyone who spent the last 18 months building robots.txt strategies for clients.

- 88.2% of sites blocking GPTBot still got cited

- 92.3% of sites blocking Google-Extended still got cited

- 70.6% of sites blocking ChatGPT-User still got cited

- 82.4% of sites blocking OAI-SearchBot still got cited

Look at it from the other direction and it gets worse. Roughly 95% of ChatGPT citations came from sites that were actively blocking the training crawler. Almost 70% came from sites blocking the live retrieval bot. The crawlers being blocked are not, in any meaningful sense, the ones doing the citing.

The mechanism nobody really wants to spell out

There is a polite explanation for this and an honest one. The polite one is that AI systems have multiple paths to a webpage: search index data, third-party content licensing deals, social media scrapers, and snapshots taken before the block went up are all in play. The honest one is that "blocking" via robots.txt is a request, not an enforcement action, and the major model providers have strong commercial reasons to interpret those requests narrowly. Cloudflare shipped an enforcement tool called Robotcop in late 2024 precisely because it did not believe the polite interpretation was holding up either. The whole point of Robotcop was to convert robots.txt rules into actual WAF blocks at the network edge, because the standards-based approach was being treated as advisory.
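
To make the "request, not enforcement" point concrete, here is a minimal sketch using Python's standard-library `urllib.robotparser`. The robots.txt content is illustrative and is not Yahoo's or CNBC's actual file. `would_request` is a hypothetical client function. The sketch shows that the robots.txt check happens inside the client, so the client alone decides whether the check runs at all.

```python
from urllib.robotparser import RobotFileParser

# Illustrative robots.txt, loosely modeled on the block rules discussed above.
ROBOTS_TXT = """\
User-agent: Google-Extended
Disallow: /

User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text without touching the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def would_request(parser: RobotFileParser, user_agent: str, url: str,
                  honors_robots: bool = True) -> bool:
    """Hypothetical client: returns True if it would go ahead and fetch the URL."""
    if not honors_robots:
        # Nothing in the protocol prevents this branch. The server never
        # sees the check, only the request that follows it.
        return True
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    rp = build_parser(ROBOTS_TXT)
    url = "https://www.example.com/news/story"
    print(would_request(rp, "GPTBot", url))                       # False
    print(would_request(rp, "GPTBot", url, honors_robots=False))  # True
    print(would_request(rp, "Googlebot", url))                    # True
```

The last line also shows why Google-Extended and Googlebot are separate questions. The file disallows the Google-Extended token, but Googlebot still falls through to the permissive `*` group.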

What this means in practice: if you publish on a domain with any kind of public reach, AI systems are seeing your content one way or another. The robots.txt file is closer to a no-trespassing sign at the edge of a property someone has already photographed by drone.

The part where the strategy actively loses you traffic

The traffic data makes it worse. A separate study by Hangcheng Zhao at Rutgers Business School and Ron Berman at Wharton, covering 30 major newspaper publishers from October 2022 through June 2025, found that publishers who blocked AI crawlers in their robots.txt experienced a 23.1% decline in total monthly visits and a 13.9% decline in human-only browsing in the months that followed, according to coverage in PPC Land. Similarweb traffic data and Comscore's panel both pointed in the same direction.

Read the two studies side by side and you get a picture nobody wanted: blocking AI crawlers does not meaningfully reduce citations, and it does correlate with material declines in human traffic. The strategy may actually have a negative expected value for most large publishers. Smaller sites may face different tradeoffs, since both studies drew on large publishers, so neither finding transfers to them automatically.

What the robots.txt true believers got wrong

Most of the GEO playbooks written in 2024 and 2025 treated crawler control as the lever you pull to manage AI visibility. Block training, allow retrieval. Block everything. Allow everything. Variations on the theme. The implicit assumption was always that there was a cleanish relationship between crawler permissions and what AI systems actually do with your content.

That assumption seems to have been wrong. From what I have seen reading these studies, the relationship is closer to "we will cite you when we want to and the file you wrote is largely advisory." That is not what Google and OpenAI explicitly say in their documentation, but it is what the citation data describes. We covered something adjacent to this last month in our piece on the 11% citation overlap between AI platforms, which already suggested that the mental model of "one GEO strategy fits every model" was a stretch. The robots.txt finding makes it worse: not only is the strategy not portable across platforms, the most common version of it does not even work on the platform it was designed for.

If I had to pick the single most painful number in the BuzzStream data, it would be the 92.3% for Google-Extended. That token was the one opt-out designed to cost publishers nothing in search. Publishers took the deal in good faith. The study suggests the deal did not actually change very much.

The audit worth running this week

Here is what I would actually do, in the order I would do it. None of this requires hiring anyone.

First, pull your robots.txt and list every AI user agent you currently block. If you cannot remember why each one is in there, that is the first problem. Most teams added these rules in a panic in 2023 and have not revisited them since, which means the ruleset reflects 2023's understanding of how AI crawling worked, not 2026's.
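
Here is a minimal sketch of that first step, again using `urllib.robotparser`. It assumes you already have the file's text. For a live site, `RobotFileParser.set_url(...)` followed by `read()` fetches the file instead of `parse()`. The agent list holds only the four user agents the BuzzStream study covered. The probe paths are placeholders. Swap in your own sections, because a rule can block `/news/` without blocking the homepage.

```python
from urllib.robotparser import RobotFileParser

# The four user agents covered in the BuzzStream study. Not an exhaustive list.
AI_AGENTS = ["GPTBot", "ChatGPT-User", "OAI-SearchBot", "Google-Extended"]


def blocked_paths(robots_txt: str, agents: list[str],
                  probe_paths: list[str]) -> dict[str, list[str]]:
    """Map each user agent to the probe paths robots.txt disallows for it."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        agent: [path for path in probe_paths if not parser.can_fetch(agent, path)]
        for agent in agents
    }


if __name__ == "__main__":
    # Made-up sample file: GPTBot blocked everywhere, ChatGPT-User only on /news/.
    sample = """\
User-agent: GPTBot
Disallow: /

User-agent: ChatGPT-User
Disallow: /news/

User-agent: *
Allow: /
"""
    report = blocked_paths(sample, AI_AGENTS, ["/", "/news/story"])
    for agent, paths in report.items():
        print(f"{agent:16} blocked on: {paths or 'nothing'}")
```

An agent that comes back with an empty list is effectively unblocked, whatever the team remembers putting in the file. Treat mixed Allow/Disallow groups with some caution. Python's parser applies rules in file order, while RFC 9309 uses longest match, so a given crawler may read the same file differently.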

Second, run a few of your high-intent commercial queries through ChatGPT, Gemini, AI Overviews, and Perplexity. Note whether your domain shows up. If it does despite your blocks, you already have your answer for that query.
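
If you log those checks by hand, a small helper keeps the bookkeeping honest. This is a hypothetical sketch. It assumes you have copied each platform's cited URLs into a dict. A URL counts as a citation only when its host is your domain or one of its subdomains, so `notexample.com` does not count for `example.com`.

```python
from urllib.parse import urlsplit


def cites_domain(url: str, domain: str) -> bool:
    """True if the URL's host is `domain` or a subdomain of it."""
    host = (urlsplit(url).hostname or "").rstrip(".")  # hostname is lowercased
    domain = domain.lower().rstrip(".")
    return host == domain or host.endswith("." + domain)


def platforms_citing(domain: str, citations: dict[str, list[str]]) -> list[str]:
    """Return the platforms whose cited URLs include the domain, sorted by name."""
    return sorted(
        platform for platform, urls in citations.items()
        if any(cites_domain(u, domain) for u in urls)
    )


if __name__ == "__main__":
    # Hypothetical results for one query, collected manually.
    results = {
        "ChatGPT": ["https://www.example.com/guide", "https://other.org/post"],
        "Gemini": ["https://notexample.com/guide"],
        "AI Overviews": ["https://example.com/pricing"],
        "Perplexity": [],
    }
    print(platforms_citing("example.com", results))  # ['AI Overviews', 'ChatGPT']
```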

Third, look at your AI referral traffic from the last 90 days in GA4. Filter source/medium for chatgpt.com, gemini.google.com, perplexity.ai, and copilot.microsoft.com. If you are getting any of it, you are being cited. If your robots.txt is set up to "not be in AI", you are quietly contradicting yourself in production, and probably losing some human traffic on top.
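
Once the report is exported, the GA4 step can be scripted. Here is a minimal sketch. It assumes a CSV export with a `source_medium` column in GA4's `source / medium` form and an integer `sessions` column. Those column names are placeholders, so rename them to match your export. The sample rows are made up. The function sums sessions for the four referrers named above.

```python
import csv
import io

# Referrer hostnames named in the audit step above.
AI_SOURCES = ("chatgpt.com", "gemini.google.com", "perplexity.ai",
              "copilot.microsoft.com")


def ai_referral_sessions(csv_text: str, source_col: str = "source_medium",
                         sessions_col: str = "sessions") -> dict[str, int]:
    """Sum sessions per AI referrer from a 'source / medium' style export."""
    totals = {source: 0 for source in AI_SOURCES}
    for row in csv.DictReader(io.StringIO(csv_text)):
        # "chatgpt.com / referral" -> "chatgpt.com"
        source = row[source_col].split("/", 1)[0].strip().lower()
        if source in totals:
            totals[source] += int(row[sessions_col])
    return totals


if __name__ == "__main__":
    export = """source_medium,sessions
chatgpt.com / referral,120
google / organic,5000
perplexity.ai / referral,14
chatgpt.com / referral,3
"""
    totals = ai_referral_sessions(export)
    print(totals)
    print("Being cited:", any(totals.values()))
```

A non-zero total for any referrer is the contradiction described above, visible in your own analytics.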

Fourth, decide what you actually want. Not what felt safe in a meeting in 2023. The two coherent strategies right now are: be maximally citable (allow crawlers, structure content for retrieval, accept the visibility tradeoff) or be invisible (block at the WAF level via something like Cloudflare's Robotcop or your own firewall, accept that you may be giving up human traffic too). The middle ground, where your robots.txt blocks a list of bots someone on your team read about on LinkedIn in 2023, is the one position the data says does not work. The benchmark for the audit: after the rewrite, your robots.txt should match what a reasonable person looking at your AI referral traffic and your business goals would expect to see. Most files do not.
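
The "be invisible" option differs from robots.txt because the server refuses the request instead of asking the client to stay away. Here is a minimal stand-in using a plain Python WSGI middleware. It is not how Robotcop or any commercial WAF is implemented. The token list is an assumption drawn from the agents named in this piece. Google-Extended is deliberately absent. Google documents it as a robots.txt control token with no separate user agent string, so there is nothing to match on at the edge.

```python
# Tokens matched case-insensitively against the User-Agent header.
# Assumption: these three agents identify themselves honestly.
BLOCKED_AGENT_TOKENS = ("gptbot", "chatgpt-user", "oai-searchbot")


def block_ai_agents(app, blocked=BLOCKED_AGENT_TOKENS):
    """Wrap a WSGI app so requests from listed agents get a 403."""
    def middleware(environ, start_response):
        user_agent = environ.get("HTTP_USER_AGENT", "").lower()
        if any(token in user_agent for token in blocked):
            # Refuse outright: the client never reaches the wrapped app.
            start_response("403 Forbidden", [("Content-Type", "text/plain")])
            return [b"Forbidden\n"]
        return app(environ, start_response)
    return middleware


def hello_app(environ, start_response):
    """Trivial app standing in for your site."""
    start_response("200 OK", [("Content-Type", "text/plain")])
    return [b"hello\n"]


application = block_ai_agents(hello_app)
```

To try it locally, `wsgiref.simple_server.make_server("", 8000, application).serve_forever()` serves the wrapped app. Clients self-report the User-Agent header, so a client that lies about it walks straight through. Treat header matching as the floor, not the whole defense.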

The part I am still uncertain about

I am not fully convinced the citation rates would hold for sites smaller than the BuzzStream sample. The study looked at the top 50 sites in each block-rule cohort, which skews heavily toward big news publishers. Smaller domains may genuinely be kept out by robots.txt because they have less third-party reach, less syndication, and give an AI provider less reason to read the file narrowly. From what I have seen, results at smaller scale can vary enough that I would not bet the strategy on the news publisher numbers alone. Run your own audit before you change anything.

But the direction is clear enough that anyone still selling "we will write your robots.txt to keep you out of AI" should probably stop. The product does not do what the brochure says it does. PPC Land's writeup of the BuzzStream data is the cleanest summary if you need to send something around internally before next week's standup.

The crawlers were never the lock. Most of us have been guarding the wrong door.

Notice Me Senpai Editorial