The 11% Citation Overlap That Breaks Every Platform-Agnostic GEO Strategy

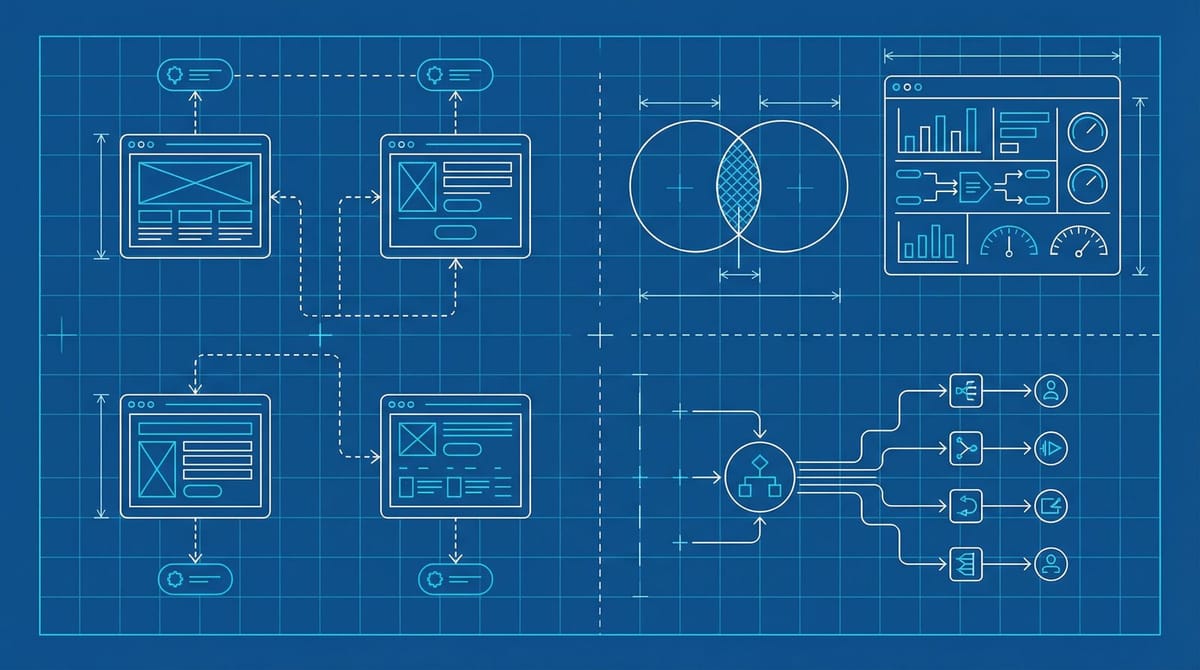

The GEO advice flooding LinkedIn right now has a structural problem. It assumes AI search is one system. ChatGPT, Perplexity, Claude, Gemini. All generating answers. All citing sources. The pitch from every consultancy is roughly the same: optimize your content for AI search and you'll show up everywhere.

Benchmark data from Yext's analysis of 17.2 million AI citations tells a different story. Only 11% of cited domains appear across multiple AI platforms. That's not a rounding error. That's a system design difference that most GEO playbooks are pretending doesn't exist.

Four Retrieval Systems Wearing the Same Jacket

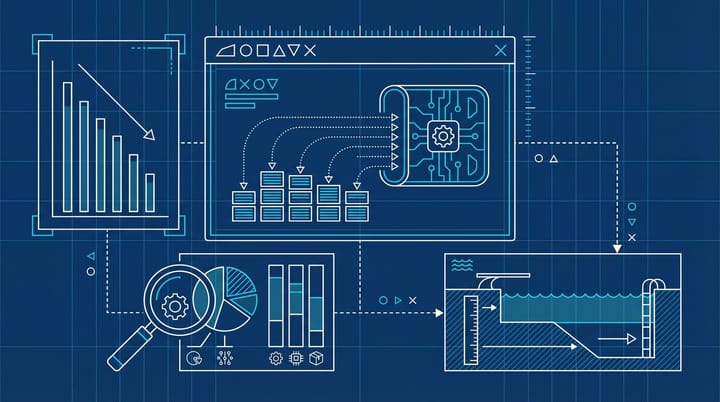

Each AI search platform runs its own retrieval system. And those systems produce very different citation behavior. According to a cross-platform comparison from WhiteHat SEO, Perplexity pulls 21.87 citations per response from a real-time web index. ChatGPT averages 7.92, with 79% of responses drawing from training data rather than live search. Claude averages 5.67 citations and leans heavily toward primary and user-generated sources. Google AI Mode averages 8.34 but pulls from a fundamentally different authority graph than even Google AI Overviews (despite being, you know, the same company).

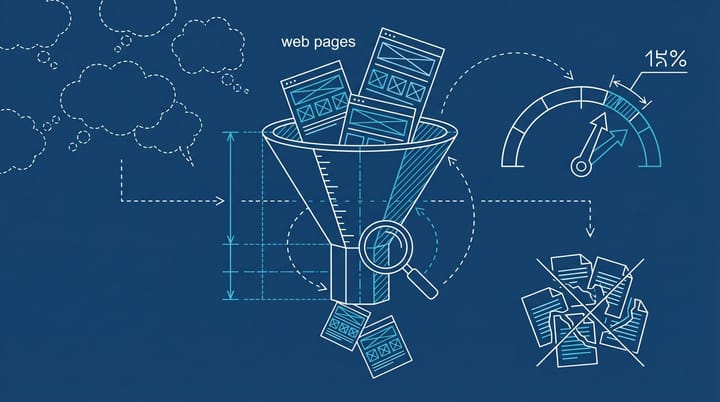

The practical result: a page that Perplexity loves and cites constantly might not register with ChatGPT at all. ConvertMate's study of 12,500 queries across 8,000 domains found that 83% of AI Overview citations come from pages outside the traditional organic top 10. And 28.3% of ChatGPT's most-cited pages don't rank on Google at all. Same category of tool, completely different retrieval logic.

What Each Platform Actually Wants

This is where the "optimize for AI" advice collapses, because each platform responds to different signals.

Perplexity is the freshness engine. Qwairy's platform analysis shows content updated within 30 days gets 3.2x more citations than older material. After 180 days, citation rates drop 30% from baseline. After a year, they're cut in half. Reddit also accounts for roughly 24% of Perplexity's total citations, which is wild when you think about the implications for B2B brands that have zero Reddit presence. If you're not showing up in subreddit discussions about your category, Perplexity might not know you exist.

ChatGPT responds to authority signals and structured content. It leans on Wikipedia for about 5% of citations (more than competitors), and varies its retrieval layer by industry. In hospitality, it cited official hotel websites 38% of the time, roughly double what other platforms did. That kind of industry-specific variation means your ChatGPT visibility is partly determined by which vertical you're in, not just how good your content is.

Claude does something interesting that other platforms don't: it cites user-generated content at 2 to 4 times the rate of competitors. In food and beverage specifically, Yext's research found Claude cited user-generated sources nearly 10 times more frequently than Gemini. It also has the highest attribution accuracy at 91.2%, which means it's pickier about sourcing but more honest about where it got the information. If your brand has strong review coverage, Claude is probably your most favorable platform right now.

Gemini behaves closest to traditional Google. It favors official websites, structured data markup, and Google ecosystem signals like Business Profile completeness. If local visibility matters, aim for 50+ reviews with a 4.2+ star rating. Gemini is the platform where your existing SEO investment transfers most directly, and the one where competitor optimization matters least because the signals are mostly things you already control.

The 80% That Works Everywhere (and the 20% That Doesn't)

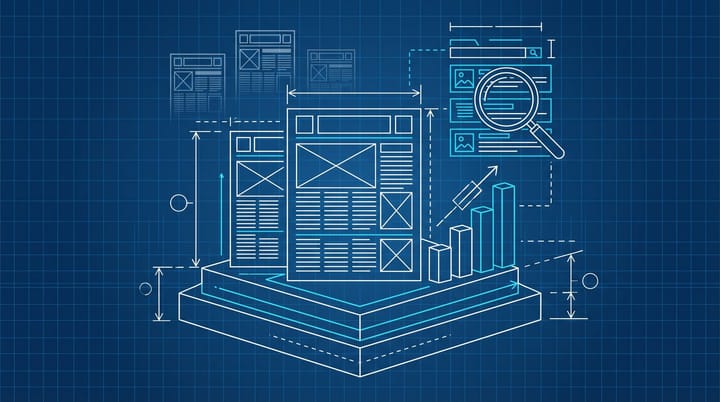

To be fair, there is a shared baseline. GenOptima's Q1 2026 benchmark and the ConvertMate data both point to the same foundational set: a TL;DR in the opening 60 words, clean H2/H3 hierarchy, author credentials visible on the page, Article schema with dateModified, and statistics with cited sources. That baseline covers roughly 80% of what drives multi-platform visibility.
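For the schema item in that baseline, here is a minimal sketch of what Article markup with dateModified could look like, generated from Python. The helper name `article_jsonld` and the sample headline, author, and dates are placeholders made up for illustration; only the schema.org property names are standard.

```python
import json
from datetime import date


def article_jsonld(headline, author_name, published, modified):
    """Build a schema.org Article object and return it as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": published.isoformat(),
        # dateModified is the field the baseline calls out; bump it on every real update.
        "dateModified": modified.isoformat(),
    }
    return json.dumps(data, indent=2)


# Placeholder values, not taken from any real page.
snippet = article_jsonld(
    "Example headline",
    "Jane Doe",
    date(2026, 1, 5),
    date(2026, 2, 1),
)
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```

The printed script tag is what would go in the page head. Whether any given AI platform actually reads it is a separate question the benchmarks above only speak to in aggregate.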

The problem is the other 20%. And for most B2B brands, that 20% is where the competition actually happens. Listicle-formatted content gets cited 5x more often than standard blog posts across platforms, according to GenOptima. Pages with 20,000+ characters get 4.3x more citations than thin content. Original research generates 3.7x higher citation likelihood. Those are the differentiators, but they land differently depending on which platform your audience is using.

We covered this from a different angle recently. The piece on why you can rank first on Google and still be invisible to ChatGPT was about one half of the problem. The more uncomfortable half is that even within AI search, there's no single leaderboard. You have to think about this platform by platform.

The Audit That Actually Tells You Something

If I were advising a B2B team on this, here's what I'd do before spending another dollar on GEO consulting.

First, figure out where your audience actually asks questions. If they're using Perplexity, freshness is your lever. If they're on ChatGPT, authority and structure matter more. If you don't know, check your referral traffic. ChatGPT traffic converts at 15.9% (roughly 9x organic), Perplexity at 10.5% (6x organic). Even small numbers in those referral buckets tell you something about platform preference.
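If your analytics tool exports raw referrer URLs, a rough way to bucket them by platform might look like the sketch below. The hostname-to-platform mapping is my assumption about which referrers each product sends; check it against what actually shows up in your own logs before trusting the counts.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames per platform; adjust to match your own referral data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
}


def platform_for(referrer_url):
    """Return the AI platform name for a referrer URL, or None if it is not one."""
    host = urlparse(referrer_url).netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host)


def count_ai_referrals(referrer_urls):
    """Count sessions per AI platform, ignoring every other referrer."""
    counts = Counter()
    for url in referrer_urls:
        platform = platform_for(url)
        if platform:
            counts[platform] += 1
    return counts


# Made-up sample referrers for illustration.
sample = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=example",
    "https://www.google.com/",
    "https://chat.openai.com/",
]
print(count_ai_referrals(sample))
```

Even a handful of sessions in one bucket and none in another is a hint about where your audience is asking questions.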

Second, check what your competitors are doing on each platform. Run your top 10 commercial queries through ChatGPT, Perplexity, and Gemini. Write down who gets cited. The 11% overlap number means the competitive landscape looks completely different on each one. You might be invisible on one and dominant on another without knowing it.
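Once you've written down the cited URLs per platform, you can compute your own overlap number. The sketch below uses one plausible definition (share of cited domains that appear on more than one platform); the Yext methodology may differ, and the sample URLs are invented.

```python
from urllib.parse import urlparse


def domain(url):
    """Reduce a URL to its hostname, dropping a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def cross_platform_share(citations):
    """citations maps platform name -> list of cited URLs.

    Returns (share of domains cited on 2+ platforms, sorted list of those domains).
    """
    seen = {}
    for platform, urls in citations.items():
        for url in urls:
            seen.setdefault(domain(url), set()).add(platform)
    if not seen:
        return 0.0, []
    shared = sorted(d for d, platforms in seen.items() if len(platforms) > 1)
    return len(shared) / len(seen), shared


# Invented citations for one query set, for illustration only.
citations = {
    "chatgpt": ["https://www.example.com/guide", "https://vendor-a.example/pricing"],
    "perplexity": ["https://example.com/blog", "https://forum.example.org/thread/1"],
    "gemini": ["https://vendor-b.example/docs"],
}
share, shared_domains = cross_platform_share(citations)
print(share, shared_domains)  # 0.25 ['example.com']
```

Run it on your top 10 commercial queries and the shared list tells you which competitors are winning everywhere versus on one platform only.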

Third, match your content update cadence to Perplexity's freshness requirements if that platform matters for your category. Content refreshed within 30 days earns 3.2x more citations. That's a specific, testable number. Set a 30-day content review cycle for your top 20 pages and measure whether Perplexity citations increase over the following quarter.
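A 30-day review cycle is easy to automate if you have a last-modified date for each page. Here's a minimal sketch; the page paths and dates are made up, and where the dates come from (CMS export, sitemap, spreadsheet) is up to you.

```python
from datetime import date

REVIEW_WINDOW_DAYS = 30  # the freshness window cited above


def pages_due_for_review(last_modified, today, window_days=REVIEW_WINDOW_DAYS):
    """Return (url, age_in_days) for pages older than the window, oldest first."""
    due = [
        (url, (today - modified).days)
        for url, modified in last_modified.items()
        if (today - modified).days > window_days
    ]
    return sorted(due, key=lambda item: item[1], reverse=True)


# Hypothetical pages and last-modified dates.
pages = {
    "/pricing": date(2026, 2, 20),
    "/guide": date(2025, 12, 1),
    "/compare": date(2026, 1, 15),
}
print(pages_due_for_review(pages, today=date(2026, 3, 1)))
# [('/guide', 90), ('/compare', 45)]
```

Log the Perplexity citation counts for the same pages each month and you have the before-and-after comparison for the quarter.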

If you built the DIY AI visibility tracker we covered last week, you can actually measure this platform by platform. That's kind of the whole point of building it.

Where This Gets Uncomfortable for the GEO Industry

Honestly, the part of this that surprises me isn't the data itself. It's how confidently the GEO consulting market has sold a single-strategy solution for what is clearly a multi-platform problem. A lot of these firms are running the same playbook they ran for traditional SEO: audit, optimize, repeat. One strategy, one set of recommendations. The benchmark data suggests that approach has a ceiling, and it's probably lower than most people think.

The 92% of marketers who say they plan to optimize for AI search are mostly planning to do one version of it. The 40.6% who are actually executing are probably doing one version of it. The brands that figure out platform-specific strategies first are going to buy visibility at prices that won't be available in a year. Same pattern as early Meta placement launches, early programmatic, early everything. The fragmentation is the opportunity, but only if you see it as four separate problems instead of one.

I'm not saying you need four completely separate content strategies. That would be absurd for most teams. But if you're treating "AI search" as a monolith, you're optimizing for an average that doesn't exist on any actual platform.