AI Visibility Tools Cost $500 a Month. The Entire Stack Costs $100 to Build Yourself.

The AI search visibility category has a pricing problem, and it is not the one the vendors want you to think about.

If you are tracking how your brand shows up in ChatGPT, Perplexity, Gemini, and Google's AI Overviews, you are probably paying several hundred dollars per month for the privilege. Profound charges $499/month for 100 prompts. Ahrefs Brand Radar runs $699/month if you want all platforms bundled. Gauge starts at $99 but climbs to $599 for multi-platform coverage. Even Otterly AI, the budget option, hits $489/month at the premium tier.

Julian Hooks, the Director of SEO and AEO at Asurion, published a piece on Search Engine Land this week that should make every one of those vendors a little uncomfortable. He built a custom AI visibility tracker. Five AI surfaces. Custom 5-point scoring. Full data persistence. Total monthly cost: under $100.

He did not hire a developer. He used Replit Agent, described what he wanted in plain English, and had a working tool in a weekend.

The uncomfortable math behind AI visibility pricing

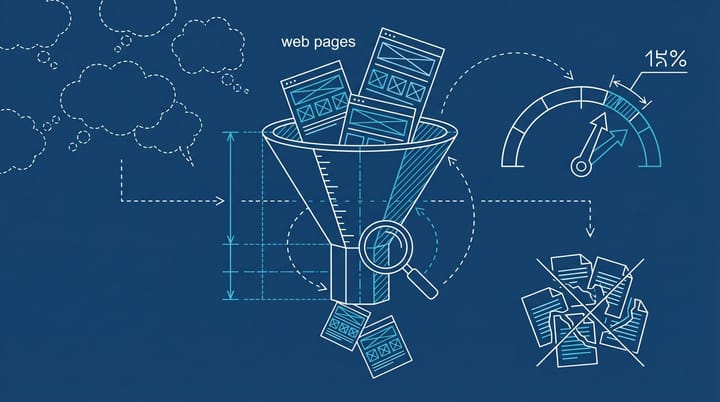

Here is what most AI visibility tools are actually selling you: API access to the same large language models you can query yourself, wrapped in a dashboard with some charting on top. The technical complexity is real, but it is not $500/month real. Not anymore.

Hooks' stack runs on three components: DataForSEO APIs for pulling responses from ChatGPT, Claude, Gemini, and Google's AI features on a pay-as-you-go basis (roughly $0.002 per query in live mode); Replit Agent at $20/month for the development environment; and optional direct API connections to OpenAI, Anthropic, and Google for verification. The combined cost stays comfortably under $100/month even at moderate query volumes.
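To make the architecture concrete, here is a minimal Python sketch of the persistence side: query each surface for each prompt and write the answers to SQLite. It is not Hooks' code. The `fetch` function is a hypothetical stand-in for whatever wraps DataForSEO or a direct vendor API, and the table layout and surface labels are assumptions.

```python
import sqlite3
from datetime import date
from typing import Callable, Optional

# Hypothetical labels for the five surfaces Hooks tracks.
SURFACES = ["chatgpt", "claude", "gemini", "google_ai_mode", "google_ai_overviews"]

# A fetcher takes (surface, prompt) and returns the answer text.
# In a real build this would wrap DataForSEO or a vendor SDK; here it is injected.
Fetcher = Callable[[str, str], str]


def init_db(conn: sqlite3.Connection) -> None:
    """Create the results table on first run."""
    conn.execute(
        """CREATE TABLE IF NOT EXISTS responses (
               run_date TEXT NOT NULL,
               surface  TEXT NOT NULL,
               prompt   TEXT NOT NULL,
               answer   TEXT NOT NULL
           )"""
    )


def run_once(conn: sqlite3.Connection, prompts: list[str], fetch: Fetcher,
             run_date: Optional[str] = None) -> int:
    """Query every surface for every prompt, store the answers, return the row count."""
    run_date = run_date or date.today().isoformat()
    rows = [(run_date, surface, prompt, fetch(surface, prompt))
            for prompt in prompts for surface in SURFACES]
    conn.executemany("INSERT INTO responses VALUES (?, ?, ?, ?)", rows)
    conn.commit()
    return len(rows)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    init_db(conn)
    # Stub fetcher so the sketch runs without API keys.
    fake_fetch = lambda surface, prompt: f"[{surface}] answer to: {prompt}"
    print(run_once(conn, ["best phone insurance"], fake_fetch))  # 5: one row per surface
```

Injecting the fetcher keeps the API layer swappable, which matters if you later add direct OpenAI, Anthropic, or Google calls for verification alongside DataForSEO.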

The real difference between this approach and the $500/month tools is not data quality. It is customization. Hooks needed a specific 5-point scoring rubric that measured brand inclusion, accuracy, pricing correctness, actionability, and citation quality. No off-the-shelf tool offered exactly that. So he built what he needed, and nothing he did not.
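A rubric like this is easy to encode. The sketch below assumes one point per criterion, so each answer scores 0 to 5; Hooks' exact definitions and weights may differ. Brand inclusion, pricing, and citations get crude automatic checks, while accuracy and actionability are passed in as judgments from a human reviewer or a separate grading step. `score_answer`, `RubricResult`, and the "Acme Protect" brand are hypothetical.

```python
import re
from dataclasses import dataclass


@dataclass
class RubricResult:
    brand_inclusion: bool
    accuracy: bool
    pricing_correct: bool
    actionable: bool
    cites_source: bool

    @property
    def score(self) -> int:
        # One point per criterion met: 0 (nothing) to 5 (everything).
        return sum([self.brand_inclusion, self.accuracy, self.pricing_correct,
                    self.actionable, self.cites_source])


def score_answer(answer: str, brand: str, correct_price: str,
                 accurate: bool, actionable: bool) -> RubricResult:
    """Score one AI answer. Accuracy and actionability come from a reviewer."""
    mentions_brand = brand.lower() in answer.lower()
    # Pricing only counts if the brand appears and the expected price string is present.
    price_ok = mentions_brand and correct_price in answer
    # Crude citation check: at least one http(s) URL in the answer text.
    cites = re.search(r"https?://\S+", answer) is not None
    return RubricResult(mentions_brand, accurate, price_ok, actionable, cites)


if __name__ == "__main__":
    answer = "Acme Protect costs $17.99/month. Details: https://example.com/plans"
    result = score_answer(answer, "Acme Protect", "$17.99", accurate=True, actionable=False)
    print(result.score)  # 4: every criterion except actionability
```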

I think this is where the conversation gets genuinely interesting for most marketing teams. The SaaS tools are not necessarily overcharging for the data. They are charging for the abstraction layer: the onboarding, the support, the dashboard that a marketing manager can use without touching code. That has value. But the gap between what the abstraction costs and what the underlying infrastructure costs is enormous. And it is shrinking fast.

This is a vibe coding story, not just a tools story

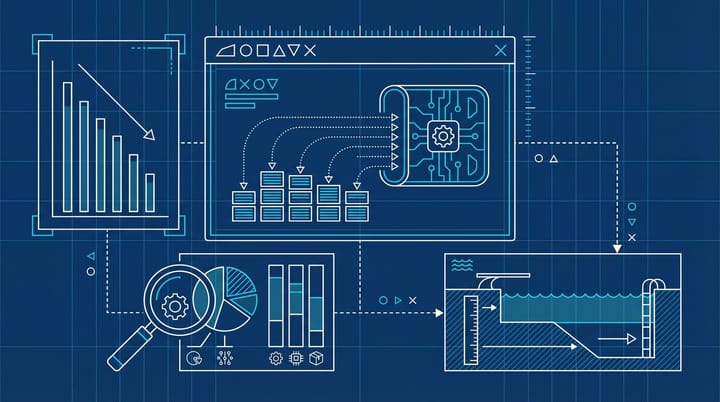

If you are unfamiliar with the term, vibe coding means building applications by describing what you want in natural language and letting an AI agent handle the implementation. You focus on the outcome. The AI writes the code.

The adoption numbers are hard to ignore. According to Second Talent, 63% of vibe coding users identify as non-developers. GitHub reports that 46% of all new code is now AI-generated. Among Y Combinator's Winter 2025 cohort, 21% of startups had codebases that were 91% or more AI-generated.

On paper, that sounds like an endorsement. And in a lot of cases it is. But the quality data tells a messier story. AI co-authored code contains 1.7x more major issues than human-written code, and 45% of AI-generated code samples contain OWASP Top-10 vulnerabilities. So the approach works, but you probably should not run its output in production without someone reviewing it. (This feels obvious, but I have seen enough "it works on my machine" deployments to know it is not.)

For internal monitoring tools, though, the risk profile is genuinely different. You are not shipping customer-facing software. You are querying APIs and storing results in a database. The blast radius of a bug is a wrong data point in your spreadsheet, not a security breach. That changes the calculus on whether vibe coding is appropriate for this particular use case.

What the enterprise tools actually get right

I do not want to give the impression that the $300-$500/month tools are pure margin. Some of them earn their pricing, and it is worth being specific about why.

Semrush's AI Visibility Toolkit integrates with their broader SEO suite, which means your AI visibility data sits alongside your keyword rankings, backlink profile, and site audit. That context is genuinely useful if you are already in their ecosystem. Gauge focuses on actionable recommendations rather than just monitoring, telling you what to change in your content rather than showing you a number.

The problem is that most teams do not need the full suite. They need to answer three questions: is our brand showing up in AI answers, is the information accurate, and how do we compare to competitors for the queries that matter. That is a monitoring problem. And monitoring problems have historically been terrible foundations for premium SaaS pricing. Ask anyone who remembers when uptime monitoring tools charged hundreds per month before UptimeRobot offered a free tier that did 80% of the job.
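The first and third of those questions mostly reduce to counting. A minimal sketch, assuming you already have a batch of stored answers for one query set: compute how often each brand, yours and your competitors', is mentioned. Plain substring matching is an assumption that will miss misspellings and produce false positives when a brand name sits inside a longer word. The brand names are hypothetical, and accuracy, the second question, needs a scoring rubric rather than a counter.

```python
def mention_rates(answers: list[str], brands: list[str]) -> dict[str, float]:
    """Fraction of answers mentioning each brand at least once (case-insensitive)."""
    if not answers:
        return {brand: 0.0 for brand in brands}
    lowered = [a.lower() for a in answers]
    return {
        brand: sum(brand.lower() in text for text in lowered) / len(answers)
        for brand in brands
    }


if __name__ == "__main__":
    answers = [
        "Acme and Globex both offer device plans.",
        "Globex is the most popular choice.",
        "Consider Initech for laptops.",
    ]
    for brand, rate in mention_rates(answers, ["Acme", "Globex", "Initech"]).items():
        print(f"{brand}: {rate:.0%}")
```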

The analogy that keeps coming back to me is email marketing in 2012. Enterprise platforms charged thousands per month for what was fundamentally an API call to send an email through a relay. Then Mailchimp offered a free tier, Sendy let you run your own instance on Amazon SES for pennies, and the entire category had to justify its existence on workflow and deliverability rather than raw sending capability.

AI visibility tools seem to be approaching that same inflection point.

The five AI surfaces worth actually tracking

Hooks' tracker monitors ChatGPT, Claude, Gemini, Google AI Mode, and Google AI Overviews. That list is not arbitrary. These are the surfaces where purchase-intent queries are actively shifting.

AI Overviews now appear in roughly 30 to 50% of Google searches, depending on whose measurement you trust. The range is wide because methodology matters here, but the direction is not debatable. The click impact is equally clear: organic CTR for queries featuring AI Overviews drops by roughly 35% compared to queries without them. The exposure is also concentrated: 99% of AI Overviews are triggered by informational searches. That is the category where your content is most likely competing with a generated answer instead of another website.
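To see what a 35% CTR drop means for traffic, blend clicks from queries with and without an AI Overview. The baseline CTR and AI Overview share below are illustrative assumptions, not measured figures, and applying one uniform drop to every affected query is a simplification.

```python
def expected_clicks(impressions: int, baseline_ctr: float, aio_share: float,
                    aio_ctr_drop: float = 0.35) -> float:
    """Expected organic clicks when a share of queries shows an AI Overview."""
    plain = impressions * (1 - aio_share) * baseline_ctr
    with_aio = impressions * aio_share * baseline_ctr * (1 - aio_ctr_drop)
    return plain + with_aio


if __name__ == "__main__":
    # 10,000 impressions at a 5% baseline CTR, with 40% of queries showing an AI Overview.
    print(round(expected_clicks(10_000, 0.05, 0.40)))  # 430, versus 500 with no AI Overviews
```

Under those assumptions, the 40% of queries with an Overview cost 70 clicks, a 14% hit to total organic clicks.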

If you are not tracking your visibility across these surfaces, you are making decisions about an increasingly large share of your search landscape based on incomplete data.

We covered this problem from a different angle recently. Ranking in the top 10 on Google does not guarantee you appear in AI Overviews. They operate on separate selection logic with different signals. Monitoring traditional rankings and assuming AI visibility follows is a mistake I see teams make constantly, and the gap between the two is getting wider.
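If you already track rankings, a simple first check is to list queries where you rank in the top 10 but are missing from the AI Overview. A sketch, assuming you have a per-query organic rank and a flag for whether your site was cited in the Overview, with `None` meaning no Overview appeared, so there is nothing to miss. The data shape and query strings are hypothetical.

```python
from typing import Optional

# query -> (organic rank or None if unranked, cited in AI Overview? or None if no Overview shown)
Observations = dict[str, tuple[Optional[int], Optional[bool]]]


def top10_but_not_cited(obs: Observations) -> list[str]:
    """Queries where a top-10 organic ranking did not translate into an AI Overview citation."""
    return sorted(
        query
        for query, (rank, cited) in obs.items()
        if rank is not None and rank <= 10 and cited is False
    )


if __name__ == "__main__":
    sample = {
        "phone insurance cost": (3, True),
        "cracked screen repair": (5, False),
        "best warranty for laptops": (12, False),
        "is device protection worth it": (1, False),
        "phone insurance login": (2, None),
    }
    print(top10_but_not_cited(sample))  # ['cracked screen repair', 'is device protection worth it']
```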

How to build one if you have a weekend free

Hooks' five-step framework is worth summarizing because it applies to more than just this one project:

First, write a requirements document before touching any tool. Define the core problem, the features, the data inputs, and the expected outputs. This sounds like overhead. It saves you from rebuilding the thing three times.

Second, ask the AI agent what you are missing. Literally prompt it with "what blind spots does this plan have?" and "what are the most likely technical failures?" before you build anything.

Third, build one feature at a time. Test it. Get it working. Then add the next thing. The temptation to describe the entire project at once is strong and almost always backfires.
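Here is what "one feature at a time" can look like in practice: a single narrowly scoped function plus a pytest-style test that has to pass before you move on. The feature (extracting which domains an AI answer links to, one possible input to citation quality), the function name, and the URL pattern are hypothetical choices for illustration.

```python
import re
from urllib.parse import urlparse


def cited_domains(answer: str) -> list[str]:
    """Unique domains linked in an AI answer, in order of first appearance."""
    domains: list[str] = []
    # Stop a URL at whitespace or a closing bracket/parenthesis.
    for url in re.findall(r"https?://[^\s)\]]+", answer):
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host and host not in domains:
            domains.append(host)
    return domains


def test_cited_domains() -> None:
    answer = ("See https://www.Example.com/plans and "
              "(https://docs.example.org/x). Also https://example.com/faq")
    assert cited_domains(answer) == ["example.com", "docs.example.org"]
    assert cited_domains("no links here") == []


if __name__ == "__main__":
    test_cited_domains()
    print("feature 1 passes; move on to feature 2")
```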

Fourth, feed the AI official API documentation links. Do not let it guess at implementation details. Hooks specifically flagged API authentication failures as the most common problem, and the fix is almost always pointing the agent at the actual docs.
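Authentication failures are also easier to debug when the code fails loudly before any request goes out. A minimal sketch, assuming HTTP Basic auth with an API login and password, the scheme DataForSEO documents for its v3 API (verify against the current docs), and hypothetical environment variable names. It only builds the header; it does not call any endpoint.

```python
import base64
import os


def basic_auth_header(login_var: str = "DATAFORSEO_LOGIN",
                      password_var: str = "DATAFORSEO_PASSWORD") -> dict[str, str]:
    """Build an HTTP Basic Authorization header from environment variables.

    Raises immediately if either variable is missing or empty, so a bad
    setup surfaces as a clear error instead of an opaque 401 later.
    """
    login = os.environ.get(login_var, "")
    password = os.environ.get(password_var, "")
    missing = [name for name, value in ((login_var, login), (password_var, password))
               if not value]
    if missing:
        raise RuntimeError(f"Missing credentials in environment: {', '.join(missing)}")
    token = base64.b64encode(f"{login}:{password}".encode("utf-8")).decode("ascii")
    return {"Authorization": f"Basic {token}"}


if __name__ == "__main__":
    try:
        basic_auth_header()
        print("Credentials found; header ready.")  # never print the header itself
    except RuntimeError as err:
        print(err)
```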

Fifth, fork your project before every major change. Save working versions. The ability to roll back is what keeps a weekend project from becoming a weekend of frustration.

Total build time: a weekend. Ongoing cost: $20/month for Replit plus API usage, which for most brands monitoring a few dozen queries weekly keeps the total well under $100.
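The arithmetic behind that estimate is worth checking against your own volumes. A sketch using the article's figures ($20/month for Replit, roughly $0.002 per query, five surfaces); the per-query price is the live-mode estimate quoted earlier, actual DataForSEO rates may vary by endpoint, and optional direct API verification calls are not included.

```python
def monthly_cost(prompts_per_week: int, surfaces: int = 5,
                 price_per_query: float = 0.002, fixed_monthly: float = 20.0) -> float:
    """Estimated monthly spend: fixed tooling plus pay-as-you-go API queries."""
    weeks_per_month = 52 / 12  # about 4.33
    queries = prompts_per_week * surfaces * weeks_per_month
    return fixed_monthly + queries * price_per_query


def break_even_prompts(budget: float = 100.0, surfaces: int = 5,
                       price_per_query: float = 0.002, fixed_monthly: float = 20.0) -> float:
    """Weekly prompts you can run before total monthly spend reaches the budget."""
    per_prompt_monthly = surfaces * (52 / 12) * price_per_query
    return (budget - fixed_monthly) / per_prompt_monthly


if __name__ == "__main__":
    print(f"50 prompts/week: ${monthly_cost(50):.2f}/month")
    print(f"Prompts/week before $100: {break_even_prompts():.0f}")
```

At 50 prompts a week that works out to roughly $22/month, and total spend only reaches $100 at a little over 1,800 prompts a week. For a few dozen tracked queries, the fixed subscription, not query volume, dominates the bill.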

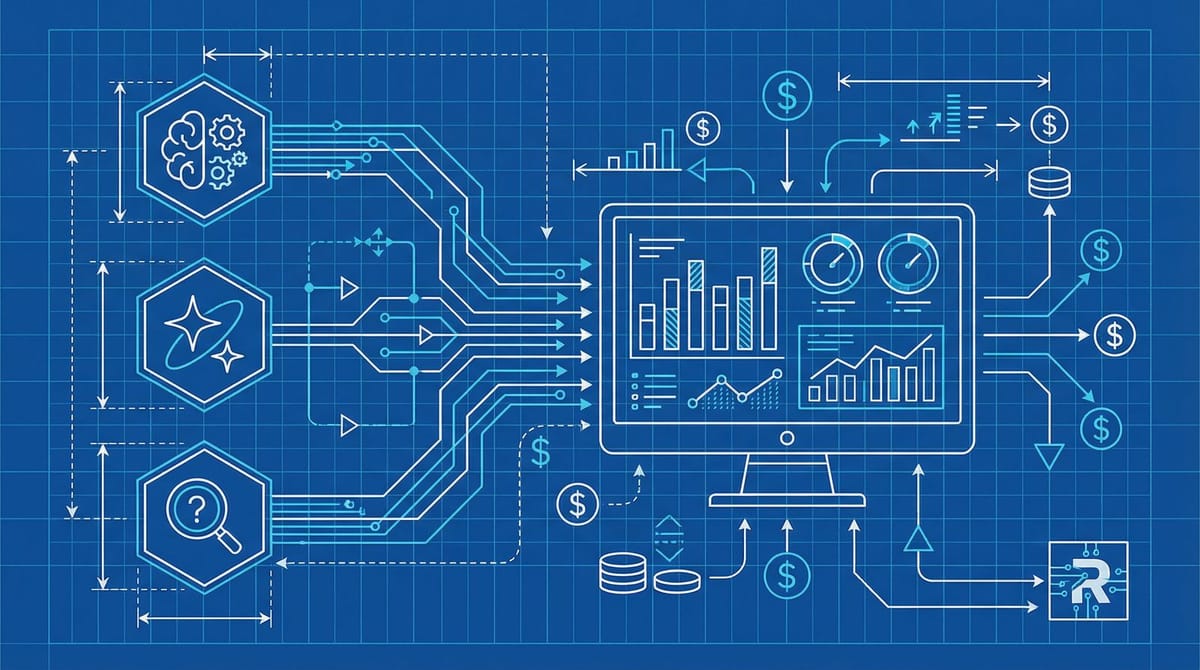

The pricing correction that is already underway

I think by Q4 2026, at least two or three of the current AI visibility SaaS tools will have either dropped their pricing significantly or added free tiers for basic monitoring. The margin structure simply does not survive once enough practitioners realize the underlying cost is $100/month in API calls.

The vendors who keep their pricing power will be the ones who moved up the value chain from monitoring to actionable optimization. Just showing you where you appear is table stakes. The ones who can diagnose why you are not appearing and tell you what specifically to change in your content will justify $300 or $500 per month. Everyone else is selling a dashboard on top of someone else's API.

And honestly, that correction is probably overdue. Charging $500/month to poll ChatGPT's API on a schedule was always going to be a temporary business model. The question was never whether someone would build the cheaper version. It was when.

Notice Me Senpai Editorial