Cloudflare Just Put a Number on What AI Crawlers Cost Publishers

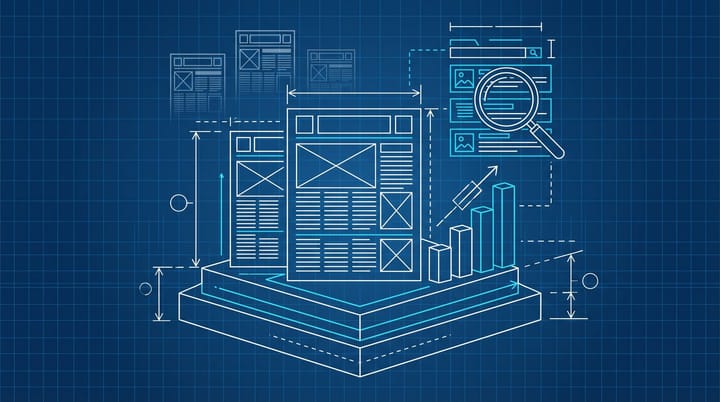

Cloudflare framed its new research with ETH Zurich as "rethinking cache for the AI era," which is the diplomatic way of saying that the economics of running a website just broke for a lot of publishers and almost nobody outside of a few CDN engineers noticed. The paper, co-authored by Yazhuo Zhang, Jinqing Cai, Avani Wildani and Ana Klimovic and presented at ACM's 2025 Symposium on Cloud Computing, buries a number that every publisher and marketer should be quoting. When pure human traffic hits a CDN, the cache miss ratio sits around 17.3 percent. Add just 25 percent AI crawler traffic into that mix and the miss rate nearly doubles to 32.2 percent.

That is not a rounding error. It is a direct line from "AI search is taking some of our traffic" to "our server bill is going up even though fewer humans are visiting."

I have been watching this story bend the wrong way for months, and this is the first paper I have seen that actually quantifies the mechanism. Everyone else is still arguing about referral traffic. This one is about what happens inside the stack, where it hurts.

The cache math nobody priced in

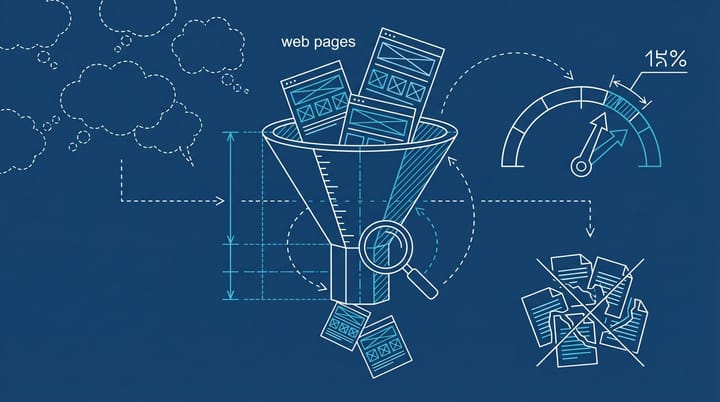

Here is the part the marketing press keeps missing. Traditional CDN caches use LRU (least recently used) eviction, which works because human readers cluster. Ten thousand people read the same homepage, the same top articles, the same trending piece. The cache gets hot for the popular stuff and cold for everything else. That is how CDN economics have worked for roughly twenty years.

AI crawlers do not do that. They scan long-tail content. They visit pages no human has clicked on in six months. According to the published paper, more than 90 percent of pages processed by Common Crawl are unique by content, and AI crawlers maintain a 70 to 100 percent unique access ratio during iterative search loops. What this means in plain English: the pages AI bots hit are almost never the pages already in your cache. So nearly every crawler request becomes a cache miss, which becomes an origin fetch, which becomes a database query, which becomes server load, which becomes a bigger invoice from your hosting provider. Worse, each of those one-off pages takes up a cache slot that a popular page was using, so human readers start missing too.
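To make the mechanism concrete, here is a toy simulation, not the paper's methodology. It assumes human requests follow a Zipf-like popularity curve, the crawler only ever requests pages nobody has fetched before (the 100 percent end of the unique-access range), and the cache is a plain LRU. The page count, cache size, and request volume are made-up parameters, so the output will not reproduce the 17.3 and 32.2 figures; it only shows the direction of the effect.

```python
import random
from collections import OrderedDict
from itertools import accumulate


class LRUCache:
    """Fixed-capacity cache that evicts the least recently used key."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.entries = OrderedDict()

    def access(self, key):
        """Return True on a hit; on a miss, admit the key and return False."""
        if key in self.entries:
            self.entries.move_to_end(key)  # mark as most recently used
            return True
        self.entries[key] = True
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)  # evict least recently used
        return False


def miss_ratio(crawler_share, requests=50_000, pages=10_000, capacity=500, seed=7):
    """Simulate a mixed request stream and return the overall miss ratio.

    All parameters are illustrative assumptions, not measured values.
    """
    rng = random.Random(seed)
    # Human popularity: weight 1/rank, so a few pages get most of the reads.
    cum_weights = list(accumulate(1.0 / rank for rank in range(1, pages + 1)))
    human_keys = rng.choices(range(pages), cum_weights=cum_weights, k=requests)

    cache = LRUCache(capacity)
    misses = 0
    next_crawl_page = pages  # crawler walks long-tail pages humans never request
    for human_key in human_keys:
        if rng.random() < crawler_share:
            key = next_crawl_page  # always a page the cache has never seen
            next_crawl_page += 1
        else:
            key = human_key
        if not cache.access(key):
            misses += 1
    return misses / requests


if __name__ == "__main__":
    for share in (0.0, 0.25):
        print(f"crawler share {share:.0%}: miss ratio {miss_ratio(share):.1%}")
```

The crawler requests hurt twice in this model: each one is a guaranteed miss, and each one pushes a human-popular page closer to eviction.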

Cloudflare's own network numbers say 32 percent of total traffic now comes from automated sources, and the company is processing more than 10 billion AI bot requests per week. That is the scale the 17.3 vs 32.2 delta applies to. The PPC Land writeup of the research puts AI crawlers specifically at about 4.2 percent of all HTML requests across Cloudflare's network as of late 2025. Small share of the pie. Wildly disproportionate share of the bill.

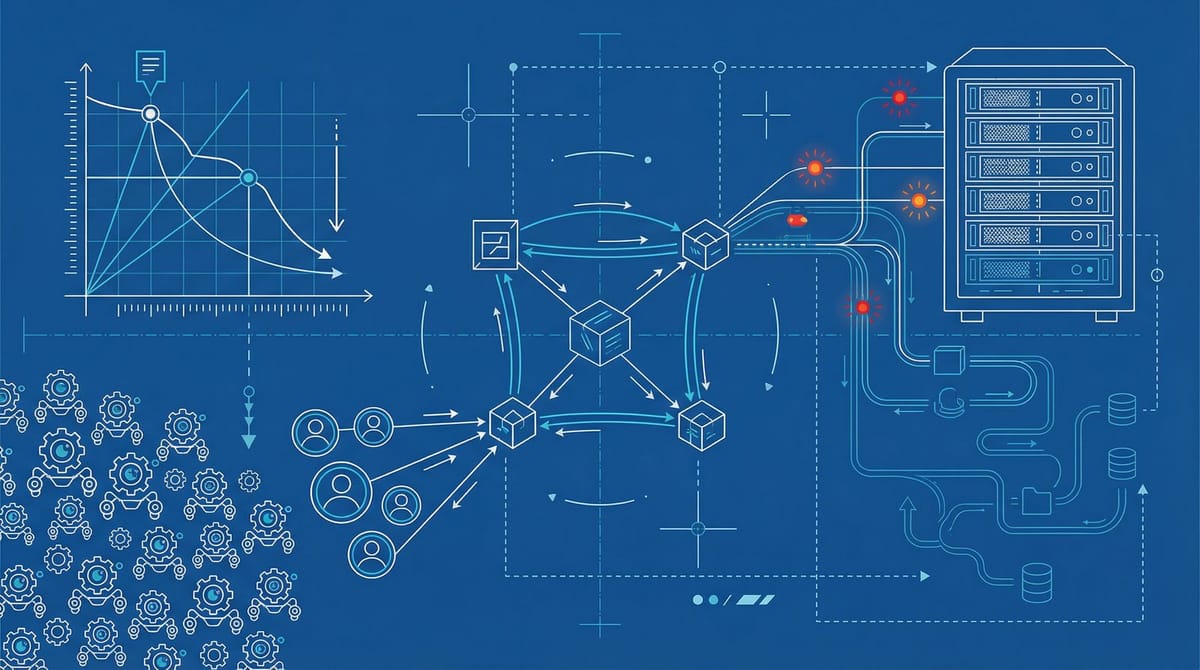

Wikimedia already lived through the cost side

If this sounds theoretical, it is not. Wikimedia published its own postmortem in 2025 showing a 50 percent jump in multimedia bandwidth that was driven almost entirely by AI scrapers. Their internal breakdown is the one that made me sit up: 65 percent of their resource-intensive traffic was coming from bots, but bots were only 35 percent of pageviews. Each bot request was costing them roughly three times more in server resources than each human request. TechCrunch's coverage has the full context.
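The "roughly three times" figure can be sanity-checked with back-of-envelope arithmetic. This sketch is my own reading, not Wikimedia's calculation: it assumes the 65 percent and 35 percent shares can be compared directly, and that humans make up the remainder of both. The function name is hypothetical.

```python
def relative_cost_per_request(bot_share_of_load, bot_share_of_requests):
    """Estimate how much more load one bot request carries than one human request.

    Assumes humans account for the remainder of both load and requests.
    """
    human_share_of_load = 1.0 - bot_share_of_load
    human_share_of_requests = 1.0 - bot_share_of_requests
    bot_load_per_request = bot_share_of_load / bot_share_of_requests
    human_load_per_request = human_share_of_load / human_share_of_requests
    return bot_load_per_request / human_load_per_request


# Shares quoted above: bots are 65% of expensive traffic, 35% of pageviews.
ratio = relative_cost_per_request(0.65, 0.35)
print(f"each bot request carries about {ratio:.1f}x the load of a human request")
```

Under those assumptions the ratio lands a little above three, which is consistent with the rough figure quoted above.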

Read the Docs, which hosts developer documentation and is about the least dramatic kind of website there is, reported hitting 73 terabytes of HTML scraping in a single month from AI crawlers. That is not a content platform. That is a docs host. The kind of numbers nobody budgets for.

The publisher trap that nobody wants to name out loud

Here is what is grim about this, and what I think the coverage this week has been too polite about. Blocking AI crawlers does not make the problem go away. We already covered how Yahoo got cited 30,000 times by AI engines while blocking Google-Extended, and the same pattern holds across most of the AI search stack. The bots that matter for your cache bill are not always the ones you can robots.txt your way out of.

So publishers end up in a bad position. Serving AI traffic costs them real money. Blocking it costs them future visibility in the surfaces where people are actually going to be asking questions. Neither path actually protects the thing they need most, which is a profitable human audience. This is the part that does not fit neatly into a LinkedIn post. There is not a clean answer yet.

In most cases I have seen, publishers treat this as either a tech problem ("add more caching layers") or a policy problem ("block the bots"), when it is closer to a pricing problem. You are subsidizing the AI training pipeline with your infrastructure budget. Cloudflare shipped a pay-per-crawl product last year specifically to address this. Almost nobody is using it yet, which tells you how uncomfortable publishers still are with charging for what used to be free.

Three moves for any publisher staring at a flat traffic graph

This is mostly an infrastructure conversation, but if you sit in an in-house or client-side marketing seat for a site you care about, there are three things worth doing by Friday.

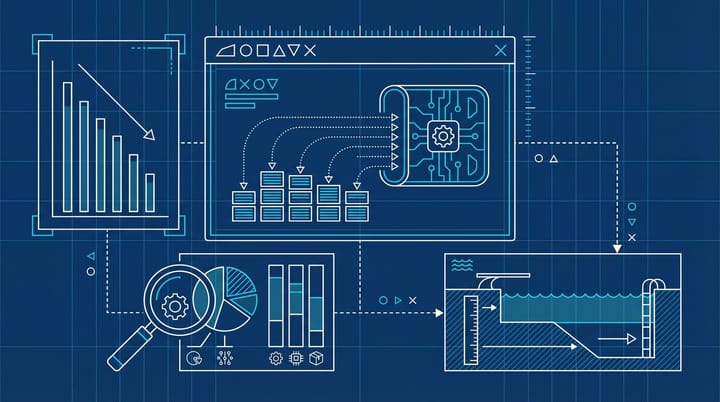

First, pull your hosting or CDN spend for the last six months alongside your human session count. If spend is up and humans are flat or down, you are probably already paying the AI crawler tax. I would want to see a chart of cost per human session over time. That is the real metric. Most dashboards do not show it, which is almost the point.
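If your dashboard will not give you that metric, it is a one-liner to compute from an export. A minimal sketch, assuming you can get monthly spend and human session counts as rows; the function name and the numbers in the usage example are placeholders, not real data.

```python
def cost_per_human_session(monthly_rows):
    """Return (month, spend / human_sessions) for each row.

    monthly_rows: iterable of (month, hosting_spend, human_sessions) tuples.
    """
    results = []
    for month, spend, human_sessions in monthly_rows:
        if human_sessions <= 0:
            raise ValueError(f"no human sessions recorded for {month}")
        results.append((month, spend / human_sessions))
    return results


if __name__ == "__main__":
    # Placeholder figures purely for illustration.
    rows = [
        ("month 1", 1000.0, 100_000),
        ("month 6", 1200.0, 80_000),
    ]
    for month, cost in cost_per_human_session(rows):
        print(f"{month}: {cost:.4f} per human session")
```

In the placeholder rows, spend rises 20 percent while sessions fall 20 percent, so cost per human session goes up by half. That is the shape to look for in your own numbers.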

Second, check whether your stack supports bot-segmented caching. Cloudflare, Fastly, and Akamai all have at least partial tools for separating bot and human traffic, though the configurations are fiddly and nobody has a great preset. If you can route AI bot traffic to a separate origin pool or a read-only mirror, your human visitors stop paying for the scrape load. Your engineering team has probably not prioritized this yet because nobody on the marketing side has asked.
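The routing idea itself is simple, even if the vendor configuration is not. The sketch below is plain Python, not any CDN's actual rule syntax. The pool names are hypothetical, the crawler token list is a small illustrative sample rather than a complete one, and User-Agent strings can be spoofed, so a real setup would lean on the CDN's own bot verification rather than string matching.

```python
# Lowercased User-Agent substrings for a few well-known AI crawlers.
# Illustrative and incomplete; maintain your own list in practice.
AI_CRAWLER_TOKENS = ("gptbot", "claudebot", "ccbot", "perplexitybot", "bytespider")

# Hypothetical pool names, standing in for whatever your stack calls them.
HUMAN_POOL = "primary-origin"
CRAWLER_POOL = "read-only-mirror"


def pick_origin_pool(user_agent):
    """Route declared AI crawlers to the mirror and everyone else to primary."""
    ua = (user_agent or "").lower()
    if any(token in ua for token in AI_CRAWLER_TOKENS):
        return CRAWLER_POOL
    return HUMAN_POOL


if __name__ == "__main__":
    samples = [
        "Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)",
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36",
    ]
    for ua in samples:
        print(pick_origin_pool(ua), "<-", ua)
```

The point of the split is that crawler misses land on capacity you sized for them, and the cache in front of your human pool stays warm for the pages people actually read.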

Third, if your site is content-heavy and you are one of the publishers getting hit, take a hard look at the economics of pay-per-crawl. The default reaction is "we would rather not charge bots," but the honest math is that if AI bots are tripling your marginal serving cost per piece of content, you are already charging someone. It is just yourself.

The twenty-year assumption that just broke

I think the biggest shift the paper surfaces is not the cache miss rate. It is that the entire CDN industry was designed around an assumption (content popularity is Pareto-distributed) that AI crawlers completely invalidate. Long-tail is the new head. That is a twenty-year architectural assumption that quietly became wrong sometime in the last eighteen months, and most of us are only now finding out.

From what I have seen, the publishers who notice this early will quietly migrate to tiered architecture, segmenting bot and human traffic at the edge. The ones who do not will keep getting bigger infrastructure bills, shrug, and blame it on "cloud costs going up." And to be fair, cloud costs are going up, but not in the way most teams think.

I do not know exactly what the right pricing answer looks like yet. I would be lying if I said I did. But the 17.3 to 32.2 number is the one I will be pointing at the next time someone tells me AI crawlers are "just bots."

Notice Me Senpai Editorial