Google AI Overviews Are 44% More Likely to Trash Your Brand Than ChatGPT

Most marketing teams spend months optimizing their Google rankings. Fair enough. But I do not think most of them have ever actually asked ChatGPT or Perplexity what those systems think about their brand. And the answer, in a lot of cases, is going to be uncomfortable.

A BrightEdge study published in Fortune analyzed hundreds of millions of prompts across apparel, electronics, and education. The finding: Google AI Overviews surface negative brand information at a 2.3% rate versus 1.6% for ChatGPT. That is a 0.7-point difference, or a 44% relative gap. Sounds small until you scale it. At a million queries, that is roughly 23,000 negative responses from AI Overviews alone, against about 16,000 from ChatGPT. And unlike a bad Yelp review sitting on page three, these show up first, before the user clicks anything.
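For anyone who wants to check the arithmetic or plug in their own query volume, here is a minimal sketch. The two rates are the ones quoted from the BrightEdge study; the function names and the one-million volume are only illustrative.

```python
# Negative-mention rates as quoted from the BrightEdge study above.
AI_OVERVIEWS_NEGATIVE_RATE = 0.023
CHATGPT_NEGATIVE_RATE = 0.016


def relative_gap(rate_a: float, rate_b: float) -> float:
    """How much larger rate_a is than rate_b, as a fraction of rate_b."""
    return (rate_a - rate_b) / rate_b


def expected_negative(rate: float, queries: int) -> int:
    """Expected number of negative responses at a given query volume."""
    return round(rate * queries)


gap = relative_gap(AI_OVERVIEWS_NEGATIVE_RATE, CHATGPT_NEGATIVE_RATE)
print(f"Relative gap: {gap:.0%}")

queries = 1_000_000  # illustrative volume; swap in your own
print(f"AI Overviews: {expected_negative(AI_OVERVIEWS_NEGATIVE_RATE, queries):,}")
print(f"ChatGPT: {expected_negative(CHATGPT_NEGATIVE_RATE, queries):,}")
```

The 44% figure is a relative difference between the two rates, not a difference in percentage points, which is why the absolute numbers look small next to the headline.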

BrightEdge CEO Jim Yu put it plainly: instead of negative content living on back pages where nobody scrolls, AI is pulling it directly into the front page where consumers see it first. Google disputed the methodology, claiming the actual difference is closer to 1%. Even if Google is right, that is still thousands of queries per million where AI is actively editorializing against your brand. (When ChatGPT gets asked to directly compare two products, it actually becomes more negative than AI Overviews, which is its own kind of problem.)

The narration problem nobody budgeted for

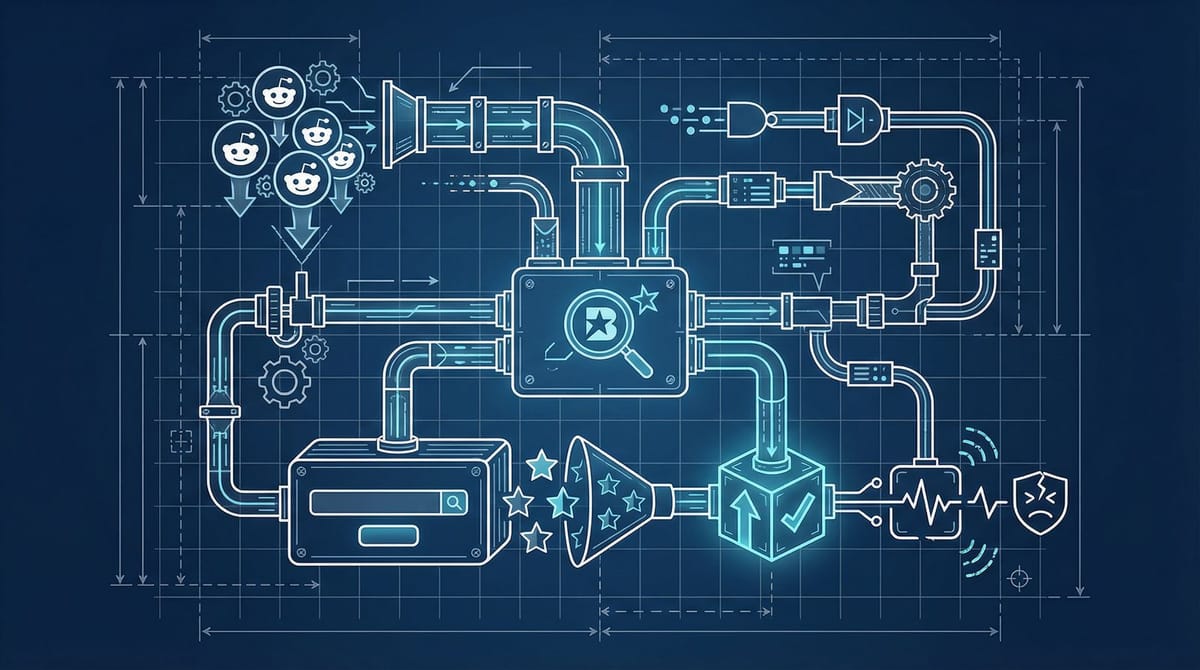

There has been a lot of coverage this year about ranking well on Google and still being invisible to ChatGPT. That visibility gap is real and we have covered it extensively. But what Anthony Will at Search Engine Land described this week is a different, arguably worse problem: AI search systems do not just overlook your brand. In a lot of cases, they actively rewrite your story.

The mechanism is something Will calls "AI narrative formation." AI systems pool sources from Reddit, YouTube, review platforms, and social media rather than sticking to authoritative sources. They weight high-volume content regardless of quality. Then they compress whatever they found into a single confident summary. Because people screenshot and share AI answers, those summaries get reinforced in a feedback loop that most brands never even see.

Think of it like a game of telephone where you never got invited to play. Someone posts a complaint on Reddit in 2018. An AI reads it in 2026, compresses it alongside three other signals, and serves a confident summary to a potential customer who will never see your side of the story.

The most repeated claim wins. Not the most accurate one.

A 4.2-star brand with a reputation problem it did not know about

Will described a finance company with a solid 4.2 Trustpilot rating. Under traditional search, the brand looked great. But Google's AI Overview pulled in a decade-old negative Reddit thread about customer service issues that had long since been resolved, then combined it with the absence of recent structured content. The result was a semi-negative AI summary for anyone who asked about the company. The actual reputation was fine. The AI-generated version was not.

This is where it gets uncomfortable for marketers who have been focused on rankings. In traditional search, you could push negative results down with better content. There was a playbook everyone knew. AI search does not work that way.

There is no page two in AI search.

The AI generates one answer, and for a lot of users, that answer is the brand. According to research compiled by Am I Cited, companies have reported up to 10% traffic losses when AI systems misrepresent their products. With roughly 60% of searches now ending without a click when AI summaries appear, users are accepting these synthesized answers as fact and moving on. No cross-checking. No clicking through to verify.

The 15-minute audit nobody is doing

If I were on your team, here is what I would do today.

Open ChatGPT, Perplexity, and Google (with AI Overviews enabled). Ask each one: "What is [your brand]?" Then: "Is [your brand] good?" Then: "What are the problems with [your brand]?" Document every answer.

You are looking for three things:

Factual errors. Wrong founding date, incorrect product descriptions, outdated pricing, fabricated features. AI systems hallucinate these with full confidence. A lawyer was disciplined for citing hallucinated legal cases from ChatGPT in court filings. Your brand description is getting the same treatment, just without the courtroom accountability.

Narrative gaps. Places where the AI has no positive content to pull from, so it defaults to whatever negative signal it can find. This is the decade-old Reddit thread problem. If your most visible unstructured content is a pile of complaints from years ago, that is what the AI will surface.

Competitor contamination. AI systems sometimes blend brands together, especially in crowded categories. ChatGPT may describe your product using your competitor's features or, worse, their complaints, and it happens more often than you would think. Bayer recently discovered its AI-generated content was accidentally advertising for competitors, and most brands have not even checked for it.
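If it helps to keep the audit organized, the three prompts and the three checks can be laid out as a worksheet. This is a rough sketch, not a tool: it only generates the rows to fill in by hand, it does not call any AI system, and the brand name "Acme Finance" and the file name are placeholders.

```python
import csv
from itertools import product

# Platforms, prompts, and checks taken from the audit described above.
PLATFORMS = ["ChatGPT", "Perplexity", "Google AI Overviews"]
QUESTION_TEMPLATES = [
    "What is {brand}?",
    "Is {brand} good?",
    "What are the problems with {brand}?",
]
CHECKS = ["factual_errors", "narrative_gaps", "competitor_contamination"]


def build_audit_sheet(brand: str) -> list[dict[str, str]]:
    """Return one blank row per (platform, prompt) pair."""
    rows = []
    for platform, template in product(PLATFORMS, QUESTION_TEMPLATES):
        row = {
            "platform": platform,
            "prompt": template.format(brand=brand),
            "answer": "",  # paste the AI's answer here
        }
        row.update({check: "" for check in CHECKS})  # notes per check
        rows.append(row)
    return rows


def write_audit_sheet(rows: list[dict[str, str]], path: str) -> None:
    """Save the worksheet as a CSV that opens in any spreadsheet app."""
    with open(path, "w", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=list(rows[0].keys()))
        writer.writeheader()
        writer.writerows(rows)


sheet = build_audit_sheet("Acme Finance")  # placeholder brand
write_audit_sheet(sheet, "ai_brand_audit.csv")  # placeholder file name
print(f"{len(sheet)} rows to review")
```

Three platforms times three prompts gives nine answers to read, which is where the 15-minute estimate comes from.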

Fixing the inputs, not the outputs

You cannot edit what an AI says about you. You can only change what it reads.

The practical fix has two parts. First, create structured first-party content that AI systems can reliably parse: FAQ pages with schema markup, accurate Wikipedia and Wikidata entries, and recent press coverage on authoritative sites. Michael Brito has written about this as treating AI search like a brand reputation channel, not just a traffic channel. I think that framing is right, even if most organizations are not structured to act on it yet.
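For the FAQ piece, "schema markup" usually means schema.org's FAQPage type embedded in the page as JSON-LD. Here is a minimal sketch that builds that JSON from question-and-answer pairs. The questions and answers are invented placeholders, and the function name is my own; whether any given AI system actually reads this markup is an assumption, not something the sources above confirm.

```python
import json


def faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder content; replace with your brand's real, current answers.
example_faqs = [
    ("How do I reach customer support?", "Support is available by phone and chat."),
    ("Are there any account fees?", "Fees are listed on the pricing page."),
]

markup = faq_jsonld(example_faqs)
# Embed the result in the page inside a JSON-LD script tag.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The useful part is less the markup itself than the discipline: every answer in it should be current, specific, and something you would be happy to see quoted back in an AI summary.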

Second, address the unstructured signals. That decade-old Reddit thread? Respond to it. Not defensively, just factually. AI systems weight recent engagement, so a 2026 response on a 2016 complaint changes the signal balance. Same approach for outdated review platform profiles. Update them. Add recent customer evidence. What you end up with is a slowly shifting input layer, and honestly, it takes a while before the AI models reflect the changes.

If you want to go deeper on monitoring, we broke down how to build an AI visibility tracking stack for under $100 a month. The same approach works for reputation monitoring: set up recurring prompts, track changes weekly, document the narrative drift. The research suggests early intervention reduces reputation damage by 70-80% compared to delayed responses. Most brands are not detecting these problems at all.
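To make "document the narrative drift" a bit more concrete, here is one rough way to flag answers that changed a lot between two weekly snapshots. It assumes you have already saved each week's answers as text; it does not fetch anything. The word-level comparison and the 0.3 threshold are arbitrary starting points of mine, not figures from any of the research cited here.

```python
from difflib import SequenceMatcher


def drift_score(previous: str, current: str) -> float:
    """Return 0.0 for identical wording, up to 1.0 for no shared wording."""
    old_words = previous.lower().split()
    new_words = current.lower().split()
    matcher = SequenceMatcher(None, old_words, new_words, autojunk=False)
    return 1.0 - matcher.ratio()


def flag_drift(
    snapshots: dict[str, tuple[str, str]], threshold: float = 0.3
) -> list[str]:
    """Return the prompts whose answers changed more than the threshold.

    snapshots maps each prompt to (last week's answer, this week's answer).
    """
    return [
        prompt
        for prompt, (old, new) in snapshots.items()
        if drift_score(old, new) > threshold
    ]


# Invented example answers for a placeholder brand.
weekly = {
    "What is Acme Finance?": (
        "Acme Finance is an online lender.",
        "Acme Finance is an online lender.",
    ),
    "Is Acme Finance good?": (
        "Reviews are generally positive.",
        "Some users report slow support and unresolved complaints.",
    ),
}

for prompt in flag_drift(weekly):
    print(f"Review this one by hand: {prompt}")
```

A score like this only tells you that the wording moved, not whether it moved in a good or bad direction, so anything it flags still needs a human read.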

Your Trustpilot score does not matter if the AI never reads it

Traditional reputation management assumed humans would see your full profile, read multiple reviews, weigh the balance. AI does not do any of that. It grabs a handful of signals, compresses them into a sentence, and moves on. A 4.2-star Trustpilot rating is meaningless if the AI is instead pulling from a Reddit thread your team forgot existed.

I think most brands are somewhere between six and twelve months behind on this. The companies already doing AI reputation audits tend to be in fintech and healthcare, where being misrepresented has immediate and expensive consequences. Everyone else seems to be treating AI search like a future problem.

Honestly, I do not think it is a future problem anymore. By BrightEdge's numbers, roughly 23,000 negative AI-generated brand responses are happening per million queries right now, on just one platform. The 15-minute audit costs nothing. The gap between what AI says about your brand and what is actually true might cost quite a lot more.