Google's TurboQuant Just Took the 30-Result Cap Off RankBrain

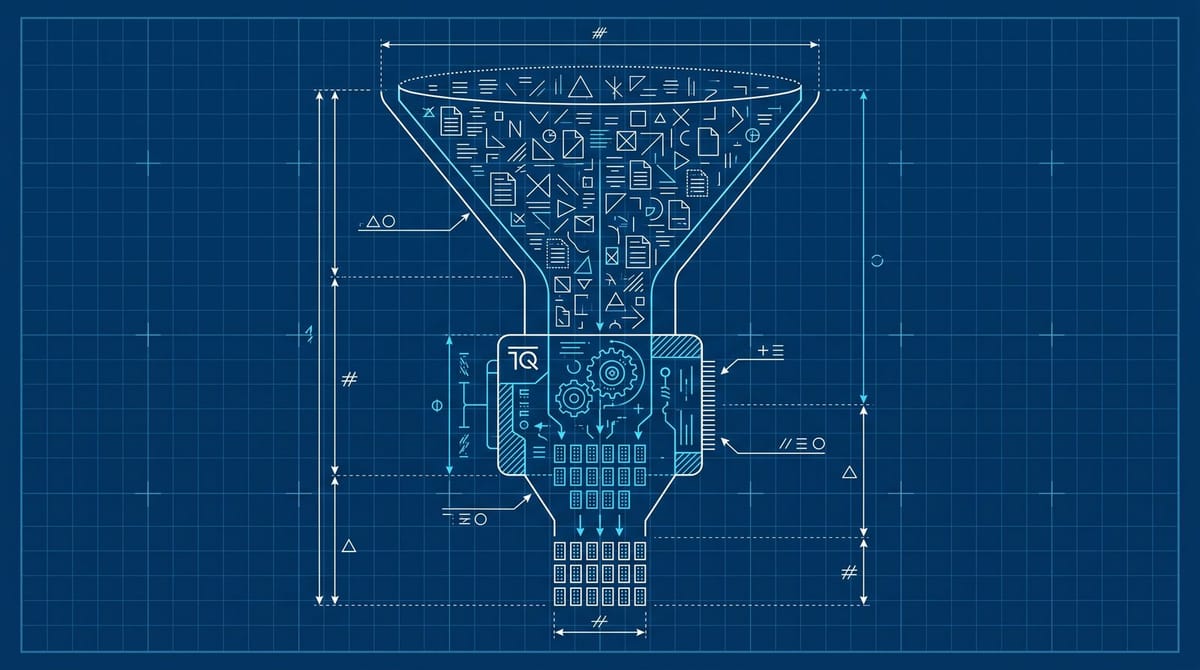

Google Research published TurboQuant on March 24, 2026, a quantization algorithm that compresses vector embeddings about 4x with near-zero indexing time. That cost cut matters because Pandu Nayak testified under oath that RankBrain runs only on the final 20 to 30 candidate pages, since applying it more broadly is too expensive. The ceiling on Google's ranking candidate set is now a budget choice, not a hardware constraint.

The 20-30 wall was a cost decision, not a quality one

The single most important fact about how Google ranks is one most SEOs still haven't internalized. RankBrain, the deep-learning component the industry has spent a decade building theory around, only ever sees the last 20 to 30 candidates. In DOJ testimony, counsel asked Pandu Nayak directly whether "RankBrain is too expensive to run on hundreds or thousands of results," and Nayak answered "that is correct." Counsel then asked if that's "one of the reasons why you just wait until you're down to the final 20 or 30 before you run RankBrain," and Nayak confirmed it again.

That reframes the entire ranking stack. Cheap signals like BM25 and other classical retrievers pick the candidate set. The fancier neural ranker only ever evaluates pages that already cleared a much simpler filter. If your page never makes the candidate set, none of your structured data, helpful-content tuning, or AEO-shaped headers gets a chance to matter. The loss happens before Brain, not at Brain.
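The two-stage shape described above is easy to see in a toy model. This is a minimal sketch, not Google's pipeline: the `two_stage_rank` function, the `links` and `relevance` fields, and every score are hypothetical stand-ins for a cheap first-stage signal and an expensive second-stage model.

```python
from typing import Callable


def two_stage_rank(
    pages: dict[str, dict[str, float]],
    cheap_score: Callable[[dict[str, float]], float],
    expensive_score: Callable[[dict[str, float]], float],
    k: int = 30,
) -> list[str]:
    """Rank pages with a cheap filter first, then an expensive reranker."""
    # Stage 1: score every page with the cheap signal and keep the top k.
    candidates = sorted(
        pages, key=lambda url: cheap_score(pages[url]), reverse=True
    )[:k]
    # Stage 2: only those k survivors are ever seen by the expensive scorer.
    return sorted(
        candidates, key=lambda url: expensive_score(pages[url]), reverse=True
    )


# Toy data (invented): "niche" is the most relevant page but has the
# weakest classical signal.
pages = {
    "brand": {"links": 0.9, "relevance": 0.5},
    "aggregator": {"links": 0.7, "relevance": 0.4},
    "niche": {"links": 0.2, "relevance": 0.95},
}

narrow = two_stage_rank(pages, lambda p: p["links"], lambda p: p["relevance"], k=2)
wide = two_stage_rank(pages, lambda p: p["links"], lambda p: p["relevance"], k=3)

print(narrow)  # "niche" is missing: it was cut before the reranker ran
print(wide)    # "niche" ranks first once the pool is wide enough to include it
```

Nothing about the second-stage scorer changes between the two calls. The only difference is `k`, which is the whole argument in miniature.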

This is also why Sundar Pichai keeps describing Google as supply-constrained on memory in earnings calls. There's no way, he told analysts, that the leading memory companies dramatically improve their capacity. Google can either pay the linear cost of more neural evaluations or compress harder. The company chose to compress harder.

TurboQuant is the patch that closes the math gap

The Google Research write-up for TurboQuant came out in March, paired with an ICLR 2026 paper called "Online Vector Quantization with Near-Optimal Distortion Rate." The headline numbers are that it shrinks high-dimensional embeddings to roughly 3 to 4 bits per element while staying within about a factor of 2.7 of the theoretical lower bound for any algorithm at that bit budget. For nearest-neighbor retrieval, the practical win is that it beats Product Quantization on recall while collapsing the indexing time, because the codebooks are precomputed and data-independent.
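To make the bit budget concrete, here is a minimal sketch of plain uniform scalar quantization at 4 bits per element. It is not the TurboQuant algorithm, which is considerably more sophisticated. It shares only two properties with the description above: the bit budget, and a grid that is fixed in advance rather than learned from the data. The 768-dimension vector, the [-1, 1] value range, and the 16-bit baseline are all assumptions chosen for illustration.

```python
import random

BITS = 4
LEVELS = 2 ** BITS  # 16 possible codes per element


def quantize(vec, lo=-1.0, hi=1.0):
    """Map each float to a 4-bit code on a fixed, data-independent grid."""
    step = (hi - lo) / (LEVELS - 1)
    codes = [min(LEVELS - 1, max(0, round((x - lo) / step))) for x in vec]
    if len(codes) % 2:
        codes.append(0)  # pad so the codes pack evenly into bytes
    # Pack two 4-bit codes into each byte.
    return bytes((codes[i] << 4) | codes[i + 1] for i in range(0, len(codes), 2))


def dequantize(packed, dim, lo=-1.0, hi=1.0):
    """Unpack the 4-bit codes and map them back to grid values."""
    step = (hi - lo) / (LEVELS - 1)
    codes = []
    for byte in packed:
        codes.extend((byte >> 4, byte & 0x0F))
    return [lo + c * step for c in codes[:dim]]


random.seed(0)
dim = 768  # hypothetical embedding size
vec = [random.uniform(-1.0, 1.0) for _ in range(dim)]

packed = quantize(vec)
restored = dequantize(packed, dim)

fp16_bytes = dim * 2  # assumed baseline: 16 bits per element
ratio = fp16_bytes / len(packed)
max_err = max(abs(a - b) for a, b in zip(vec, restored))

print(f"{fp16_bytes} bytes -> {len(packed)} bytes ({ratio:.1f}x smaller)")
print(f"worst per-element error: {max_err:.4f}")
```

Against a 16-bit baseline, 4 bits per element is where the "about 4x" figure comes from. The interesting part of the research is how little accuracy is lost at that budget, which this naive grid does not attempt to match.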

Translation for marketers: the cost of running a smarter candidate-selection step just dropped meaningfully. The same memory budget that previously allowed RankBrain on 30 pages can now support a neural reranker on a much wider pool, or a richer first-stage retriever that pulls a broader set into contention. Search Engine Land's coverage of the algorithm in March made the same point about vector search infrastructure: indexing cost has been the brake on neural retrieval at web scale, and that brake just got smaller.

I think the realistic read here isn't that Google suddenly runs RankBrain on a million pages. It's that the bar for which pages are even allowed to compete drops. The top 100. Maybe the top 200. That's still not the long tail. It's still meaningfully different from 30.

Why this widens the playing field for smaller sites

The dominant theory in SEO is that authority compounds in a winner-take-all way. That theory is partly an artifact of the 20-30 candidate cap. If only thirty pages get evaluated by the part of the system that understands meaning, the pages that already cleared the cheap-signal filter (which heavily favors links, age, and brand) eat everything. Smaller sites get filtered out before the model that might have rewarded them ever runs.

Widen the candidate pool from 30 to a couple hundred and the dynamics shift. Pages with strong topical relevance but weaker classical link signals get a shot at the neural ranker. Pages that match the query intent precisely beat pages that match it loosely with more authority. From what I've seen tracked across a few independent SEO audits, the queries already loaded with mid-funnel intent, like comparison searches and product-spec searches, are where a shift like this would land first. The head terms still belong to brands.

It also explains, in retrospect, why Ahrefs's 1,885-page schema study found schema doesn't meaningfully lift AI citations on its own. AI Overviews and AI search are doing a wider retrieval already. Schema as a tiebreaker on a narrow candidate set is one thing. Schema as a signal inside a much broader pool is closer to noise.

The signal that tells you it's happening

Google won't announce a change to how many candidates feed RankBrain. The first read will come from your server logs, because the retrieval surface for AI search is already wider than classical search. Look for these user agents hitting pages you don't rank for organically:

- OAI-SearchBot (and ChatGPT-User 1.0 / 2.0 / 3.0)

- Claude-SearchBot

- PerplexityBot

- Applebot-Extended

According to Search Engine Journal's reference list, these are the declared retrieval user agents you can verify against published IP ranges. The point isn't volume. It's coverage. AI retrieval pulls a broader set of pages into the candidate pool than classical search ranks, and the gap between which pages AI engines fetch and which pages rank in Google's top 30 is your early read on what a wider Google candidate set might look like in practice.
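User agent strings are trivial to spoof, so the IP check is what makes a hit trustworthy. A minimal sketch using Python's standard `ipaddress` module follows. The `PUBLISHED_RANGES` table and the `ExampleBot` name are placeholders built from reserved documentation ranges, not any vendor's real list; swap in the ranges each vendor actually publishes.

```python
import ipaddress

# Placeholder data: reserved documentation ranges, NOT real crawler ranges.
PUBLISHED_RANGES = {
    "ExampleBot": ["192.0.2.0/24", "2001:db8::/32"],
}


def is_verified(ip: str, cidr_ranges: list[str]) -> bool:
    """Return True if the request IP falls inside any published CIDR range."""
    addr = ipaddress.ip_address(ip)
    # An IPv4 address is never "in" an IPv6 network (and vice versa),
    # so mixed lists are safe to check in one pass.
    return any(addr in ipaddress.ip_network(cidr) for cidr in cidr_ranges)


ranges = PUBLISHED_RANGES["ExampleBot"]
print(is_verified("192.0.2.44", ranges))    # inside the first range
print(is_verified("198.51.100.7", ranges))  # outside every range
print(is_verified("2001:db8::1", ranges))   # inside the IPv6 range
```

Run it over the client IP of each log line that claims a bot user agent and drop whatever fails before you count coverage.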

The check worth running this week: grep your access logs for the four user agent strings above, then cross-reference the URLs they hit against your Search Console "pages with impressions" export over the same window. Pages that AI engines retrieve but Google doesn't rank are your candidate-set-but-not-final-set list. That's the bucket that gets bigger if the cap loosens.
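The check above can be sketched in a few lines of Python. Several things here are assumptions to adjust for your own setup: the access log is in Combined Log Format, the Search Console export is a CSV whose URL column is named `Top pages`, and `BOT_TOKENS` holds three of the user agent tokens listed above. The sample log lines and CSV are invented and the user agent strings are simplified.

```python
import csv
import io
import re
from urllib.parse import urlsplit

# Extend this tuple with any other tokens you want to track.
BOT_TOKENS = ("OAI-SearchBot", "Claude-SearchBot", "PerplexityBot")

# Combined Log Format: ... "GET /path HTTP/1.1" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def bot_paths(log_lines):
    """Collect URL paths requested by any of the tracked bots."""
    paths = set()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if match and any(tok in match.group("ua") for tok in BOT_TOKENS):
            # Drop the query string so paths compare cleanly.
            paths.add(urlsplit(match.group("path")).path)
    return paths


def impression_paths(csv_file, url_column="Top pages"):
    """Collect URL paths from a pages-with-impressions CSV export."""
    return {urlsplit(row[url_column]).path for row in csv.DictReader(csv_file)}


def retrieved_not_ranked(log_lines, csv_file, url_column="Top pages"):
    """Paths AI bots fetched that have no Search Console impressions."""
    return sorted(bot_paths(log_lines) - impression_paths(csv_file, url_column))


# Invented sample data for illustration only.
sample_log = [
    '203.0.113.5 - - [01/Apr/2026:10:00:00 +0000] "GET /guide?ref=x HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '203.0.113.6 - - [01/Apr/2026:10:01:00 +0000] "GET /pricing HTTP/1.1" 200 256 "-" "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)"',
    '203.0.113.7 - - [01/Apr/2026:10:02:00 +0000] "GET /about HTTP/1.1" 200 128 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
sample_csv = io.StringIO(
    "Top pages,Clicks,Impressions\nhttps://example.com/pricing,3,40\n"
)

diff = retrieved_not_ranked(sample_log, sample_csv)
print(diff)
```

For real data, pass an open log file and an open CSV file instead of the samples. Comparing on path alone assumes a single hostname; if your logs cover several hosts, include the host in the key.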

Where to spend the next hour

Pull a week of access logs. Filter for OAI-SearchBot, Claude-SearchBot, and PerplexityBot. Export the URL list. Then export Search Console pages-with-impressions for the same week. The diff is your candidate-but-not-ranking set, and that's the bucket to optimize for retrieval-friendliness rather than ranking-friendliness. Clean primary entity inside the first 100 words. One specific claim per paragraph. No rhetorical setup at the top. The retrieval layer is reading for clarity, not for narrative.

I'd hedge a little on timing. TurboQuant being shippable inside Google's stack is different from it being deployed in production search. Research papers don't always land in core ranking on a fast timeline, and the antitrust pressure cuts both ways here, since widening the playing field happens to be exactly the remedy the DOJ has been pushing on Google for two years. My read is that we see soft signals before any algorithm announcement: a slow drift in who shows up for mid-funnel queries, a few unexplained "we suddenly started ranking again" posts on r/SEO, the usual fingerprints of an underlying retrieval shift Google won't confirm.

The job for the next quarter isn't to chase a future ranking factor. It's to know which of your pages already make AI search's candidate set, and to make sure those same pages would survive a slightly less classical first-stage filter on Google too. Anyone who's done that quietly has the option to ride the change. Everyone else finds out from a Search Engine Roundtable post three months after it ships.

Notice Me Senpai Editorial