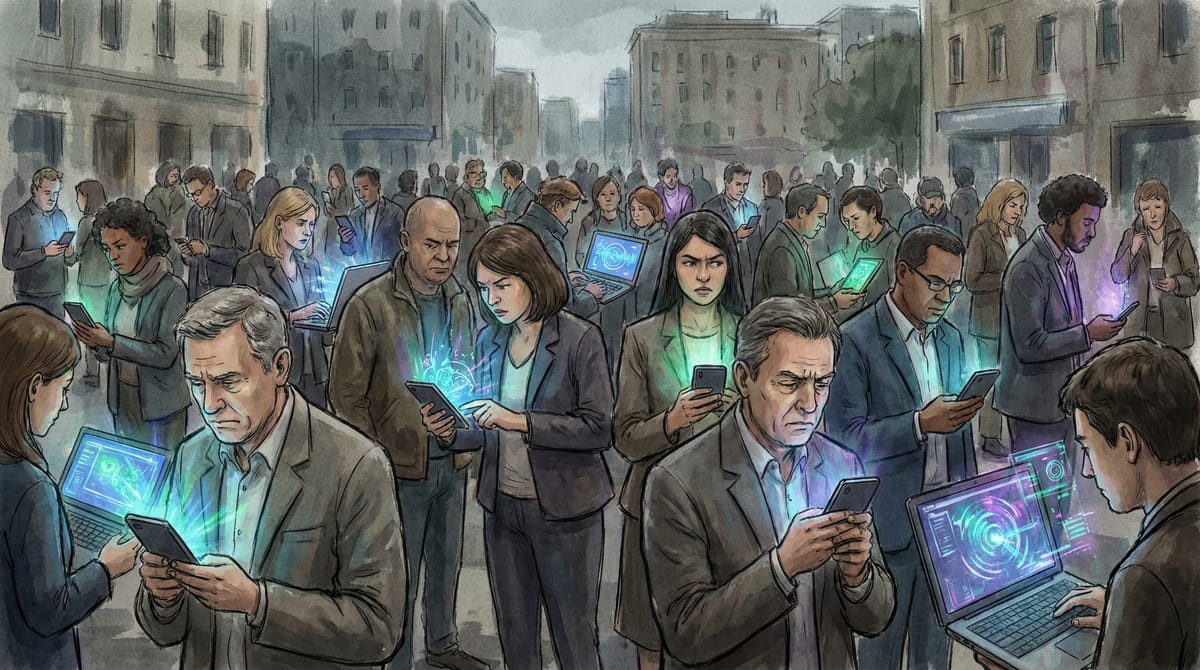

More People Are Using AI. Fewer People Trust It. Marketers Should Pay Attention to the Gap.

A Quinnipiac University poll published this week found that 73% of Americans have now used an AI tool, up from 67% a year ago. At the same time, 76% say they trust AI-generated results rarely or only sometimes. Only 21% trust them most of the time.

The fact that those two numbers point in opposite directions is the part worth sitting with. People are using AI more, and trusting it less. That's not early-adopter skepticism. That's a consumer base that has tried the product, formed an opinion, and decided it's unreliable. For marketers building strategies around AI-powered tools, recommendations, and content, this gap is a problem that gets bigger the longer you ignore it.

The trust numbers are worse than the headlines suggest

The Quinnipiac data is striking on its own, but it's consistent with a pattern across multiple surveys. Pew Research's March 2026 study found that half of US adults say increased AI use in daily life makes them more concerned than excited. Only 10% lean toward excitement. Most of the rest, 38%, land somewhere in the middle.

A separate consumer insights survey puts daily AI usage at 32%, with 54% using it as a research assistant. So people are depending on AI for information regularly while simultaneously telling pollsters they don't trust the information it gives them.

And it gets more specific in professional contexts. A 2026 survey of US engineers found that 86% use AI tools in their work, but only 6% trust the results without verification. Six percent. The Quinnipiac professor who led the poll called it "a contradiction between use and trust," and I think that misreads it. It's not a contradiction. It's people using a tool they know is unreliable because the speed advantage is worth the verification cost. For now.

Why this matters if you run marketing campaigns

If you're using AI-generated content in your marketing (and statistically, you probably are), your audience is increasingly primed to distrust it. Not because they've read think pieces about AI ethics, but because they've personally asked ChatGPT or Gemini a question and gotten a wrong answer. That firsthand experience is harder to undo than abstract skepticism.

This plays out in a few concrete ways.

AI-recommended products get less automatic trust. If your brand shows up in a ChatGPT shopping recommendation or a Gemini product comparison, the consumer seeing that recommendation is more likely to verify it independently than they were a year ago. The conversion path from AI recommendation to purchase is getting longer, not shorter. Building your strategy around AI discovery without accounting for the verification step will overstate the channel's value.

AI-generated ad copy is starting to pattern-match for consumers. I don't have hard data on this one, so take it as editorial opinion. But the linguistic patterns of AI-generated content (the consistent rhythm, the structured optimism, the specific way it handles transitions) are becoming recognizable to regular people who use these tools daily. If your audience uses ChatGPT every day, they're developing an instinct for what AI writing sounds like. Ad copy written by AI, or heavily assisted by it, may trigger that recognition in ways it wouldn't have a year ago.

Trust signals from humans matter more, not less. Practitioner testimonials, named case studies, specific results from identified companies. The stuff that's expensive and slow to produce. McKinsey's 2026 AI trust report frames the trust gap as a phase in adoption, and they might be right long-term. But right now, in 2026, the data says consumers use AI and don't trust it. That means human-verified, human-attributed content is a competitive advantage in a way it wasn't two years ago.

The uncomfortable question for AI-heavy marketing stacks

If your content strategy relies significantly on AI generation, and your audience increasingly distrusts AI-generated information, at what point does the efficiency gain get offset by the trust cost? I genuinely don't know the answer. It probably depends on your category, your audience demographics (Pew found women are twice as likely as men to be concerned about AI), and how visible the AI involvement is in your output.

But the trend line is clear enough to act on. The gap between AI usage and AI trust is widening, not narrowing. Consumers are getting more experienced with these tools, not less skeptical. Planning your 2026 marketing strategy around the assumption that trust will catch up to adoption is a bet. And right now the polling data doesn't support it.