Google Wants Publishers to Optimize for AI Agents That Send Under 1% of Traffic

Addy Osmani, Google's Director of Engineering for Cloud AI, published a six-layer framework called "Agentic Engine Optimization" (AEO) on April 15, 2026, advising publishers to restructure content for AI agent consumption. The framework covers token-optimized pages, Markdown formatting, and machine-readable discovery files. According to EMARKETER data, major publishers cited by AI platforms currently receive under 1% of their referral traffic from those systems.

Osmani's Six-Layer Stack, Translated

The AEO framework boils down to one core idea: make your content cheaper for AI to read.

Layer 1 is a robots.txt audit to check whether you're accidentally blocking AI crawlers. Layer 2 is llms.txt, a Markdown index at your domain root that describes your pages and their token counts. Layer 3 is skill.md, a capability file declaring what your API or content does. Layer 4 is serving clean Markdown alongside HTML to reduce parsing noise. Layer 5 is surfacing token counts as page metadata. Layer 6 is a "Copy for AI" button that gives agents clean clipboard access to your content.
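To make Layer 2 concrete, here's a minimal Python sketch that renders an llms.txt-style index. It loosely follows the format proposed at llmstxt.org (an H1 title, a blockquote summary, then H2 sections of Markdown links). The `Page` fields, the `build_llms_txt` helper, and putting token counts in each link's note are illustrative assumptions; the counts themselves should come from whatever tokenizer you trust.

```python
from dataclasses import dataclass


@dataclass
class Page:
    title: str
    url: str
    section: str
    tokens: int  # hypothetical field: count produced by your own tokenizer


def build_llms_txt(site_name: str, summary: str, pages: list[Page]) -> str:
    """Render a minimal llms.txt-style Markdown index, grouped by section."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    by_section: dict[str, list[Page]] = {}
    for page in pages:
        # dict preserves insertion order, so sections appear in first-seen order
        by_section.setdefault(page.section, []).append(page)
    for section, members in by_section.items():
        lines += [f"## {section}", ""]
        lines += [f"- [{p.title}]({p.url}): ~{p.tokens:,} tokens" for p in members]
        lines.append("")
    return "\n".join(lines)
```

Called with a "Docs" section holding a 12,000-token quick start, it emits a line like `- [Quick start](https://example.com/docs/quickstart.md): ~12,000 tokens`.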

That's a lot of infrastructure for a traffic source that, right now, amounts to a rounding error in most analytics dashboards.

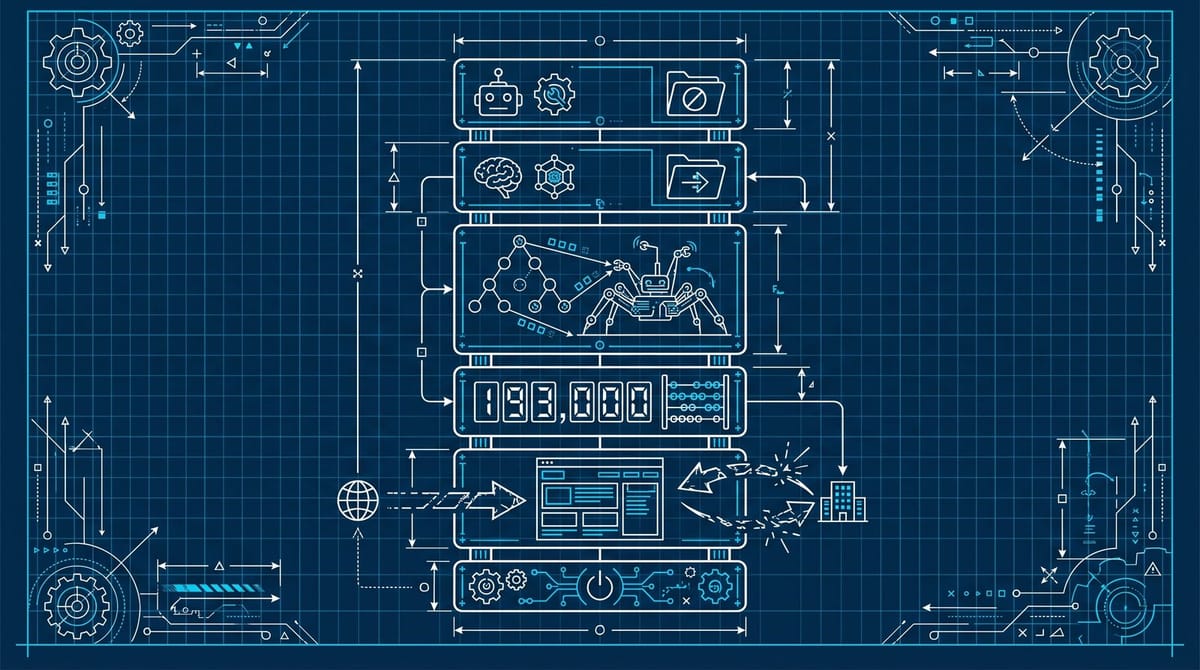

Osmani does identify a real problem, though. AI agents collapse multi-step browsing into single requests. They don't scroll, don't click, don't trigger your scroll-depth analytics. His example: Cisco's Secure Firewall documentation runs 193,217 tokens (about 718,000 characters), which exceeds most agents' context windows entirely. Agents hitting pages like that are truncating content, skipping sections, or hallucinating the parts they can't fit. His recommended page targets are practical: quick-start pages under 15,000 tokens, API references under 25,000, conceptual guides under 20,000.

That part is useful whether or not you care about the broader AEO pitch.
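If you want to check your own pages against those targets, a rough budget check is enough to find the worst offenders. This is a minimal Python sketch: the page-type keys and helper names are hypothetical, and the 4-characters-per-token default is a common rule of thumb, not a real tokenizer.

```python
# Osmani's recommended per-page token targets, as reported above.
BUDGETS = {
    "quick_start": 15_000,
    "api_reference": 25_000,
    "conceptual_guide": 20_000,
}


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Approximate a token count from character length (heuristic only)."""
    return round(len(text) / chars_per_token)


def check_budget(page_type: str, text: str, chars_per_token: float = 4.0) -> tuple[int, int, bool]:
    """Return (estimated_tokens, budget, within_budget) for one page."""
    budget = BUDGETS[page_type]
    tokens = estimate_tokens(text, chars_per_token)
    return tokens, budget, tokens <= budget
```

A 718,000-character page like the Cisco example comes back as `(179500, 25000, False)` when checked as an API reference. The Cisco figures work out to roughly 3.7 characters per token, so the 4.0 default undercounts a little; pass `chars_per_token=3.7` or swap in a real tokenizer before acting on borderline results.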

Google Search Doesn't Use Any of This

This is where things get uncomfortable. Google Search doesn't use llms.txt files. John Mueller, Google's own Search Advocate, has been vocally skeptical of the entire concept. On Bluesky, Mueller called creating separate Markdown pages for LLMs "such a stupid idea." His reasoning: LLMs have trained on standard HTML since the beginning. They parse it fine. If format mattered, AI companies "would be very vocal about that."

Mueller went further, comparing llms.txt to the keywords meta tag, a relic search engines abandoned years ago because it was too easy to game.

So you have two Google employees publicly disagreeing. Osmani, from Cloud AI, says build a six-layer optimization stack for AI agents. Mueller, from Search, says stop making special pages for machines. The distinction matters: Osmani is thinking about AI coding agents that consume developer documentation (Claude Code, Cursor, Windsurf). Mueller is thinking about Google Search and whether this helps publishers rank. They're addressing different systems, but the SEO community is hearing "Google says do AEO" without catching the asterisk.

I think that's the single biggest risk here. Someone reads the Search Engine Land headline, sees "Google AI Director outlines new content playbook," and starts building Markdown mirror sites thinking it'll help their Google rankings. It won't. Osmani himself notes this distinction in his post, but it's easy to miss when the headline travels without the caveat.

The Traffic Problem Nobody Wants to Quantify

Even if you're optimizing specifically for non-Google AI platforms, the math is rough. EMARKETER data shows major publishers like Reuters and The Guardian receive less than 1% of their referral traffic from AI platforms despite being frequently cited. Google still processes 417 billion searches per month versus ChatGPT's 72 billion messages, nearly six times the volume. And the referral model is fundamentally different: Google had an incentive to send you traffic. AI platforms are designed to synthesize answers, not distribute clicks.

There's a more concerning number in the data: 40 to 60% of cited sources change month-to-month across Google AI Mode and ChatGPT. So even if you optimize and get cited, citations are volatile. You're not building a durable ranking the way you would on page one of Google.
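The data doesn't spell out exactly how "change" is measured, so if you want to track citation churn for your own site, here's one assumed definition: the share of last month's cited sources that weren't cited again this month. A minimal Python sketch; `citation_churn` is a hypothetical helper, and the source sets would come from whatever citation monitoring you already run.

```python
def citation_churn(previous: set[str], current: set[str]) -> float:
    """Fraction of last month's cited sources that were not cited again this month."""
    if not previous:
        return 0.0  # nothing was cited last month, so nothing can churn
    return len(previous - current) / len(previous)
```

With five sources cited last month and only two of them cited again this month, churn is 3/5 = 0.6, the top of the reported range. Other definitions (for example, one that also counts newly cited sources) would give different numbers.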

Washington Post data complicates the picture slightly. Their AI platform visitors convert to subscriptions at 4 to 5 times the rate of traditional search visitors.

The traffic volume is tiny. The traffic quality might be unusually high. Those are two very different problems to optimize for.
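A rough worked example shows why the premium doesn't rescue the volume problem. Assume AI platforms send 1% of referral traffic (the upper bound of the EMARKETER figure), that those visitors convert at 5 times the search rate (the top of the Washington Post range), and, as a simplification, that all other referral traffic converts at the search rate. With $s$ as the AI share of traffic and $k$ as the conversion multiple:

$$
\text{AI share of conversions} = \frac{s\,k}{s\,k + (1 - s)} = \frac{0.01 \times 5}{0.01 \times 5 + 0.99} = \frac{0.05}{1.04} \approx 4.8\%
$$

Even at the generous end of both ranges, AI visitors would account for fewer than 1 in 20 subscriptions under these assumptions.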

That conversion premium is worth tracking. But it's a single data point from a major newspaper with an unusually strong subscription brand. For most content sites, the referral math still doesn't justify a six-layer infrastructure build. Forbes lost 37% of its traffic and pivoted to selling wine. The pressure to find new traffic sources is real. I just don't see evidence that AEO is the source to chase, at least not yet.

Two Things Worth Stealing from the AEO Stack

From what I've seen, there are maybe two things in Osmani's framework worth implementing today.

First, the token budgeting. Not because AI agents specifically need it, but because shorter, structured pages are better for everyone. If your documentation pages run 193,000 tokens, humans aren't reading all of that either. Restructuring long pages into focused, outcome-led sections is just good content architecture. Osmani's recommended limits (15,000 tokens for quick starts, 25,000 for API references) work as reasonable readability targets with or without AI in the picture.
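Here's a minimal Python sketch of that restructuring step, assuming your docs are Markdown with `## ` section headings: split a long page at those headings, then greedily regroup consecutive sections into pages under a token budget. The helper names are hypothetical, the 4-characters-per-token estimate is a rough heuristic rather than a real tokenizer, and a `## ` line inside a fenced code sample would be mistaken for a heading.

```python
import re


def split_by_headings(markdown: str) -> list[str]:
    """Split a Markdown page into sections, each starting at a level-2 ('## ') heading."""
    # Zero-width lookahead split (Python 3.7+) keeps each heading attached to its body.
    parts = re.split(r"(?m)^(?=## )", markdown)
    return [part.strip() for part in parts if part.strip()]


def pack_sections(sections: list[str], budget_tokens: int, chars_per_token: float = 4.0) -> list[str]:
    """Greedily group consecutive sections into pages that stay under a token budget.

    A single section larger than the budget still becomes its own (oversized) page,
    which flags it for a manual rewrite instead of cutting it mid-thought.
    """
    pages: list[str] = []
    current: list[str] = []
    current_tokens = 0.0
    for section in sections:
        tokens = len(section) / chars_per_token  # rough heuristic, not a tokenizer
        if current and current_tokens + tokens > budget_tokens:
            pages.append("\n\n".join(current))
            current, current_tokens = [], 0.0
        current.append(section)
        current_tokens += tokens
    if current:
        pages.append("\n\n".join(current))
    return pages
```

Something like `pack_sections(split_by_headings(page_text), budget_tokens=15_000)` on a long quick-start page returns candidate sub-pages. Keeping whole sections together, rather than cutting at a fixed character count, is what preserves the outcome-led structure.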

Second, checking your robots.txt. A lot of publishers reflexively blocked AI crawlers in 2024 and 2025. If you've decided you want AI citations, make sure you haven't shut the door on the crawlers you're trying to attract. That takes five minutes.
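The five-minute check can be scripted with Python's standard-library `urllib.robotparser`. This is a minimal sketch: the `AI_CRAWLERS` list is an assumed, non-exhaustive sample of user-agent tokens AI vendors have published, so confirm the names against each vendor's documentation. The stdlib parser also uses simple prefix matching on paths, which may differ from how a particular crawler interprets wildcards.

```python
from urllib.robotparser import RobotFileParser

# Assumed sample of AI crawler user-agent tokens; not exhaustive, verify with each vendor.
AI_CRAWLERS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot", "Google-Extended", "CCBot"]


def blocked_ai_crawlers(robots_txt: str, url: str) -> list[str]:
    """Return the AI crawlers that this robots.txt forbids from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse from a string instead of fetching
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, url)]
```

In practice you'd paste in your live robots.txt. A file with a `User-agent: GPTBot` / `Disallow: /` group followed by a permissive `User-agent: *` group returns `["GPTBot"]`, which tells you exactly which door is shut.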

Everything else in the AEO stack feels premature: building llms.txt files, adding token-count metadata, shipping "Copy for AI" buttons, writing skill.md capability declarations. Osmani even released an audit tool called agentic-seo alongside the post, packaging all this as something you should scan for today. These are solutions looking for a problem that might arrive in 18 months, or might not arrive at all. This year, 31.3% of the US population is projected to use generative AI search. That's meaningful adoption. But user adoption doesn't automatically translate into publisher traffic, and right now the referral data says it doesn't.

The Bet You're Actually Making

If you build the full AEO stack today, you're betting that AI agent traffic follows the same curve mobile traffic did from 2010 to 2013: tiny at first, then suddenly the majority. Not a crazy bet. But mobile had a clear mechanism (people bought phones) and a clear referral path (they visited your site through a mobile browser). AI agents have a consumption mechanism without a referral mechanism. They read your content, synthesize it, and whether they send the user back to you depends entirely on the AI platform's design choices. You have zero control over that decision.

What Osmani is describing, stripped to its core, is supply-chain optimization. Publishers become efficient content suppliers for AI systems. Whether those systems send traffic back is a question nobody in the AEO conversation is answering with data.

I'd bet AI referral traffic won't cross 3% of total publisher traffic before Q1 2027. The send-back mechanism doesn't exist in most AI products yet.

My move today: fix page structure for readability (not for agents), unblock robots.txt if you've locked out AI crawlers, and set up referral tracking. Osmani helpfully lists the analytics source/medium values to watch: chatgpt.com/organic, claude.ai/referral, labs.perplexity.ai/referral. Track those for six months. If AI traffic starts showing up in a meaningful way, you'll have data to justify the infrastructure. Optimizing blind for a channel that sends almost nothing is how marketing teams burn entire quarters on work that was never going to pay off.
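If your analytics tool doesn't already bucket these sources, a small classifier keeps the six-month count honest. This is a minimal Python sketch, assuming you log each visit's landing URL and Referer header. `classify_ai_visit` and the hostname mapping are hypothetical, and whether a given platform sends a Referer or appends `utm_source` varies by product and can change over time.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse

# Hostnames drawn from the sources listed above; an assumed mapping you should extend.
AI_SOURCES = {
    "chatgpt.com": "ChatGPT",
    "claude.ai": "Claude",
    "perplexity.ai": "Perplexity",
}


def classify_ai_visit(landing_url: str, referrer: str = "") -> Optional[str]:
    """Return the AI platform behind a visit, or None if it doesn't look AI-referred."""
    # Check an explicit utm_source on the landing URL first, then the Referer hostname.
    candidates = parse_qs(urlparse(landing_url).query).get("utm_source", [])
    candidates.append(urlparse(referrer).hostname or "")
    for candidate in candidates:
        host = candidate.lower()
        for domain, platform in AI_SOURCES.items():
            # Match the domain itself or any subdomain (e.g. labs.perplexity.ai).
            if host == domain or host.endswith("." + domain):
                return platform
    return None
```

Counting the non-`None` results per month gives you the AI share of referrals to compare against the rest of your traffic.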

By Notice Me Senpai Editorial