Google's TurboQuant Could Let Search Read Hundreds of Pages Per Query Instead of 30

Google published a research paper last week introducing TurboQuant, a compression algorithm that makes vector search dramatically faster and cheaper. The coverage has focused on the technical achievement. The part that matters for anyone doing SEO is what happens when Google can semantically evaluate hundreds of documents per query instead of the 20-30 that current systems handle efficiently.

That shift, if it ships in production, would change which content actually gets surfaced. And the direction it pushes is not what most SEOs expect.

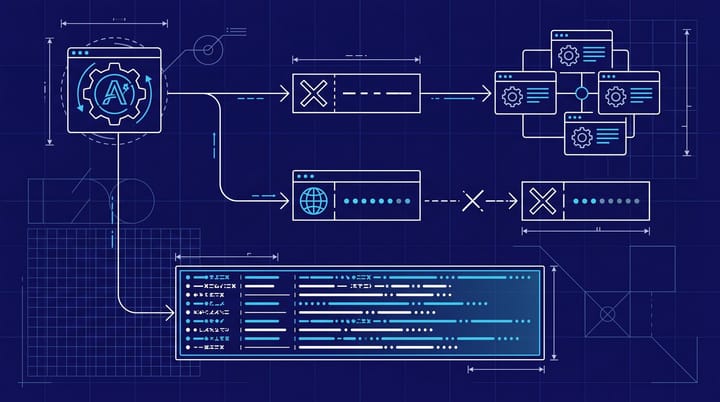

What TurboQuant actually does

Vector search is how modern search engines understand meaning. Instead of matching keywords, the system converts your query and every document into mathematical representations (vectors) and finds the closest matches. The problem is that comparing millions of these vectors is expensive, both in computing time and memory. So search engines take shortcuts: they narrow the candidate set first with cheaper signals, then run the expensive semantic comparison on a small subset.
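To make that shortcut concrete, here is a minimal sketch of a two-stage ranker. Everything in it is an illustrative assumption, not Google's pipeline: the `cheap_score` field stands in for signals like links and keyword matching, the two-dimensional vectors are toy values, and `two_stage_search` is a hypothetical helper name.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def two_stage_search(query_vec, docs, shortlist_size):
    """Shortlist by a cheap signal, then rerank the shortlist by meaning."""
    # Stage 1: keep only the documents with the best cheap score.
    shortlist = sorted(docs, key=lambda d: d["cheap_score"], reverse=True)
    shortlist = shortlist[:shortlist_size]
    # Stage 2: run the expensive semantic comparison on the survivors only.
    return sorted(shortlist, key=lambda d: cosine(query_vec, d["vec"]), reverse=True)

# Toy data (assumed values): the most relevant page has the weakest cheap score.
docs = [
    {"id": "well-linked-thin", "cheap_score": 0.9, "vec": [0.2, 0.9]},
    {"id": "mid-authority", "cheap_score": 0.6, "vec": [0.7, 0.6]},
    {"id": "best-answer-few-links", "cheap_score": 0.1, "vec": [1.0, 0.1]},
]
query = [1.0, 0.0]

narrow = two_stage_search(query, docs, shortlist_size=2)
wide = two_stage_search(query, docs, shortlist_size=3)
print(narrow[0]["id"], wide[0]["id"])
```

With a shortlist of two, the semantically closest page never reaches stage two. Widen the shortlist to three and it wins. That is the whole mechanism in miniature.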

TurboQuant attacks this bottleneck directly. The algorithm compresses vector representations through mathematical rotation (think of it as reorganizing cluttered data into compact, orderly structures) and adds a 1-bit error correction signal to preserve accuracy despite the compression. The result, according to the research paper, is "virtually zero" indexing time for building searchable vector databases.
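For readers who want to see the shape of the idea, here is a simplified stand-in rather than the paper's actual quantizer or estimator. It assumes a random rotation built with Gram-Schmidt, a plain uniform quantizer at a few bits per coordinate, and one extra sign bit per coordinate (plus a single per-vector scale) to nudge the coarse value toward the true one. The function names and the bit width are my own choices for illustration.

```python
import math
import random

def random_rotation(dim, seed=0):
    """Random orthonormal matrix via Gram-Schmidt on Gaussian rows."""
    rng = random.Random(seed)
    rows = []
    while len(rows) < dim:
        v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        for r in rows:
            proj = sum(a * b for a, b in zip(v, r))
            v = [a - proj * b for a, b in zip(v, r)]
        norm = math.sqrt(sum(a * a for a in v))
        if norm > 1e-9:
            rows.append([a / norm for a in v])
    return rows

def rotate(matrix, vec):
    """Apply the rotation; lengths and inner products are preserved."""
    return [sum(m * x for m, x in zip(row, vec)) for row in matrix]

def compress(vec, rotation, bits=2):
    """Rotate, quantize coarsely, and keep one sign bit of the leftover error."""
    rotated = rotate(rotation, vec)
    levels = 2 ** bits
    lo, hi = min(rotated), max(rotated)
    step = (hi - lo) / (levels - 1) or 1.0
    codes = [round((x - lo) / step) for x in rotated]
    residual = [x - (lo + c * step) for x, c in zip(rotated, codes)]
    signs = [1 if r >= 0 else -1 for r in residual]      # the 1-bit correction
    scale = sum(abs(r) for r in residual) / len(residual)  # one float per vector
    return {"codes": codes, "lo": lo, "step": step, "signs": signs, "scale": scale}

def decompress(packed, use_correction=True):
    """Rebuild the vector in rotated space, optionally applying the sign bits."""
    out = []
    for code, sign in zip(packed["codes"], packed["signs"]):
        value = packed["lo"] + code * packed["step"]
        if use_correction:
            value += sign * packed["scale"]
        out.append(value)
    return out

def squared_error(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

rot = random_rotation(8, seed=42)
vec = [0.9, -0.3, 0.1, 0.5, -0.7, 0.2, 0.0, 0.4]
packed = compress(vec, rot, bits=2)
target = rotate(rot, vec)
err_plain = squared_error(target, decompress(packed, use_correction=False))
err_fixed = squared_error(target, decompress(packed, use_correction=True))
print(err_plain, err_fixed)
```

In this toy version the sign bits can only reduce the reconstruction error, never increase it, which is the intuition behind spending one extra bit on correction. The paper's method is more sophisticated than this, so treat the sketch as a mental model only.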

That means Google could process and compare vastly more documents in the same time window. Not a marginal improvement. A structural one.

The 20-30 document bottleneck

During the DOJ antitrust trial, Google testified that its ranking system evaluated roughly 20-30 documents deeply per query using RankBrain. Everything else was filtered by faster, less sophisticated signals first. That is the practical reality of how search has worked: the algorithm identifies which 20-30 pages deserve deep semantic analysis, and the rest get assessed through simpler ranking factors like links, page authority, and keyword matching.

Marie Haynes, writing for Search Engine Journal, argues that if TurboQuant ships in production search, Google could run "massive-scale semantic search" across hundreds of results. Instead of pre-filtering down to 30 and then evaluating meaning, the system could assess semantic relevance across a much larger candidate pool from the start.

That is a genuinely different model of search ranking. And it has implications that cut in a specific direction.

Content depth gains ground, traditional signals lose some

If Google can afford to semantically evaluate 300 documents instead of 30, the filters it uses to narrow the initial candidate set become less important. Those filters include backlink profiles, domain authority, and exact keyword matching. They have been useful precisely because something had to decide which 30 pages deserved the expensive semantic analysis. If that constraint loosens, the signals that analysis produces (semantic relevance, topical depth, answer quality) become the dominant ranking factors earlier in the process.

I am not saying links stop mattering overnight. But the relative weight probably shifts. A page with thin content and a strong backlink profile might have survived the 30-document filter in the old system. In a system that semantically evaluates hundreds of candidates, that page has to actually be the best answer to the query. Not just the best-linked one.

Haynes makes a related point about AI Overviews. If TurboQuant reduces the computational cost of generating those AI summaries, Google can expand them to more query types and draw from a broader, more precise set of sources. The practical effect: AI Overviews that are more accurate and appear on more queries. That means more zero-click results for informational searches, but potentially also a wider range of sites earning citations.

The personalization angle that matters more than rankings

There is a second-order effect here that I think the SEO community is underestimating. Faster vector search does not just help with query-to-document matching. It also makes user-specific personalization computationally feasible at scale. Google has been building Personal Intelligence features (search results that incorporate your history, preferences, and behavioral data), but these require comparing your profile vector against content vectors in real time. That has been prohibitively expensive for most queries.

TurboQuant could change that math significantly. If comparing vectors becomes near-free, personalized search results stop being a special case and become the default. For SEOs, that means the same query could surface different pages for different users based on their search history, content preferences, and engagement patterns. The notion of a single "ranking" for a keyword gets even fuzzier than it already is.
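A toy sketch shows why the same query can produce different orderings. The blending rule here (a weighted mix of the query vector and a profile vector) is purely my assumption for illustration. Nothing public says Google personalizes this way, and `personalized_rank`, `profile_weight`, and the vectors are hypothetical.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def personalized_rank(query_vec, profile_vec, docs, profile_weight=0.3):
    """Rank document ids against a blend of the query and the user's profile."""
    blended = [
        (1 - profile_weight) * q + profile_weight * p
        for q, p in zip(query_vec, profile_vec)
    ]
    ranked = sorted(docs.items(), key=lambda kv: cosine(blended, kv[1]), reverse=True)
    return [doc_id for doc_id, _ in ranked]

# Assumed toy vectors: the second dimension loosely means "beginner vs. advanced".
docs = {
    "beginner-guide": [0.9, 0.4],
    "advanced-reference": [0.9, -0.4],
}
query = [1.0, 0.0]

user_a = personalized_rank(query, [0.0, 1.0], docs)   # history leans beginner
user_b = personalized_rank(query, [0.0, -1.0], docs)  # history leans advanced
print(user_a[0], user_b[0])
```

Both users typed the same query, and both pages are equally relevant to it in isolation. The profile vector is what breaks the tie, in opposite directions.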

I would not panic about this. Personalized search has been slowly expanding for years. But TurboQuant could accelerate the shift from "affecting a small percentage of queries" to "affecting most queries, most of the time." If you are still reporting to clients based on incognito rank checks, that practice has an expiration date somewhere around this transition.

A research paper, not a product launch

TurboQuant is published research, not a launched product. Google publishes a lot of work that takes months or years to reach production systems, and some of it never does. So the appropriate response is not to overhaul your SEO strategy tomorrow morning.

But Haynes makes a compelling observation: previous vector search innovations, like MUVERA, influenced ranking systems within months of publication. If TurboQuant follows a similar path, its effects could start showing up in a core update by late 2026 or early 2027.

The directional bet is straightforward. Content depth and semantic relevance are going to matter more, not less. If your pages rank primarily because of domain authority and a strong backlink profile but the content itself is thin, templated, or written to satisfy a keyword checklist, those pages are increasingly at risk. Not tomorrow, but on a timeline measured in quarters.

The specific thing I would do this week: pull your top 20 pages by organic traffic. For each one, read it honestly and ask whether it is the best answer to the query it ranks for. Not the most authoritative domain. Not the best-linked page. The best actual answer. If you can name three competitor pages whose content is genuinely more useful, that page is vulnerable in a world where Google can semantically compare hundreds of candidates instead of 30.
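If you keep that audit in a spreadsheet, the triage step is a few lines of code. This is a minimal sketch with hypothetical field names (`organic_sessions`, `better_competitors`) and made-up example rows. The judgment about which competitor pages are more useful still has to come from you reading them.

```python
def pages_to_review(pages, top_n=20, competitor_threshold=3):
    """Return URLs of top-traffic pages where you noted enough stronger competitors."""
    top_pages = sorted(pages, key=lambda p: p["organic_sessions"], reverse=True)[:top_n]
    return [
        p["url"]
        for p in top_pages
        if len(p["better_competitors"]) >= competitor_threshold
    ]

# Made-up audit rows; better_competitors is filled in by hand after reading each page.
pages = [
    {"url": "/pricing-guide", "organic_sessions": 5400,
     "better_competitors": ["a.example/x", "b.example/y", "c.example/z"]},
    {"url": "/glossary", "organic_sessions": 3100, "better_competitors": []},
    {"url": "/old-post", "organic_sessions": 40,
     "better_competitors": ["a.example/1", "b.example/2", "c.example/3", "d.example/4"]},
]

flagged = pages_to_review(pages, top_n=2)
print(flagged)
```

The low-traffic post has the most competitors listed, but it falls outside the top-traffic cut, so it is not flagged. The point is to start with the pages where a ranking shift would actually cost you.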

For more context on how AI systems evaluate and cite content differently from traditional search, our earlier analysis of AI citation signals versus Google ranking factors pointed in a similar direction. Semantic quality is separating from traditional authority signals, and TurboQuant gives Google the infrastructure to lean further into that separation.

The algorithm nobody is watching might matter more than the next core update

The SEO community tends to fixate on core update rollouts and ranking volatility. Those matter for short-term traffic, sure. But they rarely change the underlying model of how search works. TurboQuant is the kind of development that changes the model itself. Not because compression algorithms are exciting (they are not), but because the constraint they relax, namely how many documents Google can deeply understand per query, has been the silent bottleneck shaping what gets ranked for over a decade.

If that bottleneck loosens, the game shifts from "get your page into the top 30 candidates through links and authority" to "be the best answer when 300 candidates are on the table." One of those games rewards traditional SEO tactics heavily. The other rewards being genuinely, substantively useful. I know which direction seems more likely, and honestly, it is the direction Google has been nudging toward for years now. TurboQuant just removes one of the technical reasons it could not fully get there.