OpenAI Ignored Robots.txt to Scrape One Publisher 12.2M Times in 90 Days

OpenAI scraped Trusted Reviews 12.2 million times in 90 days while ignoring the site's robots.txt directive, according to TollBit dashboard data published by The Media Leader. Meta hit the same site 2.8 million times, Amazon 2.4 million, Perplexity 101,000 and ByteDance 95,000. Despite the explicit no-crawl instruction, the publisher received no compensation from any of the five vendors.

That figure covers one publisher over one quarter. Chris Dicker, an Independent Publishers Alliance board member and CEO of Candr Media (which owns Trusted Reviews, Wareable, Recombu and a handful of other UK tech titles), estimates that 100 of the IPA's 300 member sites won't survive the next 15 months. Some of that is Google's AI Overviews eating click-through. A meaningful chunk is the bandwidth and infrastructure cost of being scraped at industrial volume by buyers who pay nothing.

The "ad tech tax" looks generous in hindsight

Publishers have spent fifteen years complaining about the ad tech middleman's cut. Across SSPs, DSPs, exchanges, verification and ID resolution, the programmatic stack takes 30% to 50% of every dollar before it reaches a publisher's revenue line. That has been the running grievance since the Lumascape became a meme.

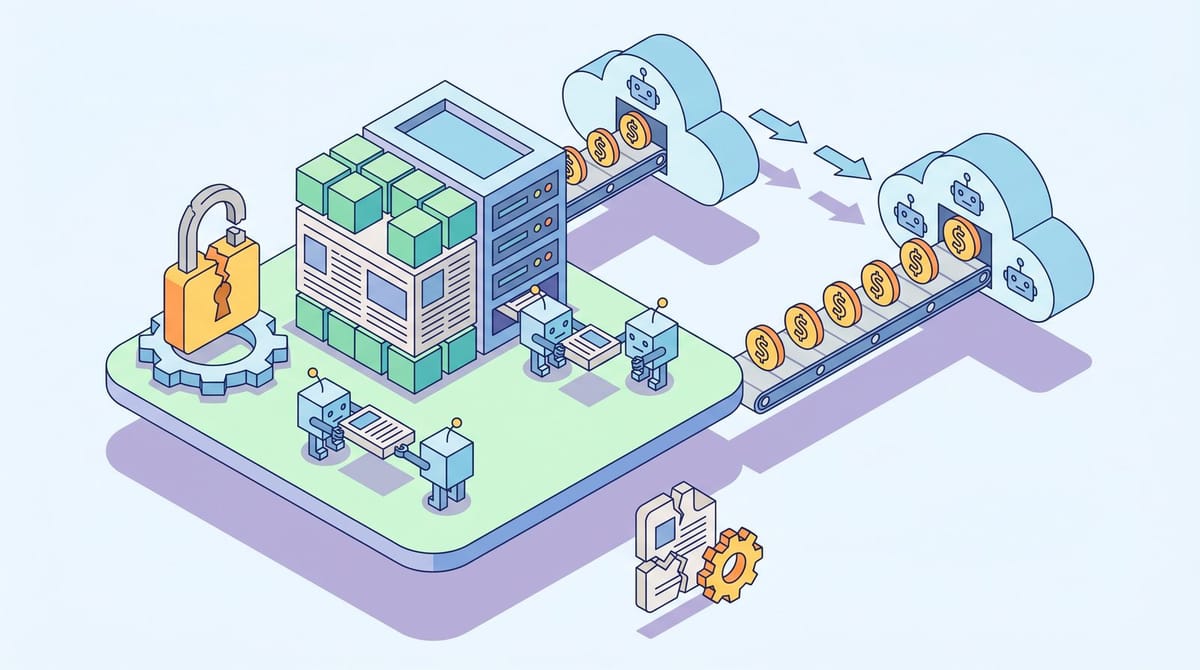

An anonymous publishing executive quoted by Digiday this week reframed the comparison in a way I think is going to stick. Ad tech vendors take a cut, sure, but they also pass revenue back. AI data brokers operate like demand-side platforms for content, except their fee is 100% and the publisher's share is zero. The middleman is now the entire transaction.
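For a rough sense of scale, the sketch below computes what reaches the publisher from each $1.00 of value at the low and high ends of the 30% to 50% ad tech take described earlier, compared with a scraper that pays nothing (an effective 100% take). The `publisher_share` helper is a hypothetical illustration that models only the take rate, not any real revenue flow.

```python
def publisher_share(gross: float, take_rate: float) -> float:
    """Return the amount left for the publisher after intermediaries keep take_rate (0.0 to 1.0)."""
    if not 0.0 <= take_rate <= 1.0:
        raise ValueError("take_rate must be between 0.0 and 1.0")
    return gross * (1.0 - take_rate)


# Low and high ends of the programmatic take, then a scraper that pays nothing.
scenarios = [("ad tech, 30% take", 0.30), ("ad tech, 50% take", 0.50), ("AI scraper", 1.00)]
for label, rate in scenarios:
    print(f"{label}: ${publisher_share(1.00, rate):.2f} of each $1.00")
```

Even the worst case in the programmatic range leaves the publisher half the dollar. At a 100% take there is no range left to argue about.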

Dicker put it more bluntly. "With scrapers, the value extraction is total. They're taking 100% of the content, paying 0% and then in some cases using that content to create competing products that remove the publisher entirely." That last clause is the part that should make any marketer who depends on quality publisher inventory pay attention. It isn't just a revenue transfer. It's a content-destruction loop.

40 vendors, $1B in revenue, no royalty model

Media analyst Matthew Scott Goldstein put the scraper economy at $1 billion in 2026 revenue, citing Mordor Intelligence's market sizing. He named 21 vendors. TollBit's running index is closer to 40. The names that turned up in the Digiday reporting include Firecrawl, Exa, Tavily, Brave, You.com, Perplexity Sonar, Bright Data, and Parallel Web Systems (which the article flagged for rebranding itself as "agentic infrastructure," a phrase doing real work to obscure what it actually does).

None of these companies has a meaningful licensing relationship with the publishers they hit. Some operate stealth crawlers that don't identify themselves. Some respect robots.txt. Most don't. Digiday's data review reported that AI scraper traffic grew 597% from January to December 2025, and that roughly 30% of AI bot scrapes violated an explicit "disallow" directive in robots.txt.
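robots.txt is purely advisory. A crawler has to choose to read it and choose to honor it. The sketch below uses Python's standard-library `urllib.robotparser` to show the check a compliant crawler would run before fetching a page. The robots.txt contents and the URL are hypothetical. `GPTBot` (OpenAI) and `CCBot` (Common Crawl) are real crawler user-agent tokens and appear here only as examples of what a publisher might disallow.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block two AI crawlers site-wide, allow everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: CCBot
Disallow: /

User-agent: *
Allow: /
"""


def allowed(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Return True if robots_txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as freshly loaded
    return parser.can_fetch(user_agent, url)


url = "https://www.example.com/reviews/some-laptop"
for agent in ("GPTBot", "CCBot", "SomeSearchBot"):
    print(agent, "allowed" if allowed(agent, url) else "disallowed")
```

Nothing enforces a `False` result. A scraper that skips this check, or that sends a generic user-agent string the way stealth crawlers do, still gets the page. That is how a "disallow" directive ends up violated at scale.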

The thing that surprised me when I dug into this isn't the volume. It's how recently the entire category came into existence. Five years ago there was no scraper layer between publisher content and large language models, because there were no large language models worth scraping for. The middleman built itself in about 36 months, and the publishers it disintermediated were never asked.

TollBit is the only collection model that currently exists

If there's a publisher-side answer right now, TollBit's bot paywall is roughly it. Publishers set per-page or per-query rates, AI buyers pay TollBit a transaction fee on top, and the publisher keeps 100% of the underlying license revenue. Nearly 7,000 publisher sites are on the network. About 20% of them have made money from the paywall, with payouts ranging from a few hundred dollars a month to tens of thousands depending on traffic and rate card.
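This fee-on-top structure inverts the ad tech model, where intermediaries take their cut out of the publisher's revenue. Below is a minimal sketch that assumes a flat per-page rate and a percentage platform fee. The page count, the $0.005 rate and the 10% fee are hypothetical numbers. They are not TollBit's actual pricing, which the reporting doesn't specify.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass
class Settlement:
    publisher_revenue: Decimal  # the publisher's license revenue, passed through in full
    platform_fee: Decimal       # charged to the buyer on top, not deducted from the publisher
    buyer_total: Decimal        # what the AI buyer pays in total


def settle(pages: int, rate_per_page: Decimal, fee_rate: Decimal) -> Settlement:
    """Fee-on-top settlement: buyer pays the publisher's rate card plus a platform fee."""
    license_revenue = pages * rate_per_page
    fee = license_revenue * fee_rate
    return Settlement(license_revenue, fee, license_revenue + fee)


# Hypothetical inputs: 10,000 pages at $0.005 each, with a 10% platform fee.
s = settle(10_000, Decimal("0.005"), Decimal("0.10"))
print(f"publisher ${s.publisher_revenue:.2f}, fee ${s.platform_fee:.2f}, buyer pays ${s.buyer_total:.2f}")
```

With these placeholder inputs, 10,000 pages at $0.005 each yield $50 for the publisher, and the buyer pays $55 once the fee is added. Changing the fee rate changes only the buyer's bill, never the publisher's line.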

TollBit reported 730% growth in bot paywall adoption between Q4 2024 and Q1 2025, and the network now sits at over 3,000 active monetizing sites including TIME and Fast Company. In March, Arc XP added TollBit as a turnkey integration, which means publishers running on the Washington Post's CMS now have a one-click path to flipping the paywall on. Akamai and Skyfire announced a parallel integration a month later targeting the CDN layer.

It seems to be working in pockets. It is not yet working at scale. That roughly 80% of TollBit publishers haven't earned anything from the paywall isn't a TollBit problem so much as a buyer-side problem: most scrapers still find it cheaper and easier to ignore the paywall than to pay it. The collection layer needs the buy side to participate, and right now the buy side is voting with its bandwidth.

Why this matters if you're spending a media budget, not running a publisher

The straightforward read is "publishers in trouble, not my problem." I think that's the wrong read for anyone with a brand-safe inventory line in their plan.

Two things follow from a 597% scraper traffic surge against a publisher set that's already losing clicks to AI Overviews. First, the long tail of independent publishers (the 100 sites Dicker thinks won't last 15 months) is going to consolidate or disappear. The contextual targeting universe gets smaller. The brand-safe inventory marketplace gets more concentrated, which historically means CPMs go up faster than impressions go down. Second, the surviving publishers are going to either license to AI vendors directly (USA Today's parent company already books more revenue from AI licensing than from display) or move behind harder paywalls. Either path narrows the open web that programmatic depends on.

What I would actually do this quarter if I had a media budget that touches publisher inventory: ask your DSP rep for a list of the publishers in your most-used contextual or PMP packages, then cross-check that list against the TollBit and Cloudflare bot-monetization networks. Sites that are monetizing AI traffic have a path to surviving. Sites that aren't are running on the same revenue lines they were three years ago, only now with more bandwidth costs and fewer search clicks. From what I've seen, the survivor list is shorter than people assume, and a 30-minute audit will tell you which side of it your buy is sitting on.
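Once both lists are in hand, the cross-check is a set comparison. The minimal sketch below assumes you have already exported your package's domains from the DSP and compiled the monetizing-site lists yourself. The domains are placeholders, and the code assumes no TollBit or Cloudflare API. Normalizing case and a leading `www.` is a simple, assumed cleanup step so the lists line up.

```python
from collections.abc import Iterable


def normalize(domain: str) -> str:
    """Lowercase a domain and drop a leading 'www.' so lists from different sources line up."""
    d = domain.strip().lower()
    return d[4:] if d.startswith("www.") else d


def audit(package_domains: Iterable[str], monetizing_domains: Iterable[str]) -> tuple[list[str], list[str]]:
    """Split a package's sites into those on a bot-monetization network and those that aren't."""
    monetizing = {normalize(d) for d in monetizing_domains}
    package = {normalize(d) for d in package_domains}
    return sorted(package & monetizing), sorted(package - monetizing)


# Placeholder inputs: in practice, a DSP/PMP export and the network lists you compiled.
package = ["www.site-a.example", "site-b.example", "Site-C.example"]
monetizing = ["site-a.example", "site-c.example", "site-d.example"]

on_network, off_network = audit(package, monetizing)
print("monetizing AI traffic:", on_network)
print("not monetizing:", off_network)
```

The second list is the one to watch. Those sites are carrying rising bandwidth costs on old revenue lines.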

The "Napster moment" framing keeps coming up in publisher quotes. It's not a perfect analogy. Napster was eventually replaced by a licensing model that worked because the buyers (Apple, Spotify) and the labels wanted the music to keep being made. The buy side here, at least so far, hasn't shown the same instinct. The AI vendors don't need any individual publisher to keep producing. They need the corpus to keep existing, and the corpus is mostly historical at this point.

The collection problem won't be solved by the people doing the collecting

If publishers wait for AI vendors to volunteer to pay, they'll wait forever. The TollBit-style middleware approach is the only mechanism that doesn't require goodwill, because it makes payment a precondition of access rather than a moral question. Whether enough infrastructure providers (Cloudflare, Akamai, Fastly, the Arc XPs of the world) push it to default-on is the variable that decides whether the next 24 months look like Napster (chaos, lawsuits, eventual licensing regime) or like the music industry between 2001 and 2003 (slow bleed, broken catalog, half the labels gone).
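To make "payment as a precondition of access" concrete, here is a minimal sketch of the per-request decision such middleware might make. Self-identified AI crawlers without a valid payment token get HTTP 402 Payment Required, and everyone else passes through. The crawler token list, the `PAID_TOKENS` set and the `gate` function are hypothetical. This is not how TollBit, Cloudflare or Akamai actually implement it.

```python
from http import HTTPStatus
from typing import Optional

# Hypothetical, deliberately short list of self-identifying AI crawler tokens.
AI_CRAWLER_TOKENS = ("gptbot", "ccbot", "perplexitybot")

# Hypothetical tokens issued to buyers who have already paid for access.
PAID_TOKENS = {"tok_paid_123"}


def gate(user_agent: str, payment_token: Optional[str]) -> HTTPStatus:
    """Return the status a pay-before-access policy would send for this request."""
    ua = user_agent.lower()
    if not any(token in ua for token in AI_CRAWLER_TOKENS):
        return HTTPStatus.OK  # humans and non-AI bots pass through unchanged
    if payment_token in PAID_TOKENS:
        return HTTPStatus.OK  # AI crawler that has paid
    return HTTPStatus.PAYMENT_REQUIRED  # 402: no payment, no content


print(int(gate("Mozilla/5.0 (Windows NT 10.0)", None)))
print(int(gate("Mozilla/5.0 (compatible; GPTBot/1.0)", None)))
print(int(gate("Mozilla/5.0 (compatible; GPTBot/1.0)", "tok_paid_123")))
```

Like robots.txt, this version catches only crawlers that announce themselves in the user-agent string. A stealth crawler sails through. That is presumably part of why CDN-layer integrations matter: infrastructure providers can apply bot detection that looks beyond a self-reported name.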

I don't have strong conviction on which way this lands. I do have conviction that the $1 billion scraper economy isn't going back into a smaller box, and that the publishers who've already enabled the paywall are probably about to look very smart in 2027.

Notice Me Senpai Editorial