Wikipedia, Reddit, and G2 Drive More AI Citations Than Your Own Pages Do

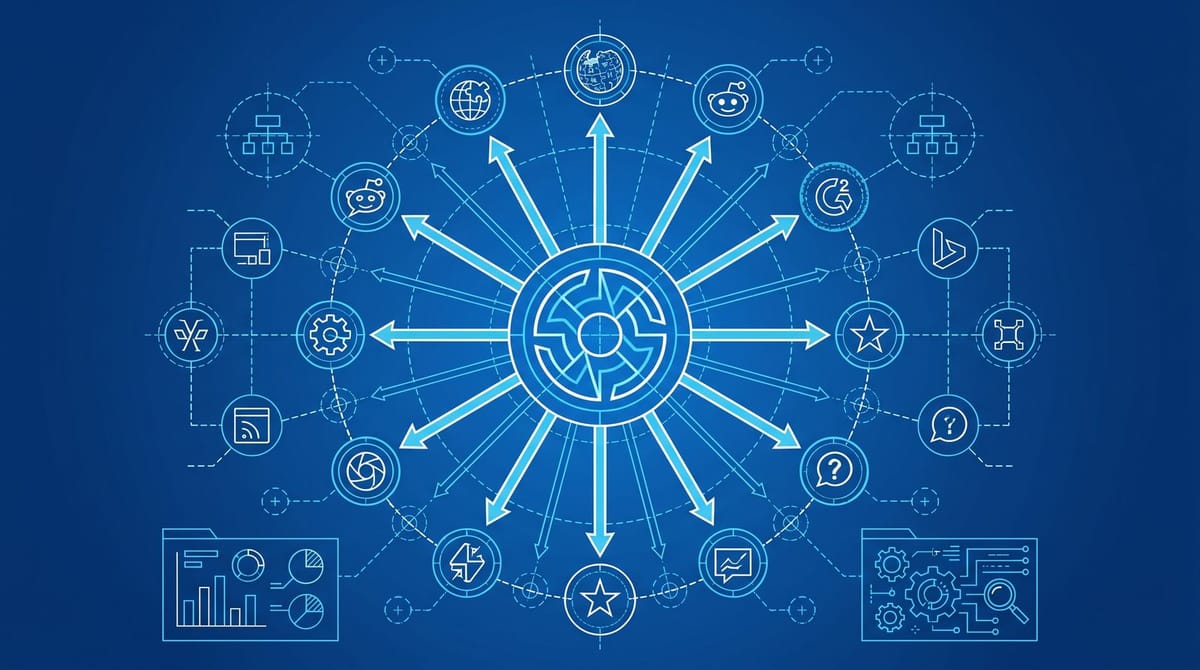

Wikipedia, Reddit, and G2 now drive more LLM citations than the brand pages they describe. Wikipedia alone appears in roughly 27% of all citations across ChatGPT, Gemini, and Perplexity, according to an analysis by usegrowthos.com. G2 supplies about 33% of cited reviews inside ChatGPT and Google AI Overviews, and roughly 75% of those inside Perplexity. The audit unit that matters now is the top 20 third-party pages mentioning your brand, not your own sitemap.

The piece that prompted this read is Kevin Cotch’s breakdown in MarTech, which lays out the categories (UGC, reviews, editorial, Q&A, data aggregators) cleanly. The data underneath is sharper than the framework, and once you read the citation studies, the strategic takeaway is uncomfortable.

The math is brutal once you actually look at it

The 5W AI Platform Citation Source Index 2026 found that 15 domains account for 68% of all consolidated AI citation share. That’s a level of concentration Google PageRank never produced. It’s also why the brand-owned page you spent six months optimizing keeps losing the citation slot to a 200-word Wikipedia paragraph someone wrote in 2017.

Fifteen domains now decide 68% of what AI search says about your category.

Reddit sits at the top of that table. Across major LLMs it accounts for roughly 40% of all citations. Inside Perplexity specifically, Reddit hit 46.7% of citations by one count, while a separate study showed it crossing 24% of all citations during January 2026. ChatGPT cites Reddit in over 5% of total responses, while Gemini cites Reddit in only 0.1%. That kind of platform-level variance is exactly what destroys any “general AEO strategy” deck. The same prompt, in two different LLMs, pulls from two functionally different webs.

Then the B2B layer. G2’s 2026 AI Search Insight Report found that G2 is now the #1 cited source across every major model for B2B software queries. When B2B software buyers were asked which signal makes them trust an AI chatbot’s answer, the top response wasn’t authority of the brand or recency of the data. It was a citation from a review site. Trustpilot, meanwhile, is now the fifth most cited domain on ChatGPT globally, with click-throughs from AI search up roughly fifteen-fold year over year.

Why your own pages can’t carry the citation alone

LLMs aren’t ranking your homepage against Wikipedia. They’re synthesizing across both, and reaching for the source that signals “third-party validation.” A Wikipedia paragraph wins not because it’s better written, but because it isn’t yours. From what I’ve seen, the brands losing citation share aren’t the ones with weak homepages. They’re the ones with no maintained Wikipedia page, no claimed G2 profile, and a Reddit footprint built entirely from angry support threads.

This connects to a thing we wrote about earlier this week: brand search volume now predicts AI citations better than backlinks. Both signals live off your domain. So does the third-party citation surface. You’re trying to influence two layers of someone else’s index, simultaneously, with no direct levers.

The other thing that should be sobering is volatility. A single parameter change at Google in late 2025 reportedly cut ChatGPT’s Reddit citation share from 60% to 10% in six weeks, with PR Newswire, Forbes, and Medium absorbing the displaced share. That’s the kind of swing that shows why “we’ll just produce more thought leadership” isn’t a strategy. It’s a narrow bet on one citation surface staying weighted the same way it is right now.

The 20-page audit, and what to actually do with it

Pick your top 10 commercial-intent prompts. The kind real buyers type into ChatGPT or Perplexity when they’re scoping a vendor. For each prompt, run it through the platforms that matter to your category. B2B SaaS: ChatGPT, Perplexity, and Google AI Overviews. Consumer: add Gemini. Capture every cited URL.

Dedupe to a list of unique pages. You’ll usually land somewhere between 15 and 25. If you don’t have an AEO tracking tool yet, Profound and Otterly are two paid options right now. The cheaper one starts at $29 a month, which is enough to run this audit without losing a weekend to manual checks.
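If you captured the cited URLs by hand, the dedupe step can be scripted. This is a minimal sketch, not a feature of any AEO tool: the function names, the list of tracking parameters, and the choice to treat `www.` and trailing slashes as noise are all assumptions you should adjust.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: these query parameters only carry tracking data and can be dropped.
TRACKING_PREFIXES = ("utm_",)
TRACKING_KEYS = {"ref", "fbclid", "gclid"}


def normalize_url(url: str) -> str:
    """Reduce a cited URL to a canonical form so near-duplicates collapse."""
    parts = urlsplit(url.strip())
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    # Keep only query parameters that are not tracking noise.
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query)
        if not key.startswith(TRACKING_PREFIXES) and key not in TRACKING_KEYS
    ]
    path = parts.path.rstrip("/") or "/"
    # Force https and drop the fragment; both are assumptions about what counts as "the same page".
    return urlunsplit(("https", host, path, urlencode(query), ""))


def dedupe_citations(urls):
    """Return unique normalized URLs, in the order they were first seen."""
    seen = {}
    for url in urls:
        seen.setdefault(normalize_url(url), url)
    return list(seen)
```

Feed it every URL you captured across prompts and platforms; the length of the returned list is the number of pages you actually need to audit.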

For each cited page, classify it into one of five buckets: Wikipedia/Wikidata, Reddit/Quora, G2/Trustpilot/Capterra, editorial coverage, or owned. Then ask three questions per page:

1. Does this page describe my brand correctly? You’d be surprised how often the honest answer is “almost.”

2. Can I edit it directly? (Reddit yes, Wikipedia maybe, G2 yes via vendor profile, editorial no.)

3. If I can’t edit it, what’s the fastest correction path?

For Wikipedia, that’s a talk-page edit request with cited third-party sources. Editors hate primary sources, so a press release from your own newsroom won’t cut it. For G2 and Capterra, it’s claiming the listing, then pushing a structured review request to your top 30 accounts. The G2 acquisition of Capterra, Software Advice, and GetApp is closing in Q1 2026 and is estimated to grow G2’s citation share by another 76% in bottom-of-funnel (BOFU) prompts. The cleanest move is consolidating review velocity on G2 first, since the long tail of Capterra/Software Advice will inherit your G2 reputation post-merger anyway.

For Reddit, it’s actually showing up under your real handle and answering questions in the subs your buyer reads. /r/SaaS, /r/marketing, /r/PPC, /r/sysadmin, whichever applies. Sponsored AMAs don’t beat staff comments answering specific questions for free. AI models seem to weight comment density and account age over upvote count, which is roughly the inverse of what most brand teams optimize for.
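The five-bucket classification from the audit can be roughed out in code before you review each page by hand. This is a sketch under stated assumptions: the bucket keys, the domain lists, and the rule that anything unrecognized counts as editorial are mine, and `acme.example` is a placeholder for your own domain. The editability notes mirror question 2 above, except the `owned` entry, which is an assumption.

```python
from urllib.parse import urlsplit

# Assumption: illustrative domain lists; extend them for your category.
BUCKET_DOMAINS = {
    "wikipedia_wikidata": {"wikipedia.org", "wikidata.org"},
    "reddit_quora": {"reddit.com", "quora.com"},
    "review_sites": {"g2.com", "trustpilot.com", "capterra.com"},
}

# Answers to "Can I edit it directly?" per bucket.
EDITABLE = {
    "wikipedia_wikidata": "maybe",
    "reddit_quora": "yes",
    "review_sites": "yes, via vendor profile",
    "editorial": "no",
    "owned": "yes",  # assumption: you control your own pages
}


def classify(url, owned_domains):
    """Assign a cited URL to one of the five audit buckets."""
    host = (urlsplit(url).hostname or "").lower()

    def matches(domain):
        # Match the domain itself or any subdomain of it.
        return host == domain or host.endswith("." + domain)

    if any(matches(domain) for domain in owned_domains):
        return "owned"
    for bucket, domains in BUCKET_DOMAINS.items():
        if any(matches(domain) for domain in domains):
            return bucket
    # Assumption: everything else is treated as editorial coverage.
    return "editorial"
```

For example, `classify("https://www.reddit.com/r/SaaS/comments/abc", {"acme.example"})` lands in `reddit_quora`, and `EDITABLE["reddit_quora"]` tells you the correction path is direct. The fallback will mislabel forums and aggregators the lists don't cover, so spot-check the `editorial` bucket.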

One thing not to do

Don’t try to manipulate Wikipedia. Don’t pay agencies that promise “Wikipedia placement.” The page will get nuked, the editor community will flag your domain in their watchlists, and you’ll be effectively blacklisted from one of the highest-leverage citation surfaces in the AI stack. The right play is providing third-party sources that an editor can cite during the next routine cleanup pass: industry coverage, real case studies, named research. Stuff a volunteer editor can verify in five minutes without calling your PR firm.

The harder honest story is that this work isn’t cleanly measurable. You can’t A/B test a Wikipedia paragraph. You can monitor citation share month over month using AEO tools, but the feedback loop is slow and noisy, and a Google parameter change can erase the gain overnight. From what I’ve seen so far, teams that are taking AI search seriously are now budgeting roughly 20% of their content/SEO line for off-domain work that has zero direct attribution. That sounds painful. It’s also probably the right number, given the data.

Three things to ship this week

Audit your Wikipedia page, or its absence. Pull your top five G2 competitors’ review counts and timestamps. Run 10 commercial prompts through ChatGPT and Perplexity and write down which third-party domains keep appearing. Together that’s about four hours of work. The output is a list that tells you where your AI search visibility actually lives, which is almost never where your owned-content strategy assumes it lives.
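If you would rather not tally the recurring domains by eye, a few lines of code will do it. This is a hypothetical helper, assuming you have pasted each prompt’s cited URLs into a dictionary keyed by prompt; the function name and the `min_prompts` threshold are my own choices.

```python
from collections import Counter
from urllib.parse import urlsplit


def recurring_domains(citations_by_prompt, min_prompts=2):
    """Count how many prompts each domain is cited in, keeping the repeat offenders."""
    counts = Counter()
    for urls in citations_by_prompt.values():
        domains = set()
        for url in urls:
            host = (urlsplit(url).hostname or "").lower()
            if host.startswith("www."):
                host = host[4:]
            if host:
                domains.add(host)
        # A domain counts once per prompt, however many of its pages were cited.
        counts.update(domains)
    # Sort by prompt count (descending), then alphabetically for a stable order.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [(domain, n) for domain, n in ranked if n >= min_prompts]
```

Counting per prompt rather than per URL keeps one link-heavy answer from dominating the list. Subdomains such as `en.wikipedia.org` are counted separately from their parent domain here; merge them if that suits your audit better.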

If you’re optimizing your own site for “answer engine readiness” before doing any of this, you’re polishing a wing on a plane that isn’t flying yet. The citation engine sits outside your domain. Most of the work probably does too.

By Notice Me Senpai Editorial