AI Citation Signals Are Nothing Like Google Ranking Factors. Here Is the Data.

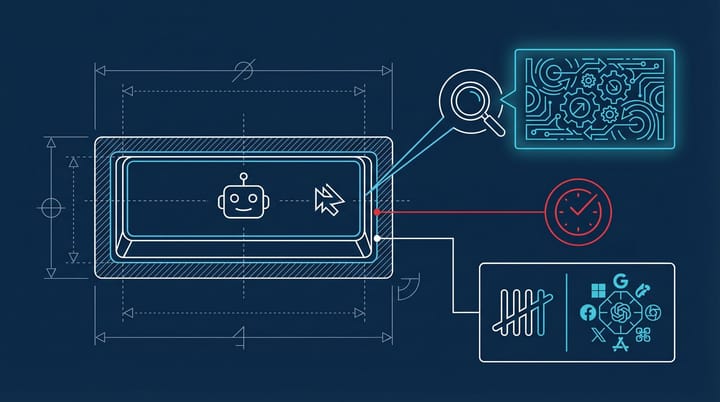

Most SEO teams are running their Google playbook on AI systems and wondering why it's not translating. I get it. For twenty years, the optimization logic was roughly the same: figure out what the algorithm rewards, do more of that. But a new analysis of 98,000+ citation rows across seven verticals from roughly 1.2 million ChatGPT responses, published by Kevin Indig via Gauge, suggests the signals AI models use to decide what to cite look almost nothing like what Google rewards. And some of the findings are, honestly, kind of uncomfortable.

The uncomfortable part isn't that AI works differently. Everyone expected that. It's that several things we've been taught are "best practices" in SEO are associated with fewer AI citations: hedging language, pricing language, even mentions of well-known brands. If you've spent the last decade building content around those signals, this data suggests you've been optimizing in the wrong direction for a channel that's growing fast.

Declarative sentences win. Hedging loses.

The single clearest signal across all seven verticals: declarative language gets cited more. Opening with "[X] is [Y]" patterns, direct claims, no preamble. The aggregate lift is +14% over pages that open with questions or qualifications.

This part surprised me, because it runs directly against how most of us were trained to write web content. The conventional advice has always been to lead with the question your reader is asking, mirror their uncertainty, build trust through qualification. "You might be wondering..." or "Many marketers struggle with..." That whole approach.

AI systems seem to treat hedging language as a negative signal. "This may help teams understand" gets cited less than "Teams that do X see Y." From what I've seen working on content strategy for a few SaaS sites, this tracks with how large language models process authority. They're pattern-matching for confidence, not for empathy. The page that states a fact plainly reads as more authoritative to the model than the page that carefully hedges around the same fact.

The practical implication is pretty stark. If you're writing content that you want AI systems to cite, you probably need to restructure your intros. Not all of them, not immediately. But pull up your top 20 pages by organic traffic and look at how many open with a question or a hedge. My guess is it's most of them. Rewriting those intros to lead with a clear declarative statement is a quick test worth running.

Your heading structure is probably in the dead zone

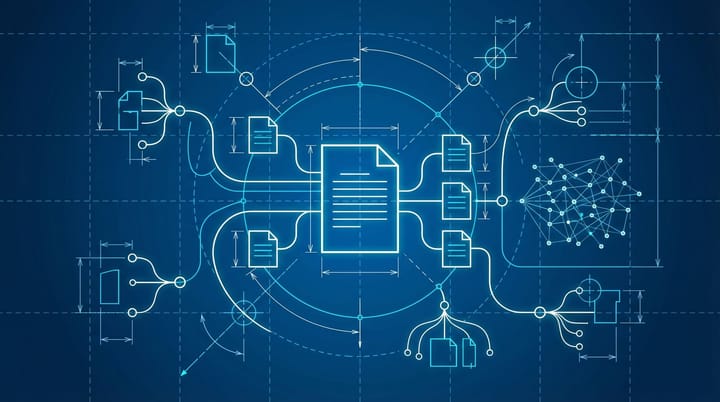

This is the finding I keep coming back to. The data shows a "3-4 heading dead zone" that suppresses AI citations across every vertical studied. Pages with 3-4 headings performed worse than pages with either fewer headings or more. Partial structure, apparently, confuses the model more than no structure at all.

The problem is that 3-4 headings is exactly what a typical 1,000-1,500 word blog post has. One intro, three or four sections, a conclusion. It's the default template for probably 80% of B2B content on the internet right now. And it's sitting in the worst possible range for AI citation.

The optimal ranges vary wildly by vertical, which makes this harder to act on. Indig's data shows Crypto content performs best with 5-9 headings (34.7% of high-cited pages), Finance peaks at 10-19 headings (29.4%), CRM and SaaS content rewards 20-49+ headings (12.7-18.2%), and Healthcare is bimodal, performing best with either zero headings or 5-9 max.

On a site I consulted for last year in the SaaS space, we tested restructuring a batch of pillar pages from the standard 4-heading format to a more granular 15-20 heading structure. Basically turning each section into multiple sub-sections with their own H2s and H3s. The pages were more scannable for humans too, which was a nice side effect, but the real goal was to see if AI tools would pick them up more often. It's early, but the direction seems to match what this data is showing. More structure, not less. At least for that vertical.

Pricing language is a citation killer (except in finance)

This one is counterintuitive enough that I want to be careful not to overstate it. The data shows pricing language correlates with a 0.84x citation rate across verticals, roughly 16% fewer citations than pages without it. The pattern holds in five of six verticals studied. Finance is the sole exception, where pricing language actually helps (1.16x lift).

I think there are a few possible explanations. AI models may associate pricing language with commercial intent pages that are more likely to be promotional than informational. Or pricing creates a specificity problem. Prices change, and models might have learned that price-containing pages go stale faster. Whatever the mechanism, the signal is consistent enough to be worth paying attention to.

If I were optimizing a product page or a comparison post for AI visibility, I'd probably test separating the pricing details onto a dedicated pricing page and keeping the feature or comparison content clean of dollar amounts. That's a structural change, not a content quality change, but this data suggests it matters.

Famous brands hurt. Niche expertise helps.

Here's where it gets weird. Knowledge Graph entities, meaning well-known brands and concepts that Google's Knowledge Graph recognizes, show a negative correlation with AI citations. High-cited pages average 1.42 Knowledge Graph entities per page. Low-cited pages average 1.75. More household names, fewer citations.

On paper, that sounds backwards. Google has spent years rewarding entity-rich content. Mentioning known brands, people, and concepts helped pages signal topical authority to Google's algorithms. AI systems seem to work the other way around. Niche-specific entities, the kind of terminology and references that only practitioners in a field would use, outperform generic brand mentions.

This connects to something I've been noticing anecdotally for a while now. The content that AI tools tend to surface in my own testing tends to be more specialized, more practitioner-focused, than what Google surfaces. Google rewards broad authority. AI rewards depth. That's a rough generalization and I'm sure there are exceptions, but this data gives me numbers to put behind the feeling. And it aligns with what we've been seeing in how marketers are shifting spend toward experimental channels as traditional signals become less reliable.

Date stamps matter more than you'd think

Pages with date entities get cited at 1.42x the rate of pages without them. That's across nearly every vertical, with Finance being the lone exception (0.65x, possibly because financial content dates poorly and models have learned to be skeptical of dated financial claims).

This is useful because it's easy to act on. If your content doesn't include explicit dates, such as "as of March 2026" or "updated Q1 2026", you're leaving citation potential on the table. It's not a dramatic structural change. It's a formatting habit. And the data suggests AI models treat date presence as a freshness and specificity signal.

Numeric specificity in general shows a positive correlation. The strongest signals appear in Product Analytics (+1.10x) and Finance (+0.98x). Specific numbers, specific dates, specific benchmarks. The pattern is consistent: concrete beats abstract.

Reddit's SEO dominance hasn't crossed over to AI

One more finding that's worth mentioning because it contradicts a popular assumption. User-generated content represents only 5.3% of AI citations, while corporate content accounts for 94.7%. Even Crypto, which has the highest UGC citation rate at 9.2%, is overwhelmingly dominated by institutional sources.

Indig puts it plainly: "The Reddit effect in SEO has not translated proportionally to AI citations." Reddit has been eating Google search results for the last two years. But when AI models cite sources, whether from training data or retrieval pipelines, they pull from traditional publishers, official documentation, and corporate content at a rate that makes UGC almost irrelevant.

For anyone who has been worried that Reddit's SEO rise meant traditional content was becoming less valuable, this is a meaningful counterpoint. The content types that work for Google discovery and AI citation are diverging, and owned corporate content is holding up better in the AI channel than most people expected.

Restructuring for a system that doesn't rank the way Google does

The temptation with data like this is to create a checklist and start optimizing everything. I'd push back on that instinct a little. These correlations are drawn from one (very large) dataset of ChatGPT responses. Other AI systems may weight signals differently. And correlation across 98,000 citation rows is strong evidence, but it's not the same as a confirmed ranking factor.

What I'd actually do with this, if I were running content for a B2B site right now: pick your top 10 pages by traffic, audit them against three signals. Do they open with declarative statements or hedging? Are they in the 3-4 heading dead zone? Do they include date entities? Those three checks take maybe an hour. Fix what you find and watch citation rates over the next 60-90 days.
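If you'd rather script that audit than eyeball ten pages, here is a minimal sketch, assuming you already have each page's HTML as a string. Everything in it is my own illustrative heuristic, not the classifier used in Indig's analysis: the hedge word list, the date regexes, the choice to count every h1-h6 tag as a heading, and the names `audit_page` and `AuditResult` are all hypothetical.

```python
import re
from dataclasses import dataclass
from html.parser import HTMLParser

# Hypothetical hedge heuristics: a question-mark opener or one of these words.
HEDGE_RE = re.compile(
    r"\b(?:[Yy]ou might|may|might|could|[Pp]erhaps|[Mm]aybe|wondering|struggles?)\b"
)
MONTHS = ("January|February|March|April|May|June|July|August|"
          "September|October|November|December")
# Hypothetical "date entity" patterns; real entity extraction would be richer.
DATE_PATTERNS = [
    rf"\b(?:{MONTHS})\s+\d{{4}}\b",  # e.g. "March 2026"
    r"\bQ[1-4]\s+\d{4}\b",           # e.g. "Q1 2026"
    r"\b\d{4}-\d{2}-\d{2}\b",        # e.g. "2026-03-15"
]


class _PageParser(HTMLParser):
    """Counts h1-h6 tags, collects all text, and captures the first <p>."""

    def __init__(self):
        super().__init__()
        self.heading_count = 0
        self.text_parts = []
        self.first_paragraph = None
        self._in_p = False
        self._p_buf = []

    def handle_starttag(self, tag, attrs):
        if tag in {"h1", "h2", "h3", "h4", "h5", "h6"}:
            self.heading_count += 1
        elif tag == "p" and self.first_paragraph is None:
            self._in_p = True
            self._p_buf = []

    def handle_endtag(self, tag):
        if tag == "p" and self._in_p:
            self._in_p = False
            self.first_paragraph = "".join(self._p_buf).strip()

    def handle_data(self, data):
        self.text_parts.append(data)
        if self._in_p:
            self._p_buf.append(data)


@dataclass
class AuditResult:
    heading_count: int
    in_dead_zone: bool        # 3-4 headings, the range the study flags
    declarative_opener: bool  # first sentence is neither a question nor hedged
    has_date_entity: bool     # at least one date pattern anywhere in the text


def audit_page(html: str) -> AuditResult:
    parser = _PageParser()
    parser.feed(html)
    parser.close()
    opener = parser.first_paragraph or ""
    # Take the first sentence of the first paragraph as the "opener".
    first_sentence = re.split(r"(?<=[.!?])\s+", opener, maxsplit=1)[0]
    hedged = first_sentence.endswith("?") or bool(HEDGE_RE.search(first_sentence))
    full_text = " ".join(parser.text_parts)
    return AuditResult(
        heading_count=parser.heading_count,
        in_dead_zone=3 <= parser.heading_count <= 4,
        declarative_opener=bool(first_sentence) and not hedged,
        has_date_entity=any(re.search(p, full_text) for p in DATE_PATTERNS),
    )


if __name__ == "__main__":
    sample = (
        "<h1>AI citation audit</h1>"
        "<p>You might be wondering why citations dropped?</p>"
        "<h2>Setup</h2><h2>Results</h2><h2>Next steps</h2>"
        "<p>Updated Q1 2026.</p>"
    )
    print(audit_page(sample))
    # AuditResult(heading_count=4, in_dead_zone=True, declarative_opener=False, has_date_entity=True)
```

The sample page fails two of the three checks: its four headings land in the dead zone and its intro opens with a hedged question. The script only flags the generic 3-4 heading range; it doesn't encode the per-vertical optimal ranges, so you'd still compare the heading count against your own vertical by hand.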

The bigger strategic question is whether you should be optimizing for AI citation at all yet, or whether Google still represents enough of your traffic to stay focused there. I think for most sites it's both, and the good news in this data is that several of the AI-positive signals (specific numbers, date stamps, clear structure) aren't going to hurt your Google performance either. The real tension is in hedging language and entity strategy, where Google and AI seem to want genuinely different things.

Honestly, the thing that sticks with me most is the heading dead zone. Not because it's the most impactful signal, but because it means the default blog post template that everyone uses is probably the worst structure for AI citation. And nobody is going to want to hear that, because restructuring 500 blog posts sounds terrible. But the data is the data.

By Notice Me Senpai Editorial