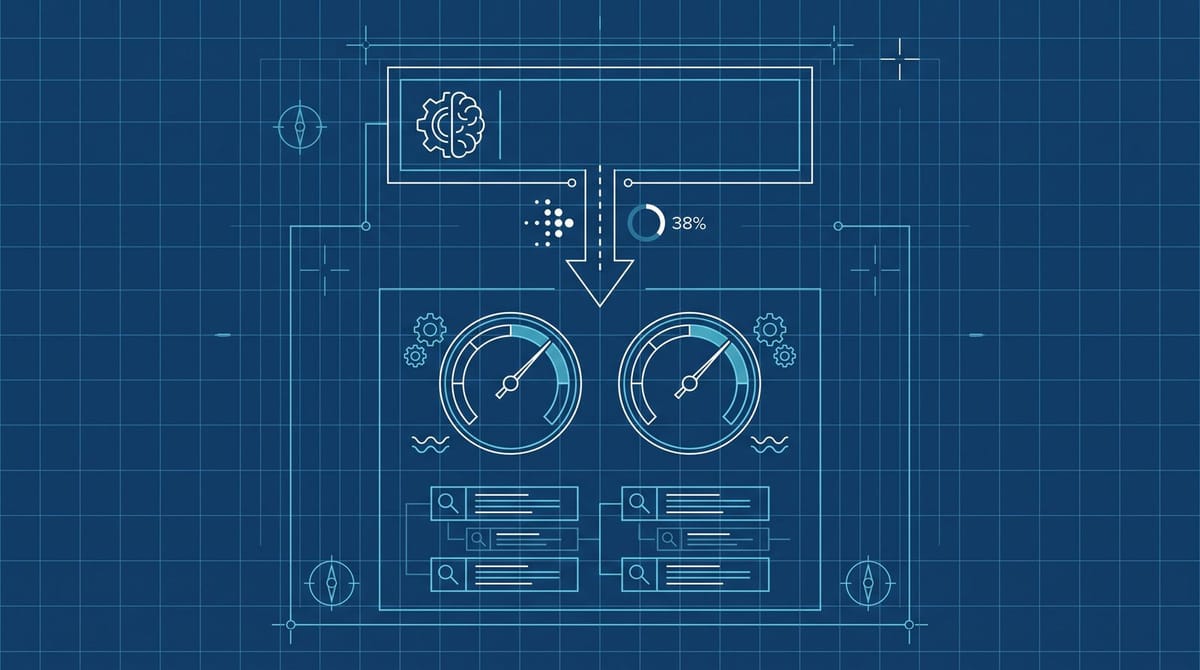

The First Randomized AI Overviews Study Pinned the Click Loss at 38%

Saharsh Agarwal (Indian School of Business) and Ananya Sen (Carnegie Mellon) ran the first randomized field experiment on Google's AI Overviews, recruiting 1,065 US desktop Chrome users between January and February 2026. Hiding AI Overviews raised outbound clicks from 0.38 to 0.61 per search, which means showing them cut clicks by 38% on triggered queries. Survey responses on satisfaction and information quality were nearly identical between the two conditions.

The full draft was posted to SSRN this month, still pre-peer-review, but pre-registered with the AEA RCT Registry before any data was collected. Search Engine Journal's writeup is what surfaced it for most marketers this week, and it lays out the design and headline numbers in full.

Why this study lands harder than the previous data

Pew's March 2025 study, which tracked 68,879 actual searches across 900 KnowledgePanel adults, found a 47% click drop on AIO-triggered queries. Ahrefs documented a 58% CTR reduction for top-ranking pages by February 2026. The 38% number from this new paper is actually the smallest click-loss figure in the recent stack, not the largest.

What makes this one different is the design. Pew is observational. Ahrefs is observational. Both can be picked apart on the grounds that AIO is correlated with the kind of query that already had a low click rate to begin with. Google's standard rebuttal to every previous click-loss study has been some flavor of "you're confusing correlation with causation."

This study removes that argument. A randomized field experiment with a Chrome extension that strips AIO out of the SERP for the treatment group means the only difference between groups is the AI Overview itself. The 38% number is causal, not correlational. From what I've seen, that's the first time anyone has been able to pin a number to AIO with that kind of methodological cleanliness.

The other meaningful detail: zero-click searches went from 54% to 72% when AIO appeared. That is an 18-point jump, or roughly a 33% relative rise in zero-click searches on triggered queries. AIO appeared on 42% of all queries during the study window, which is more than double Pew's 18% trigger rate from a year earlier. The trigger rate is what publishers should actually be tracking, not just the click-loss percentage.
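To see why the trigger rate matters as much as the click-loss figure, it helps to multiply the two. The sketch below reproduces the relative zero-click rise from the numbers above, then estimates a blended click loss across all searches. That blended figure is my own back-of-envelope assumption, not a result from the paper: `blended_click_loss` is a hypothetical helper that assumes AIO and non-AIO queries share the same baseline click rate, which may not hold. Pairing Pew's 18% trigger rate with this study's 38% loss is likewise only an illustration.

```python
# Figures quoted above from the Agarwal and Sen study.
zero_click_without_aio = 0.54
zero_click_with_aio = 0.72
trigger_rate = 0.42             # share of queries that showed an AI Overview
click_loss_on_triggered = 0.38  # relative click loss on those queries

# Relative rise in zero-click searches when an AI Overview appears.
relative_zero_click_rise = (
    zero_click_with_aio - zero_click_without_aio
) / zero_click_without_aio


def blended_click_loss(trigger_rate: float, loss_on_triggered: float) -> float:
    """Rough share of all clicks lost across every search.

    ASSUMPTION (illustrative only): triggered and non-triggered queries
    have the same baseline click rate, and non-triggered queries lose nothing.
    """
    return trigger_rate * loss_on_triggered


print(f"Relative zero-click rise: {relative_zero_click_rise:.0%}")
print(f"Blended loss at a 42% trigger rate: "
      f"{blended_click_loss(trigger_rate, click_loss_on_triggered):.0%}")
print(f"Blended loss at an 18% trigger rate: "
      f"{blended_click_loss(0.18, click_loss_on_triggered):.0%}")
```

Under those assumptions, the same 38% per-query loss works out to roughly 16% of all clicks at a 42% trigger rate versus about 7% at 18%. The per-query number didn't move; the exposure did.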

What the satisfaction data actually says (and what it doesn't)

This is the part most coverage glosses over.

The study didn't find that users prefer AI Overviews. It found that users with AIO removed reported "nearly identical" levels of satisfaction, information quality, and ease of finding information. Over 95% of the Hide-AIO group didn't even notice that the extension was modifying their results.

The implication cuts both ways for SEOs.

For Google, it weakens any argument that AIO is meeting a clear unmet user need. If users can't tell when it's there, the "value to users" narrative gets harder to sell to regulators, advertisers, and publishers asking why their organic traffic vanished.

For publishers, it removes the "give it time, users will come back" thesis. Users aren't returning to source pages because they got their answer in the AIO and were satisfied. They didn't have a quality complaint to begin with. The click is gone for the same reason snippet answers killed the click for "what time is it in Tokyo" queries a decade ago. There is no behavioral wave coming back to bail anyone out.

Where this leaves Google's "bounce clicks" defense

For most of the past year, Google's public position has been that AIO is removing low-value "bounce clicks" (visits where someone clicks a result, grabs a fact, and leaves). Liz Reid repeated that defense most recently to Search Engine Land, and Google itself has leaned on the same explanation in response to the rising click-loss data. We covered the missing supporting data when she ran the line publicly the last time.

The bounce-clicks framing assumes that the clicks AIO removes are clicks that wouldn't have produced engaged sessions anyway. The Agarwal and Sen study doesn't measure session depth or dwell time directly, but two pieces of its data sit awkwardly next to the bounce thesis.

First, outbound click volume per search rose by about 60% (0.38 to 0.61) when AIO was removed. Bounce clicks should have a low ceiling, and an increase of that size implies a much larger pool of suppressed clicks than just the bouncers.
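The 38% and 60% figures describe the same gap measured from different baselines, which is easy to lose track of. A minimal sketch of the arithmetic, using only the two per-search click rates quoted from the study (`pct_change` is just an illustrative helper name):

```python
clicks_with_aio = 0.38     # outbound clicks per search, AI Overview shown
clicks_without_aio = 0.61  # outbound clicks per search, AI Overview hidden


def pct_change(old: float, new: float) -> float:
    """Relative change from old to new, expressed as a fraction of old."""
    return (new - old) / old


# Baseline = no AIO: how much do clicks fall when the overview is shown?
drop_when_shown = pct_change(clicks_without_aio, clicks_with_aio)

# Baseline = AIO shown: how much do clicks rise when the overview is hidden?
gain_when_hidden = pct_change(clicks_with_aio, clicks_without_aio)

print(f"Drop when AIO is shown:  {drop_when_shown:.1%}")
print(f"Gain when AIO is hidden: {gain_when_hidden:.1%}")
```

This prints a drop of about 37.7% and a gain of about 60.5%. Both are correct; the gain is the relevant one when asking how many clicks AIO is holding back.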

Second, sponsored click volume didn't change between conditions. If AIO were specifically suppressing low-quality, low-intent clicks, you'd expect a parallel effect on the ads in those SERPs, since ads sit above the fold for most commercial queries. The fact that paid clicks held steady while organic dropped 38% suggests the suppression isn't really about query intent quality. It's about real estate and answer placement.

I think the bounce-clicks talking point survives the year. But it survives as a talking point, not as a data position. The next regulator or antitrust filing that cites Agarwal and Sen will be hard to wave off with a Google blog post that has no numbers in it.

The audit that answers the question for your own site

The headline 38% doesn't tell you what's happening on your specific URLs. Here's a 20-minute version of the audit that does.

Step 1: Pull GSC queries from the last 90 days, segmented by AIO trigger. Use the SERP-feature filter in Search Console (or a third-party tool like Ahrefs or AlsoAsked that flags AIO presence). Sort by impression volume. The 50 highest-impression queries that trigger AIO are your actual exposure surface.

Step 2: For each, measure CTR delta against the same query 12 months ago. If you don't have a 12-month historical comparison, use a non-AIO query of similar intent and length as a control. The Agarwal and Sen 38% is an average across the study's participants and queries. Yours could land at 10% or 70%, depending on category. The Sistrix category breakdown we wrote up earlier shows the spread.

Step 3: Sort the worst-affected queries by revenue per session, not by traffic. A lot of teams optimize the wrong queries here. A query that lost 60% of its clicks but generates $2 sessions is less urgent than a query that lost 25% but generates $80 sessions. Recovery work should follow the revenue, not the percentage drop.

Step 4: For the top revenue-loss queries, write the answer the AIO is summarizing. Not the page Google is currently lifting from. The actual answer, in two to three quotable sentences, placed in the first 80 words of the page. The same study found AIO sits in the top SERP position 85% of the time. If you want the citation back, you need to be the source it pulls from. That means writing the page like you're trying to win the snippet itself.
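Steps 1 through 3 can be roughed out in a few lines once the data is in one place. The sketch below is one possible shape, not a prescribed method. The `QueryRow` fields are hypothetical: they assume you have already joined your Search Console export with an AIO flag from whatever tool you use and with revenue per session from your analytics. The revenue-loss estimate (CTR drop times current impressions times revenue per session) is my own simplification.

```python
from dataclasses import dataclass


@dataclass
class QueryRow:
    # Hypothetical row shape after joining GSC data, an AIO flag, and revenue.
    query: str
    triggers_aio: bool
    impressions: int          # last 90 days
    clicks: int               # last 90 days
    impressions_prior: int    # same window, 12 months ago
    clicks_prior: int         # same window, 12 months ago
    revenue_per_session: float


def ctr(clicks: int, impressions: int) -> float:
    return clicks / impressions if impressions else 0.0


def audit(rows: list[QueryRow], top_n: int = 50) -> list[dict]:
    # Step 1: keep AIO-triggering queries, highest impressions first.
    exposed = sorted(
        (r for r in rows if r.triggers_aio),
        key=lambda r: r.impressions,
        reverse=True,
    )[:top_n]

    report = []
    for r in exposed:
        ctr_now = ctr(r.clicks, r.impressions)
        ctr_prior = ctr(r.clicks_prior, r.impressions_prior)
        # Step 2: relative CTR change against the same query a year ago.
        ctr_delta = (ctr_now - ctr_prior) / ctr_prior if ctr_prior else 0.0
        # Step 3: estimate lost sessions at today's impressions, then price them.
        lost_clicks = max(0.0, (ctr_prior - ctr_now) * r.impressions)
        report.append({
            "query": r.query,
            "ctr_delta": ctr_delta,
            "est_revenue_loss": lost_clicks * r.revenue_per_session,
        })

    # Rank by money lost, not by percentage drop.
    report.sort(key=lambda row: row["est_revenue_loss"], reverse=True)
    return report


# Made-up rows mirroring the $2 vs. $80 example above.
rows = [
    QueryRow("cheap query", True, 10_000, 200, 10_000, 500, 2.0),
    QueryRow("valuable query", True, 10_000, 300, 10_000, 400, 80.0),
    QueryRow("no aio query", False, 50_000, 2_500, 50_000, 2_500, 10.0),
]
report = audit(rows)
for row in report:
    print(f'{row["query"]}: CTR {row["ctr_delta"]:+.0%}, '
          f'est. loss ${row["est_revenue_loss"]:,.0f}')
```

With these invented rows, the query that lost 25% of its CTR ranks above the one that lost 60%, because its estimated revenue loss is about $8,000 against about $600. That ordering is the list to carry into Step 4.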

This isn't 2023's "answer-engine optimization" advice. It's narrower. AIO doesn't reward general topical authority the way classic ranking did. It rewards a quotable, declarative passage on the exact question being asked. From what I've seen in the wild, the pages winning AIO citations now read more like Stack Overflow answers than blog intros.

There's still a click problem after all of that. Even cited sources get a click only 1% of the time, per Pew. But a 1% click rate on searches where an AIO appeared is materially better than 0%, and the gap between cited and uncited compounds across thousands of queries.

Honestly, I don't think the long-term answer for publishers is to win the AIO citation. The economics don't work at 1% click rates. The long-term answer is the unsexy one: more direct traffic, more email, more first-party audience, and less dependence on a SERP that is actively reducing your share of it. The Agarwal and Sen number just makes the math on that decision a lot more obvious.

Notice Me Senpai Editorial