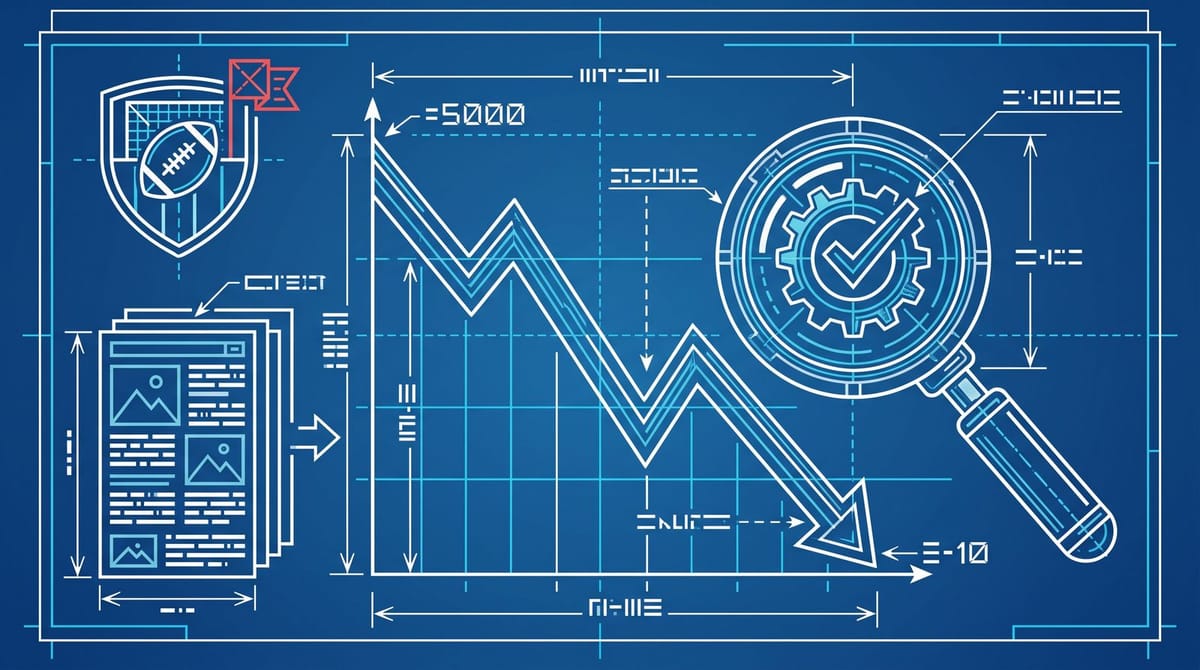

An r/SEO Sports Site Lost 99.8% of Its Impressions Following AI SEO Advice

An r/SEO operator posted this week that one of their sports sites dropped from roughly 5,000 daily Google impressions to about 10, a 99.8% collapse, after following standard AI SEO advice. The audit pattern they described maps cleanly onto Google's scaled content abuse policy: high-volume AI-written pages, templated programmatic structures, and almost no editorial review. Average recovery time on sites that get flagged is around six months.

The 99.8% figure is doing a specific job. It's not an influencer prediction. It's not a vendor's case study with a logo on it. It's a working operator posting a chart of their own site falling off a cliff, with enough detail in the comments to reverse-engineer what they actually did. I think that's worth more than most of what's currently being written about AI SEO.

What "AI SEO advice" actually meant in this case

The pattern looks like this. Pick a high-traffic sports vertical (in the OP's case, betting tips and stats pages broken out by team and league). Generate hundreds of programmatic pages using an AI assistant. Layer in some FAQ schema, a few "related searches" widgets, and ship.

If you've followed SEO chatter this year, you've seen a hundred LinkedIn carousels recommending almost exactly this stack. The OP wasn't doing something exotic. They were doing what most of the AI SEO advice circulating in 2026 explicitly tells operators to do. The threads selling AI SEO courses don't usually print the part where Google's spam team shows up about eight weeks later.

Google's documentation is more direct about this than most operators seem to register. Their spam policies page names "using generative AI tools or other similar tools to generate many pages without adding value for users" as a textbook example of scaled content abuse. The policy took effect in March 2024 alongside that month's core update, and enforcement has only sharpened since.

The phrase that matters in there is "without adding value." Google's separate guidance on AI content says they don't penalize AI itself, which is true in roughly the same way "we don't penalize email, we penalize spam" is true. Operationally, the difference between AI content that ranks and AI content that gets flagged is whether a human added something Google can't already scrape from the rest of the web. Most of the AI SEO advice on Twitter is silent on that part.

Why this single case study matters more than another AI content opinion piece

Aggregate numbers exist. A breakdown of March 2026 scaled content abuse penalties put the typical traffic loss between 50% and 80%, with niche information sites publishing 500+ AI pages taking 60% to 80% drops. Affiliate review sites came in at 40% to 70%. News aggregators sat between 50% and 75%.

This case is more useful than any of those buckets because it's a single operator showing the actual mechanism. They followed the playbook, they kept the chart, and they posted it without sanding off the embarrassment. That's the kind of ground truth Search Engine Land's measurement piece keeps gesturing at: the part where buying impressions back through ads doesn't help, because the issue isn't visibility, it's whether Google trusts your domain to surface at all.

From what I've seen, the operators who survived the worst of this update weren't necessarily smarter or better-resourced. They had something cheap and unglamorous in their workflow: one human pass per page that wasn't just spell-check. That single edit, the thing AI SEO advice keeps treating as optional, seems to be roughly the line between a 5% traffic dip and a 99.8% one.

A 30-minute audit before your next AI content sprint

If you've published more than 50 AI-assisted pages in the last six months, run this before you ship the next batch. It's not exhaustive. It catches the specific patterns that triggered the recent wave of penalties.

Pull 10 random pages and count how many sentences are paraphrased from the top 5 Google results for the same query. If more than half are restatements, you don't have content, you have a synonym pass. Google's classifier seems to identify that faster than most operators expect. The fix isn't rewriting harder. It's adding something that didn't exist anywhere else on the web before you published: a number, an interview quote, a screenshot, an original test.
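The restatement count above can be roughed out in code. This is a minimal sketch, not a reproduction of whatever Google's classifier does: it assumes you've already saved the text of your page and of the top 5 results, and it uses plain word overlap (Jaccard similarity) as a stand-in for "paraphrased". The function names and the 0.6 threshold are my own assumptions. A real synonym pass will slip under word overlap, so treat the result as a floor, not a ceiling.

```python
import re

def split_sentences(text):
    # Naive splitter: break after ., ! or ? when followed by whitespace.
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

def tokens(sentence):
    # Lowercased word set; word order and punctuation are ignored.
    return set(re.findall(r"[a-z0-9']+", sentence.lower()))

def restated_share(page_text, competitor_texts, threshold=0.6):
    """Fraction of page sentences whose word overlap with any competitor
    sentence meets the threshold (0.6 is an assumed cutoff)."""
    competitor_sets = [
        tokens(s) for text in competitor_texts for s in split_sentences(text)
    ]
    page_sentences = split_sentences(page_text)
    if not page_sentences:
        return 0.0
    hits = 0
    for sentence in page_sentences:
        own = tokens(sentence)
        if not own:
            continue
        best = max(
            (len(own & other) / len(own | other) for other in competitor_sets if other),
            default=0.0,
        )
        if best >= threshold:
            hits += 1
    return hits / len(page_sentences)
```

If `restated_share` comes back above 0.5 on most of your 10 sampled pages, that's the "synonym pass" problem described above, and the sentences it didn't flag are worth a manual read too.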

Check your publish velocity. Sustained output above 10 articles per day is, in practice, a strong "automated content" signal regardless of whether you actually used AI. If you can't realistically explain how a team your size produced 300 long-form articles last month, neither can Google.
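A quick way to check this against your own CMS data, sketched under some assumptions: you can export one publish date per article, and "sustained" means a rolling 7-day window. The window length is my choice, not a documented Google threshold. The 10-per-day limit is the figure from the paragraph above.

```python
from collections import Counter
from datetime import date, timedelta

def sustained_velocity_windows(publish_dates, per_day_limit=10, window_days=7):
    """Return the start date of every rolling window whose average daily
    output exceeds per_day_limit. window_days=7 is an assumed definition
    of 'sustained'."""
    counts = Counter(publish_dates)
    if not counts:
        return []
    first, last = min(counts), max(counts)
    flagged = []
    day = first
    while day + timedelta(days=window_days - 1) <= last:
        total = sum(counts[day + timedelta(days=i)] for i in range(window_days))
        if total / window_days > per_day_limit:
            flagged.append(day)
        day += timedelta(days=1)
    return flagged
```

An empty list doesn't mean you're safe. It only means this one signal isn't obviously lit.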

Look at author bios. The breakdown of penalized sites in March showed that 73% of them had no author attached to the content. No name, no credentials, no LinkedIn link. Adding bios after the fact won't recover a flagged site, but the absence of them on a site that's still ranking is the first thing I'd fix on a Tuesday morning.
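Finding the pages with no author attached is easy to script. The sketch below assumes you already have each page's HTML in hand, and it only looks for two common markers: a `<meta name="author">` tag and a link with `rel="author"`. Plenty of sites mark authors in other ways (JSON-LD, a visible byline with no markup), so a page landing on this list is a prompt to look, not proof that the byline is missing.

```python
from html.parser import HTMLParser

class AuthorFinder(HTMLParser):
    """Sets has_author when a meta author tag or rel="author" link is seen."""

    def __init__(self):
        super().__init__()
        self.has_author = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        name = (attrs.get("name") or "").lower()
        rel = (attrs.get("rel") or "").lower().split()
        if tag == "meta" and name == "author" and attrs.get("content"):
            self.has_author = True
        if tag == "a" and "author" in rel:
            self.has_author = True

def pages_without_author(pages):
    """pages maps URL -> HTML string; returns URLs with neither marker."""
    missing = []
    for url, html in pages.items():
        finder = AuthorFinder()
        finder.feed(html)
        if not finder.has_author:
            missing.append(url)
    return missing
```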

Pull a Search Console export from before and after every major content sprint. The dashboard view smooths drops. A query-level export shows the specific clusters that died. In most cases I've seen, the collapse is concentrated in 20 to 50 templated patterns. Killing those URLs (a 301 to the consolidated page where one exists, a 410 where nothing replaces them) and consolidating the value into a smaller number of edited pages is the recovery pattern that actually works. A 302 is the wrong tool here, because it tells Google the move is temporary. Improving each thin page individually almost never does the job.
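To find which templated patterns died, you can group the before and after exports by URL shape. This sketch assumes you've already loaded each export into a dict of URL to impressions. The grouping rule (first path segment kept, everything deeper collapsed to `*`) and the 90% drop cutoff are assumptions of mine that happen to suit pages broken out by team and league, so adjust both to your own URL structure.

```python
from collections import defaultdict
from urllib.parse import urlparse

def url_template(url):
    # /tips/arsenal -> /tips/* ; /stats/epl/arsenal -> /stats/*/*
    parts = [p for p in urlparse(url).path.split("/") if p]
    if not parts:
        return "/"
    return "/" + "/".join([parts[0]] + ["*"] * (len(parts) - 1))

def collapsed_templates(before, after, min_drop=0.9):
    """before/after map URL -> impressions for equal-length periods.
    Returns (template, before_total, after_total, drop) rows for templates
    that lost at least min_drop of their impressions, biggest first."""
    totals = defaultdict(lambda: [0, 0])
    for url, impressions in before.items():
        totals[url_template(url)][0] += impressions
    for url, impressions in after.items():
        totals[url_template(url)][1] += impressions
    report = []
    for template, (was, now) in totals.items():
        if was == 0:
            continue  # nothing to lose, so no drop to report
        drop = (was - now) / was
        if drop >= min_drop:
            report.append((template, was, now, round(drop, 3)))
    return sorted(report, key=lambda row: row[1], reverse=True)
```

The templates at the top of that report are the candidates for consolidation. Anything that held its impressions is probably where the edited, surviving version of the site already lives.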

The recovery problem nobody's pricing in

Six months. That's the average for a flagged site to claw back any meaningful share of its previous traffic. For a small operator, six months of organic traffic at zero, while the AdSense or affiliate revenue resets to near nothing, is often enough to fold the project entirely. The "Google penalties are recoverable" framing undersells how brutal that timeline is for anyone running on a shoestring.

And honestly, recovery isn't a guarantee. Sites that were essentially built on the practice (where the AI pages make up 80%+ of the URL inventory) often don't recover at all. The accepted playbook is to consolidate aggressively, kill most of the thin URLs, rebuild authority around a much smaller number of resources, and accept that the previous traffic ceiling is gone.

That last part is the one nobody on LinkedIn wants to mention. If you got to 5,000 daily impressions on the back of 1,500 AI pages, the version of your site that survives the audit looks like 80 to 100 actually-edited pages, and it caps lower than where you were. It's a real business in a way the first one wasn't, but it's a smaller one.

There's a related dynamic worth keeping in mind: the small operators who are quietly winning right now aren't running away from AI. They're using AI to build tools and calculators that earn links and citations, then using AI again only to draft supporting content that an editor heavily rewrites. Two uses of AI per page, both invisible from the outside. The operators getting destroyed are using it once, visibly, and skipping the second pass.

What the r/SEO chart actually proves

The 99.8% number is the receipt for an argument the SEO industry has been having since the policy launched. It's not whether AI content "works." It's whether the advice circulating in the AI SEO ecosystem reflects what Google is actually penalizing, or whether it reflects what the people selling courses need to be true.

From this single chart, I'd say the gap between those two is wider than most operators are pricing in, and probably worth one boring Tuesday afternoon of audit before reading another Twitter thread about a 14-day programmatic SEO sprint.

Notice Me Senpai Editorial