Aiso's 90-Prompt Test Found ChatGPT Fans Out Commercial Queries 25x Harder

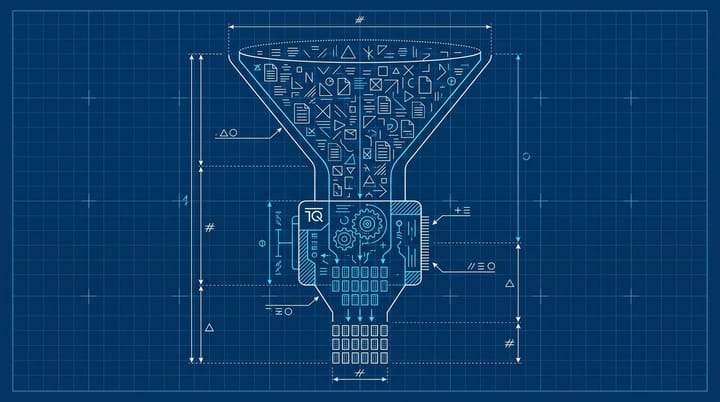

ChatGPT triggered web searches on 78.3% of commercial prompts and just 3.1% of informational ones in a 90-prompt study from Aiso published April 30, 2026. That roughly 25x gap means the pages winning ChatGPT citations are comparison articles, best-of shortlists, and "X vs Y" guides, not the long authority piece. The roadmap fix takes about an afternoon per topic cluster.

Where the 25x gap actually came from

The Aiso analysis, run by CEO Ben Tannenbaum, classified 90 prompts by intent across beauty, legaltech, and IT. Out of 23 commercial prompts, 18 triggered ChatGPT's fan-out behavior, where the model expands one user query into multiple background searches. Out of 65 informational prompts, only 2 did. The fan-outs themselves leaned heavily commercial: 39 of the 42 expanded queries (92.9%) carried commercial intent.
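The headline percentages can be re-derived from the raw counts quoted above. This is only an arithmetic check on those counts, not Aiso's own analysis code. The variable names are mine. Note that 23 and 65 sum to 88, so two of the 90 prompts are not broken out in the figures quoted here.

```python
# Counts as quoted from the Aiso writeup in the paragraph above.
commercial_total, commercial_fanout = 23, 18
informational_total, informational_fanout = 65, 2
expanded_total, expanded_commercial = 42, 39


def rate(hits: int, total: int) -> float:
    """Return hits/total as a percentage."""
    return 100.0 * hits / total


commercial_rate = rate(commercial_fanout, commercial_total)
informational_rate = rate(informational_fanout, informational_total)
gap = commercial_rate / informational_rate
expanded_share = rate(expanded_commercial, expanded_total)

print(f"commercial fan-out rate:    {commercial_rate:.1f}%")     # 78.3%
print(f"informational fan-out rate: {informational_rate:.1f}%")  # 3.1%
print(f"gap:                        {gap:.1f}x")                 # 25.4x
print(f"commercial share of fan-outs: {expanded_share:.1f}%")    # 92.9%
```

With only 2 informational hits in the denominator, one more or one fewer fan-out would move the gap a lot, which is why "roughly 25x" is the honest framing.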

That is a small sample, and Tannenbaum says so directly. But the directional point is hard to argue with. ChatGPT goes hunting on the open web when it senses a buying decision, and it mostly stays put when it senses a definition request. If you have watched your "what is X" guides quietly stop converting in 2026 while your "best X for Y" pages keep showing up in chat sessions, that is the mechanism.

It also matches what most teams I talk to are already seeing in Search Console exports. Informational impressions are getting absorbed into the answer itself. Commercial impressions are getting routed to a citation that looks like a shortlist.

Indig's 18,012 citations layered on top

Kevin Indig's earlier study at Growth Memo, also covered by Search Engine Land, parsed 1.2 million AI answers and 18,012 verified citations to map the structural picture inside cited pages. Four numbers actually move the needle:

- 44.2% of citations come from the first 30% of a page. The middle and bottom of the page share the remaining 55.8%.

- Heavily cited text averages 20.6% proper nouns. Standard English text averages 5 to 8%. Specific tools, brands, and named people anchor the answer and reduce ambiguity for the model.

- Pages with headlines that directly answer the query (cosine similarity 0.90 or higher) get cited 41% of the time. Loosely related headlines drop to 29%.

- Cited content was roughly 2x more likely to include a question mark, with 78.4% of question-related citations appearing inside H2 and H3 headings rather than body copy.
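To make the headline-similarity number less abstract, here is a minimal sketch of cosine similarity between a headline and a query. One loud assumption: the summary above does not say how Indig computed similarity, and a study like this would most likely use embedding vectors. The sketch uses plain word counts instead, so its scores are not comparable to the 0.90 threshold. It only shows what the metric measures.

```python
import math
import re
from collections import Counter


def tokens(text: str) -> Counter:
    """Lowercase the text and count its alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: str, b: str) -> float:
    """Cosine similarity of two strings over word-count vectors.

    Assumption: a bag-of-words stand-in for whatever vectors the
    original study used. Returns 0.0 if either string has no tokens.
    """
    va, vb = tokens(a), tokens(b)
    dot = sum(va[t] * vb[t] for t in va.keys() & vb.keys())
    norm_a = math.sqrt(sum(c * c for c in va.values()))
    norm_b = math.sqrt(sum(c * c for c in vb.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical query and headlines, for illustration only.
query = "tool a vs tool b for agencies"
direct = "Tool A vs Tool B for Agencies"
loose = "Everything You Need to Know About Project Software"

print(round(cosine_similarity(query, direct), 2))
print(round(cosine_similarity(query, loose), 2))
```

A headline that restates the query scores near 1, and a loosely related one scores near 0. That is the same ordering the study's 41% versus 29% split points at.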

Stack the two studies and the practical content brief writes itself. ChatGPT fan-outs are mostly commercial. Commercial fan-outs find pages that match the query string in the H1, lead with named entities, and answer the comparison in the first third of the body. The "comprehensive guide" structure that won SEO traffic in 2018 actively underperforms here, because the answer the model wants is buried below the introduction, the table of contents, and the throat-clearing.

The other quiet finding from the Indig data: high-performing pages averaged a Flesch-Kincaid grade level of 16, versus 19.1 for lower-performing ones. Slightly simpler prose, not dumber prose. Business-grade clarity beats academic register.
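For anyone who wants to score their own drafts, the Flesch-Kincaid grade level is 0.39 × (words per sentence) + 11.8 × (syllables per word) − 15.59. The sketch below implements that formula. The syllable counter is a crude vowel-run heuristic of my own, so treat the output as a rough comparison between drafts, not as a match for whatever tool Indig used.

```python
import re


def count_syllables(word: str) -> int:
    """Approximate syllables as runs of vowels (heuristic, an assumption)."""
    return max(1, len(re.findall(r"[aeiouy]+", word.lower())))


def fk_grade_from_counts(words: int, sentences: int, syllables: int) -> float:
    """Standard Flesch-Kincaid grade level formula."""
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59


def fk_grade(text: str) -> float:
    """Estimate the grade level of a block of text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text needs at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return fk_grade_from_counts(len(words), len(sentences), syllables)


# Hypothetical drafts: the same idea in a plain and an academic register.
plain = "Tool A costs less. Tool B has more reports."
dense = ("Organizations evaluating comparative documentation "
         "frequently underestimate implementation complexity.")

print(round(fk_grade(plain), 1))
print(round(fk_grade(dense), 1))
```

Shorter sentences and shorter words both pull the score down, which is the lever behind the 16 versus 19.1 gap.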

The honest limitation, which matters

I want to hedge this carefully because the temptation is to overcorrect.

The Aiso sample is 90 prompts in three industries. Indig's sample is much larger, but the analysis is observational. Neither one isolates causation, and both authors say so in the writeups. What holds up across both is the directional shape: commercial intent triggers fan-out, fan-out lands on shortlist-style pages, those pages share a few structural traits.

What does not hold up is the exact citation lift number for any single format. If somebody quotes you a "comparison pages get 25.7% more citations" stat, ask which study, which industry, which prompt set. Most of those numbers come from observational analyses with wide error bars. From what I have seen, the right read is to treat them as priorities, not guarantees. The structural advice is robust enough to act on. The exact lift numbers are not.

Where the 30-minute opportunity lives

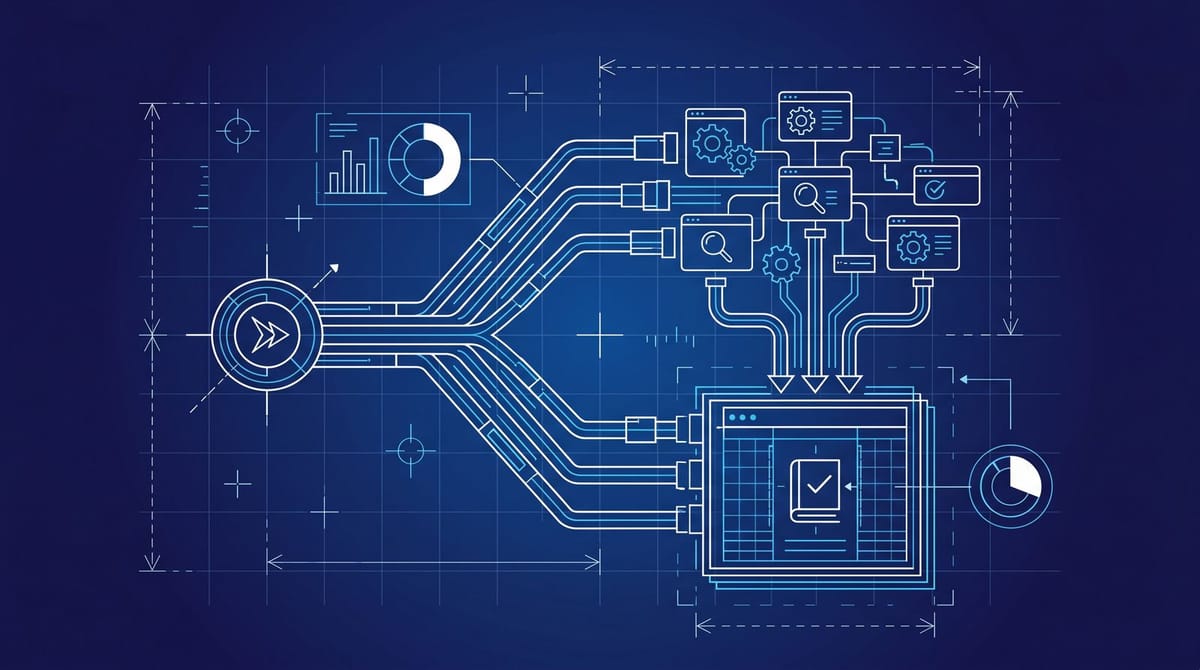

Pull your last 60 days of Search Console data and tag each URL by intent. "What is" pages, definition guides, and explainers go in one bucket. Comparison pages, "best X for Y" pages, alternatives pages, and shortlist pages go in another.

Then run a quick gap audit: count the URLs in each bucket and compare the split. Most marketing teams I have talked to in the last quarter run roughly 70/30 toward informational. Aiso's data suggests ChatGPT's fan-out behavior leans the other way, so the inventory is misaligned with where citations actually live.
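The tagging and the gap audit can be sketched in a few lines. Everything here is an assumption for illustration: the keyword lists, the bucket names, and the sample URLs are mine, and a real export would need the patterns tuned to your own slugs and queries.

```python
import re
from collections import Counter

# Hypothetical keyword patterns; adjust to your own URL and query conventions.
COMMERCIAL = re.compile(
    r"\b(vs|versus|best|top|alternatives?|compare|comparison|shortlist)\b"
)
INFORMATIONAL = re.compile(
    r"\b(what is|what are|how to|guide|definition|explained|explainer)\b"
)


def tag_intent(url_or_query: str) -> str:
    """Bucket a URL slug or query string by intent using keyword matches."""
    # Turn "/blog/what-is-crm" into "blog what is crm" before matching.
    text = re.sub(r"[^a-z0-9]+", " ", url_or_query.lower()).strip()
    if COMMERCIAL.search(text):
        return "commercial"
    if INFORMATIONAL.search(text):
        return "informational"
    return "unclassified"


def intent_mix(urls: list[str]) -> dict[str, float]:
    """Return each bucket's share of the inventory as a percentage."""
    counts = Counter(tag_intent(u) for u in urls)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: round(100 * n / total, 1) for label, n in counts.items()}


# Hypothetical inventory standing in for a Search Console export.
sample_urls = [
    "/blog/what-is-crm",
    "/blog/crm-guide",
    "/blog/how-to-score-leads",
    "/compare/tool-a-vs-tool-b",
    "/pricing",
]
print(intent_mix(sample_urls))
```

Commercial patterns are checked first so that a query like "what is the best CRM" lands in the commercial bucket. Anything tagged "unclassified" is worth a manual look before you trust the split.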

The shortest path to a citation surface is building companion comparison pages for your three highest-trafficked authority guides. You are adding to the guides, not replacing them. The target is a tightly written "[Tool A] vs [Tool B] for [Use Case]" page that lives at the same depth in your IA, with a sub-1000-word body, an H1 that exactly matches the comparison query, an entity-rich opening paragraph, and a clean comparison table near the top of the page.
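Three of those traits are mechanical enough to check before a page ships. The sketch below is a hypothetical pre-publish check, not a tool from either study. It assumes the draft is markdown, detects a table by a line starting with "|", and reads "near the top" as the first 30% of non-empty lines, which is my own threshold.

```python
def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so comparisons ignore formatting."""
    return " ".join(text.lower().split())


def check_comparison_page(
    h1: str, target_query: str, body: str, top_fraction: float = 0.3
) -> dict[str, bool]:
    """Check a markdown draft against three structural traits.

    Assumptions: tables are markdown rows starting with "|", and
    "near the top" means within the first `top_fraction` of lines.
    """
    lines = [ln for ln in body.splitlines() if ln.strip()]
    word_count = len(body.split())
    first_table_line = next(
        (i for i, ln in enumerate(lines) if ln.lstrip().startswith("|")), None
    )
    return {
        "h1_matches_query": normalize(h1) == normalize(target_query),
        "under_1000_words": word_count < 1000,
        "table_near_top": (
            first_table_line is not None
            and first_table_line < top_fraction * len(lines)
        ),
    }


# Hypothetical draft: one intro line, a table, then eight body lines.
draft = "\n".join(
    ["Tool A suits agencies; Tool B suits in-house teams.",
     "| Feature | Tool A | Tool B |"]
    + [f"Body paragraph {i}." for i in range(8)]
)
print(check_comparison_page("Tool A vs Tool B for Agencies",
                            "tool a vs tool b for agencies", draft))
```

The entity-rich opening paragraph is deliberately left out, since judging it reliably needs a named-entity tagger or a human editor.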

The opening paragraph deserves real attention. Indig's data suggests roughly 44% of citations come from the first 30% of the page, and the model is looking for definitions, not for hooks. Lead with the answer, name the entities, label the metric type, and put the question mark in the H2 that introduces the comparison. The marketing instinct to open with a story is the wrong instinct for this surface, even if it still works on Google.

While you are at it, kill any lazy phrasing like "many tools offer this feature." That kind of vague framing is exactly what gets ignored. Indig's data showed cited passages used clear "X is" or "X refers to" framing nearly twice as often as the rest. Definitive language wins, even at the cost of sounding slightly blunter than you would normally write.
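A rough lint pass can surface the vague openers for an editor to rewrite. This is a minimal sketch under my own assumptions: the list of vague quantifiers and the "X is / X refers to" pattern are guesses at what the paragraph above describes, not rules taken from Indig's study.

```python
import re

# Hypothetical patterns: sentences opening with a vague quantifier, and
# sentences opening with a short subject followed by "is" or "refers to".
VAGUE_OPENER = re.compile(r"^(many|some|several|various|most)\b", re.IGNORECASE)
DEFINITIVE = re.compile(r"^[A-Z][\w-]*(?:\s+[\w-]+){0,3}\s+(?:is|refers to)\b")


def classify_sentence(sentence: str) -> str:
    """Label a sentence as 'vague', 'definitive', or 'neutral'."""
    s = sentence.strip()
    if VAGUE_OPENER.match(s):
        return "vague"
    if DEFINITIVE.match(s):
        return "definitive"
    return "neutral"


# Hypothetical sentences for illustration.
for s in [
    "Many tools offer this feature.",
    "Tool A is a project tracker built for agencies.",
    "A fan-out refers to the background searches behind one prompt.",
    "We tested both products for a month.",
]:
    print(f"{classify_sentence(s):<10} {s}")
```

Treat the output as a to-do list, not a verdict. A pattern this simple will miss plenty of vague sentences and flag a few good ones.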

That last part is the part most teams skip. We covered the SE Ranking experiment where a fabricated brand earned 32x the citations because the surrounding content was structurally optimized. The structure does the heavy lifting. Authority follows the structure, not the other way around.

What I would expect to see in 90 days

If a midsized B2B team rebuilt the top 15 commercial topics into comparison pages with the structural traits above, I would expect ChatGPT citation volume to roughly double inside a quarter. That is a guess, but it is the kind of guess I would put real money behind right now because the input cost is low. The brief is small, the page is short, and the H1 is dictated by the query.

I would also expect Search Console clicks to fall slightly on those URLs while overall branded query volume rises. That is the pattern we saw with the randomized AI Overviews study earlier this year. The conversion path moves from a click to an answer mention, and the reporting layer has to adjust to count both.

And to be fair, this is not entirely new. Comparison pages have always converted at higher rates than authority guides. ChatGPT's fan-out behavior is just amplifying a preference that already existed in the funnel, and rewarding it with citation share instead of click share.

Most teams I talk to are still shipping authority guides into a system that increasingly cites comparison pages. The math is not subtle, and the gap is wide enough that even a rough first attempt at restructuring will move citation share inside the quarter.

By Notice Me Senpai Editorial