Google Turned Search Into a Task Engine (and Your SEO Playbook Is Obsolete)

Sundar Pichai went on the Cheeky Pint podcast recently and described a version of Google Search that doesn’t return links. His exact words: “A lot of what are just information-seeking queries will be agentic in Search. You’ll be completing tasks. You’ll have many threads running.”

That was not a roadmap tease. It’s already live.

Google’s AI Mode now books restaurant tables across eight countries. You describe your group size, preferred cuisine, and time. The AI scans multiple booking platforms simultaneously, finds real-time availability, and confirms the reservation. No links served. No website visited, at least not by you.

If your SEO strategy assumes that ranking well means getting clicks, this is the part where the assumption breaks.

From “Here Are 10 Links” to “I Booked the Table”

Google’s agentic search isn’t a concept deck anymore. The restaurant booking feature rolled out on April 10, working with TheFork, SevenRooms, ResDiary, DesignMyNight, Mozrest, Foodhub, and Dojo across eight markets: Australia, Canada, Hong Kong, India, New Zealand, Singapore, South Africa, and the UK.

And restaurants are just the proof of concept. Google has already announced that flights and hotels will follow, with users able to compare prices, browse room photos, and complete bookings entirely within AI Mode. Search becomes the interface where things get done, not the starting point where you leave to get them done somewhere else.

Pichai’s framing was pretty direct. He described Search evolving into “an agent manager in which you’re doing a lot of things... you’re getting a bunch of stuff done.” The emphasis on “bunch” feels deliberate to me. This is multi-threaded. Several agents running tasks in parallel, on your behalf, without you needing to visit a single website.

For most of the SEO industry, this raises a question nobody has a clean answer for yet: what do you optimize for when the user never sees a search results page?

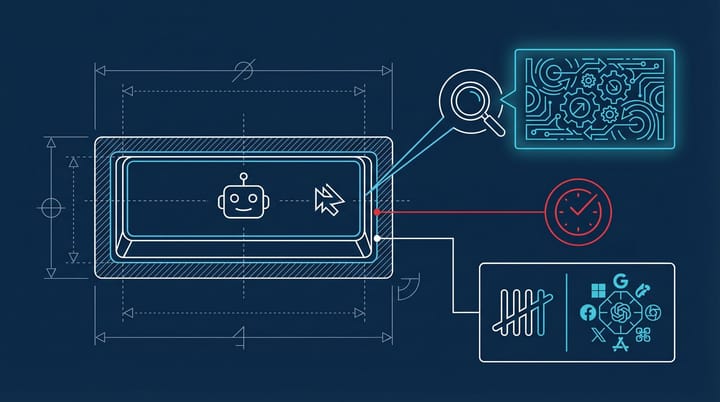

Google-Agent: The Crawler That Doesn’t Follow Your Rules

One technical detail here should genuinely concern you. On March 20, Google added a new user agent called Google-Agent to its official crawler list. Unlike Googlebot, which indexes the web autonomously in the background, Google-Agent only fires when a real user triggers it. A person asks AI Mode to book a table or find a flight, and Google-Agent goes out, browses the relevant platforms, evaluates options, and acts.

The important part: Google-Agent does not respect robots.txt.

Traditional crawlers operate within the boundaries you set. You can block Googlebot from specific directories, throttle crawl rates, hide staging environments. Google-Agent ignores all of that. It’s classified as a “user-triggered fetcher,” which means it behaves more like a browser being operated by a person than a bot following your rules.

The MarkTechPost analysis described this as defining “the technical boundary between user-triggered AI access and search crawling systems.” Two different categories of automated access, two different rule sets. And the one most websites have spent years configuring just stopped applying to a growing category of Google traffic.

If your site relies on robots.txt to control what automated systems can access, that control layer no longer applies to this type of request. The only real fallback, for now, is authentication. If you have content you don’t want AI agents reading, you need actual access controls. Polite instructions in a text file won’t cut it.
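To make that distinction concrete, here is a minimal Python sketch using only the standard library. It contrasts what a cooperating client would conclude from robots.txt with a server-side check that applies to every request. The robots.txt content, the `/private/` path, and the bearer-token scheme are all hypothetical choices for illustration, not anything Google or the article specifies.

```python
import hmac
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def robots_allows(user_agent: str, path: str) -> bool:
    """What a *cooperating* client would conclude from robots.txt.

    Nothing forces a client to run this check; it is advisory.
    """
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return parser.can_fetch(user_agent, path)


def server_allows(path: str, headers: dict[str, str], secret_token: str) -> bool:
    """Server-side check that applies no matter who (or what) is asking."""
    if not path.startswith("/private/"):
        return True  # public content needs no credentials
    supplied = headers.get("Authorization", "")
    expected = f"Bearer {secret_token}"
    # Constant-time comparison avoids leaking the token through timing.
    return hmac.compare_digest(supplied.encode(), expected.encode())
```

The first function only describes a request the client may choose to honor. The second is enforced by the server, which is why it still holds for a fetcher that treats itself as a user’s browser.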

Ranking Stops Mattering When the Agent Can’t Transact

I think most SEO teams are going to react to this by trying to optimize for AI Mode the way they optimized for featured snippets in 2019. Same playbook, new format. And from what I’ve seen, that’s going to work about as well as it did then: partially, and only temporarily.

The deeper shift is structural. When Google’s agent handles the entire transaction, the value of ranking changes. You’re not competing for a click anymore. You’re competing to be the data source the agent pulls from when it acts on behalf of the user.

Some practical specifics:

Structured data becomes non-optional. Schema markup has always been the “nice to have” most teams never get around to implementing properly. In an agent-driven search environment, it’s the primary language the AI uses to understand what your business offers. Restaurant schema, product schema, FAQ schema. The sites without clean structured data are, in my estimation, the first ones the agent skips entirely. Google’s own documentation for AI Mode integration leans heavily on this.
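For readers who haven’t written structured data by hand, here is a rough sketch of what a Restaurant entry might look like, built in Python and rendered as a JSON-LD script tag. The property names (`servesCuisine`, `acceptsReservations`, `address`, and so on) come from the schema.org vocabulary. The business details and the helper names `restaurant_jsonld` and `to_script_tag` are made up for illustration, and which properties Google’s agent actually relies on is an assumption, not something confirmed here.

```python
import json


def restaurant_jsonld(name, cuisine, street, city, country, phone, reservation_url):
    """Build a schema.org Restaurant description as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Restaurant",
        "name": name,
        "servesCuisine": cuisine,
        "telephone": phone,
        # schema.org allows a Boolean, text, or a URL for this property.
        "acceptsReservations": reservation_url,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressCountry": country,
        },
    }


def to_script_tag(data: dict) -> str:
    """Serialize the dict into a JSON-LD <script> tag for the page's HTML."""
    # Escape "</" so the payload cannot close the script element early.
    payload = json.dumps(data, indent=2, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    # Hypothetical business details, for illustration only.
    example = restaurant_jsonld(
        name="Example Bistro",
        cuisine="Italian",
        street="1 Example Street",
        city="London",
        country="GB",
        phone="+44 20 0000 0000",
        reservation_url="https://example.com/reserve",
    )
    print(to_script_tag(example))
```

Generating the markup from the same data that drives your booking or inventory system, rather than hand-editing it per page, is one way to keep it from drifting out of date.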

Your transaction infrastructure matters more than your content. If you’re a restaurant without integration into one of Google’s booking partners (TheFork, SevenRooms, ResDiary, DesignMyNight, and the others listed above), you’re invisible to agentic search. The AI can’t book what it can’t connect to. For e-commerce, the implication seems similar. API-accessible inventory and real-time pricing will probably outperform beautifully written product pages that require a human to navigate them.

Agent-compatibility is the new mobile-friendliness. In 2015, Google began using mobile-friendliness as a ranking signal in mobile search, which effectively demoted sites that weren’t mobile-friendly. I wouldn’t be surprised if something similar happens with agent-readiness within the next 18 months. The Google-Agent user agent string is already published with dedicated IP ranges. Smart teams should be logging those requests now to establish a baseline before volumes increase significantly.

We’ve already seen AI Overviews cut organic clicks by as much as 58% on affected queries. Agentic search takes that a step further by removing the click entirely from certain transactions. The traffic doesn’t decline. It disappears.

The robots.txt Reckoning Most Teams Haven’t Processed Yet

The fact that Google-Agent bypasses robots.txt is, honestly, a bigger deal than most coverage has acknowledged. Mike Stewart, a search marketing professional quoted in Search Engine Journal’s coverage, asked the question that should keep SEO leads up at night: “What sources does the agent trust? Where does your business show up in that decision layer?”

Robots.txt was never designed for a world where AI agents browse your site on behalf of users. It was a gentleman’s agreement between webmasters and crawlers that worked because everyone agreed to play by the same rules. Google-Agent treats it as irrelevant because, from Google’s perspective, it’s acting as a user’s browser, not a search engine crawler. Fair enough, I suppose. But also kind of a lot to absorb if you’ve spent years building access policies around that file.

For sites with sensitive or proprietary content, this means authentication layers are the only reliable control mechanism. For everyone else, it means your site needs to work well for an audience that now includes both humans and autonomous agents. And those two audiences want somewhat different things from you.

Start With Your Server Logs and Your Schema Markup

Check your server logs for the Google-Agent user agent string. Google published the IP ranges on March 20. If you’re already seeing requests, you’re part of this rollout. If you’re not seeing them yet, you will be.
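If you don’t already have log tooling, a first pass can be as small as the Python sketch below. It assumes your server writes the common “combined” access log format and that the agent’s user agent string contains the token `Google-Agent`. The exact string should be checked against Google’s published crawler list, and the sample log lines are invented for illustration. User agent strings can also be spoofed, so treat matches as candidates until you’ve compared the source IPs against the published ranges.

```python
import re
from collections import Counter

# Combined Log Format:
# ip - user [time] "METHOD path protocol" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<ua>[^"]*)"'
)

# Assumption: the token named in the article appears in the user agent string.
AGENT_TOKEN = "Google-Agent"


def agent_hits(lines, token=AGENT_TOKEN):
    """Return parsed fields for every log line whose user agent contains token."""
    hits = []
    for line in lines:
        match = LOG_LINE.match(line)
        if match and token.lower() in match.group("ua").lower():
            hits.append(match.groupdict())
    return hits


def hits_per_day(hits):
    """Count hits per calendar day, e.g. '21/Mar/2026' -> 1."""
    return Counter(hit["time"].split(":", 1)[0] for hit in hits)


# Invented sample lines using documentation-reserved IP addresses.
SAMPLE_LINES = [
    '203.0.113.7 - - [21/Mar/2026:10:15:32 +0000] "GET /menu HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; Google-Agent)"',
    '203.0.113.7 - - [22/Mar/2026:18:02:10 +0000] "GET /book HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; Google-Agent)"',
    '198.51.100.4 - - [22/Mar/2026:18:05:44 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]

if __name__ == "__main__":
    for day, count in sorted(hits_per_day(agent_hits(SAMPLE_LINES)).items()):
        print(day, count)
```

To run it against a real file, pass an open file handle instead of `SAMPLE_LINES`, since `agent_hits` only needs an iterable of lines.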

Then audit your structured data. Run your key pages through Google’s Rich Results Test. Every page that returns errors or warnings is a page the agent will likely skip when it’s trying to act on a user’s behalf. I’d focus on product pages and location pages first, since those are the categories Google is targeting with agentic features right now.
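Before pasting pages into the Rich Results Test one at a time, a quick local pre-check can flag the obvious gaps. The sketch below pulls JSON-LD blocks out of a page’s HTML with Python’s standard library and reports missing fields. The `EXPECTED_FIELDS` table is my own assumption about a sensible minimum, not Google’s requirement list, and this is a complement to the official tool rather than a replacement for it.

```python
import json
from html.parser import HTMLParser

# Assumed minimum fields per type; adjust to your own needs.
EXPECTED_FIELDS = {
    "Restaurant": ["name", "address", "telephone"],
    "Product": ["name", "offers"],
}


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json">."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def audit_html(html: str) -> list[str]:
    """Return a list of human-readable problems found in the page's JSON-LD."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    problems = []
    if not extractor.blocks:
        problems.append("no JSON-LD block found")
    for raw in extractor.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            problems.append(f"invalid JSON: {exc.msg}")
            continue
        items = data if isinstance(data, list) else [data]
        for item in items:
            if not isinstance(item, dict):
                continue
            kind = item.get("@type")
            if not isinstance(kind, str):
                continue  # skip untyped or multi-typed items in this sketch
            for field in EXPECTED_FIELDS.get(kind, []):
                if not item.get(field):
                    problems.append(f"{kind}: missing {field}")
    return problems
```

Calling `audit_html(page_source)` on each key template gives you a short worklist to fix before running the full validation.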

From what I’ve seen, the sites that adapted early to featured snippets, to mobile-first indexing, to AI Overviews, tend to be the same sites that treat each new search interface as a data problem rather than a content problem. That pattern seems to hold here too. The question isn’t whether your articles are well-written. It’s whether your data is structured enough for an AI agent to act on it without needing clarification.

And if your entire SEO strategy is still built around getting humans to click blue links, that strategy probably has a shorter shelf life than anyone on your team is comfortable admitting.