Google Concedes AI Overviews and AI Mode Run on Separate Stacks

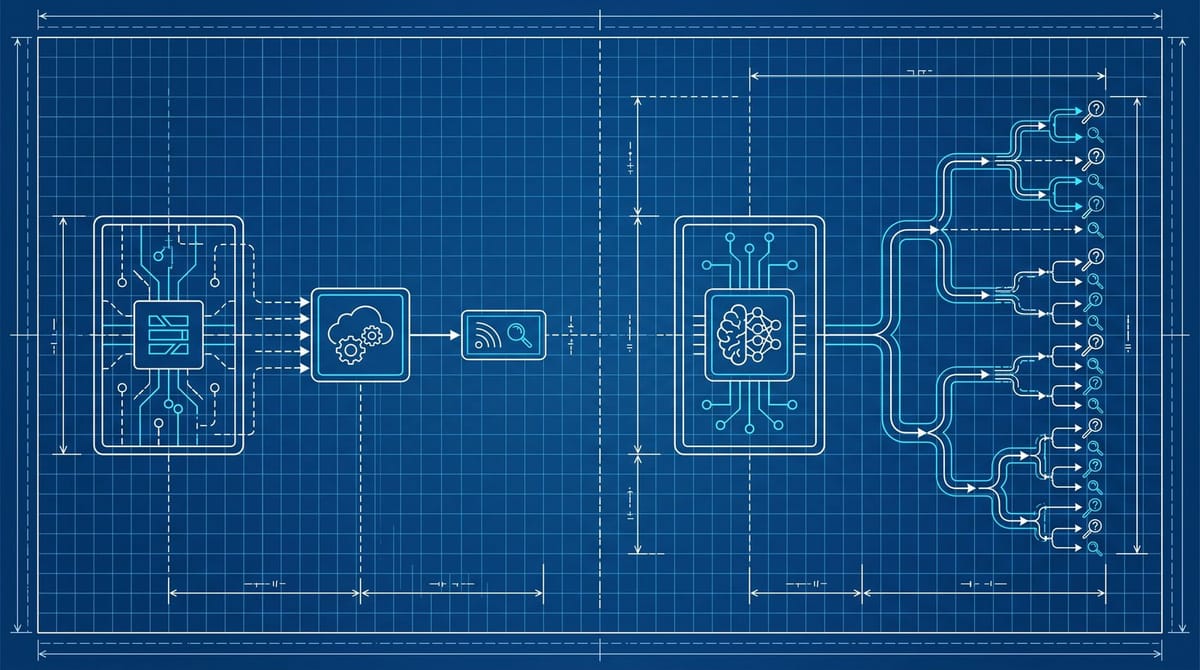

Google confirmed on May 4 that AI Overviews and AI Mode run on separate infrastructure. AI Overviews sits on top of the classic search index with a small fan-out and a summary layer. AI Mode runs on its own bigger platform, with longer queries, longer reasoning, and a much larger fan-out. A page that wins AIO citations is not guaranteed to surface in AI Mode, and a page strong in AI Mode is routinely missing from AIO.

The framing came out of the latest Search Off The Record podcast and was flagged by Search Engine Roundtable on May 4. It is the closest Google has come to admitting it now operates two SERP-equivalent surfaces with non-identical ranking. AI Overviews is described as a feature on top of the existing retrieval and ranking system, with a few fan-out queries layered in to assemble the summary. AI Mode is described as its own platform that uses search but runs on new infrastructure.

Two systems, two ranking floors

That distinction matters, and one Ahrefs finding from February shows why: only 38% of pages cited in AI Overviews still rank in the top 10 organic results for the same query, down from 76% seven months earlier. The link between citation and ranking is decaying, and the systems are not coupled as tightly as most agencies still assume when they price audits. If you're not running two separate visibility checks, you're paying for one signal and missing the other.

I think most teams will keep assuming the two surfaces work the same way for at least another quarter. From what I've seen in our own tracking, that assumption is already costing visibility. Pages that cleared the top 10 a year ago and still rank there are showing up in AIO maybe 30% of the time and in AI Mode less than 15% of the time, while a handful of newer pages with strong individual passages are getting cited in AI Mode without ever cracking the classic top 10. The two surfaces are picking different content, and the gap is widening.

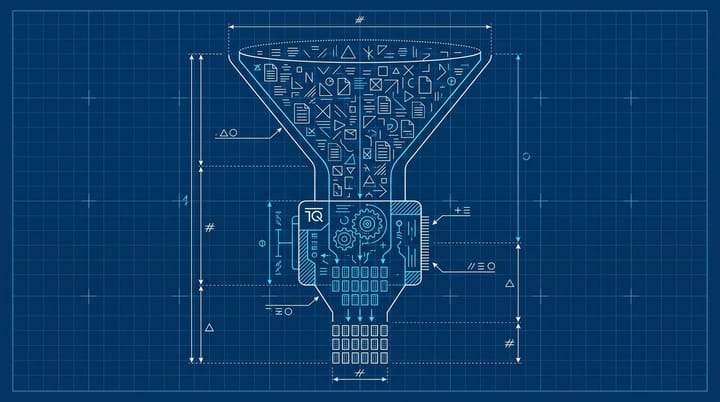

The fan-out is doing different work in each system

In AI Overviews, the fan-out is small. The model rewrites the query, fires a handful of related searches, and stitches the results into a one-paragraph summary above the blue links. Citations get pulled from the same retrieval set the classic SERP uses, which is why AIO citations still skew toward pages that already rank for the query, even as fewer of them sit on page one.

In AI Mode, the fan-out is the whole point. Digiday's explainer of the technique describes the system breaking a question into subtopics, firing simultaneous queries across each one, then ranking chunks (not whole pages) for inclusion in the synthesis layer. Google's own AI Mode update post notes that Deep Search, the long-form variant, can issue hundreds of queries in a single run.

The practical implication: AI Mode rewards passages that answer a sub-question completely on their own. AIO rewards pages that already rank well in classic search and match the SERP's intent cluster. SE Ranking's fake-brand experiment last week showed AI Mode ranking a fabricated brand #1 for 90% of branded queries on the strength of well-structured passages alone. The chunking layer is more forgiving of new sites with strong individual sections than the classic ranking layer ever was. It is also less stable, which is the trade-off.
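To make "ranking chunks, not pages" concrete, here is a toy model of fan-out with passage-level selection. It is a sketch under loud assumptions, not Google's system: the sub-queries are hand-written (a real system generates them with a model), relevance is plain term overlap, and the URLs and page contents are hypothetical. What it shows is the mechanism described above: each sub-query is answered by the single best section from any page, so one strong H2 block on an otherwise thin page can still win the citation.

```python
import re

# Toy model of query fan-out with passage-level (chunk) selection.
# Assumptions: sub-queries are hand-written, and relevance is simple term
# overlap standing in for whatever scoring AI Mode actually uses.

def terms(text: str) -> set[str]:
    """Lowercased word tokens as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def passage_score(sub_query: str, passage: str) -> float:
    """Share of the sub-query's terms that the passage covers (0.0 to 1.0)."""
    q = terms(sub_query)
    return len(q & terms(passage)) / len(q) if q else 0.0

def fan_out(sub_queries: list[str], pages: dict[str, list[str]]) -> dict[str, tuple[str, int, float]]:
    """For each sub-query, return (url, section_index, score) of the best passage.

    Each page is a list of sections (e.g. one per H2). Passages compete
    individually, so a single strong section can win even if the rest of
    its page is irrelevant to the question.
    """
    results = {}
    for sq in sub_queries:
        results[sq] = max(
            ((url, i, passage_score(sq, text))
             for url, sections in pages.items()
             for i, text in enumerate(sections)),
            key=lambda hit: hit[2],
        )
    return results

# Hypothetical pages: an established guide and a newer post with one strong section.
pages = {
    "https://example.com/big-guide": [
        "Our company history and mission statement.",
        "Pricing plans overview for teams.",
    ],
    "https://example.org/new-post": [
        "How to rotate API keys safely: revoke old keys after deploying new ones.",
        "Unrelated notes about our office move.",
    ],
}
sub_queries = ["rotate api keys safely", "pricing plans for teams"]

for sq, (url, idx, score) in fan_out(sub_queries, pages).items():
    print(f"{sq!r} -> {url} section {idx} (score {score:.2f})")
```

In this toy run each sub-query is won by one section of a different page, which is the behavior that makes self-contained H2 sections matter more in AI Mode than in a whole-page ranking.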

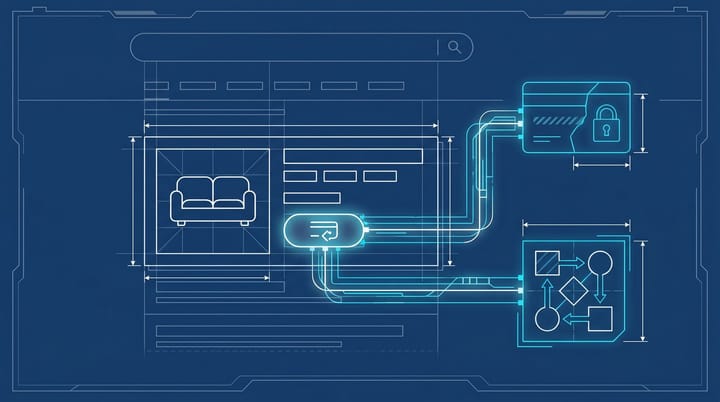

The measurement gap nobody is closing

Google still does not break out AI Mode impressions or clicks in Search Console. AIO clicks are only partly broken out, and AI citation share is a number Google has explicitly chosen not to expose. That is what made Bing's preview of the same metric earlier this month so embarrassing for Google.

What you actually have access to:

- Classic blue link clicks (visible in Search Console)

- AIO impressions and clicks (partly visible, likely underreported)

- AI Mode visibility (invisible unless you pay for a third-party tool)

The result is a measurement gap that hides which system is actually sending the click. Press Gazette's 2026 trends report put global publisher referral traffic from Google down roughly a third year over year, and ALM Corp's analysis of the antitrust filing pinned the AIO click decline at 58%. The worst case for an SEO team right now is celebrating a position-1 ranking that no longer pulls a click, with no way to tell whether AI Mode citations are filling the gap.

A 30-minute audit to find the gap on your own pages

This week, pick five of your highest-value money pages and the main query each one targets. Then:

- Run each query in classic Google Search and screenshot the AI Overview. Record whether your page is cited and where.

- Run the same query in AI Mode (the dedicated tab, not the AIO box). Record whether your page is cited and the position of the citation in the response.

- For queries where AIO cites you but AI Mode doesn't, mark the page for a passage rewrite. Identify the paragraph the AI Overview cites, leave it intact, and rewrite the rest of the page so each H2 section can stand alone as a complete answer.

- For queries where AI Mode cites you but AIO doesn't, check classic ranking. If you're outside the top 10, AIO is unlikely to pick you up, and the AI Mode citation is effectively your only meaningful inbound from Google for that query.

- For queries where neither surface cites you, that page is dark to Google's AI layer entirely. Either rewrite it into a passage-led structure or accept that the page is now classic-SERP-only.
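Once the checks are done by hand, the triage in the checklist above is simple enough to script so the same rules get applied every week. A minimal sketch in Python, assuming you log each query with two citation flags and its classic rank; the `AuditRow` fields, bucket names, and example queries are hypothetical, and the "cited on both" bucket is not part of the checklist.

```python
from dataclasses import dataclass
from typing import Optional

# Minimal triage sketch for the five-page audit. Field names and bucket
# labels are hypothetical; fill the rows from your own manual checks.

@dataclass
class AuditRow:
    query: str
    cited_in_aio: bool
    cited_in_ai_mode: bool
    classic_rank: Optional[int]  # None if the page isn't found in classic results

def triage(row: AuditRow) -> str:
    """Map one query's audit result to the action in the checklist."""
    if row.cited_in_aio and not row.cited_in_ai_mode:
        return "passage_rewrite"
    if row.cited_in_ai_mode and not row.cited_in_aio:
        outside_top_10 = row.classic_rank is None or row.classic_rank > 10
        return "ai_mode_only_outside_top10" if outside_top_10 else "ai_mode_only_in_top10"
    if not row.cited_in_aio and not row.cited_in_ai_mode:
        return "dark_to_ai_layer"
    return "cited_on_both"  # not covered by the checklist; no action implied

rows = [
    AuditRow("best crm for startups", True, False, 4),
    AuditRow("crm pricing comparison", False, True, 18),
    AuditRow("crm migration checklist", False, False, 7),
]
for r in rows:
    print(r.query, "->", triage(r))
```

Keeping the rules in one function makes the weekly reruns comparable: a page moving between buckets is a real change in citations, not a change in how you judged it.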

Benchmarks to aim for: an AIO citation rate above 30% of money-page queries and an AI Mode citation rate above 50%. Anything under 20% on either surface means your pages are losing visibility, not that Google's coverage is thin.
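The benchmark is a plain share of audited queries, so it is easy to check against the results of the audit. A sketch, assuming one boolean per query for each surface; the 30% and 50% targets and the 20% floor come from the text, while the function names and output wording are hypothetical.

```python
# Benchmark check sketch. Targets and floor are from the text; names are hypothetical.
TARGETS = {"aio": 0.30, "ai_mode": 0.50}
FLOOR = 0.20

def citation_rate(cited: list[bool]) -> float:
    """Share of audited money-page queries where the surface cited you."""
    return sum(cited) / len(cited) if cited else 0.0

def grade(surface: str, cited: list[bool]) -> str:
    """Compare one surface's citation rate with the floor and its target."""
    rate = citation_rate(cited)
    if rate < FLOOR:
        return f"{surface}: {rate:.0%} (below {FLOOR:.0%} floor, losing visibility)"
    if rate > TARGETS[surface]:
        return f"{surface}: {rate:.0%} (above {TARGETS[surface]:.0%} target)"
    return f"{surface}: {rate:.0%} (between floor and target)"

print(grade("aio", [True, False, True, False, False]))
print(grade("ai_mode", [False, False, True, False, False]))
```

With only five queries, each one moves the rate by 20 points, so a single AI Mode citation out of five sits exactly on the floor. That granularity is one more reason to treat a single run as a snapshot.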

One caveat. AI Mode rankings shift faster than classic ranking, so a single audit is a snapshot, not a baseline. I'd rerun the same five queries weekly for a month before drawing conclusions about which pages are actually trending in AI Mode and which ones got lucky on the day you ran the test.
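A month of weekly reruns gives four AI Mode snapshots per query, which is enough to separate steady citations from one-off ones. A minimal sketch, assuming you record one boolean per weekly run; the "cited in at least 3 of 4 weeks" cutoff is an illustrative assumption, not a threshold from the text, and the queries are hypothetical.

```python
# Weekly rerun sketch. The min_weeks=3 cutoff is an assumption for illustration.

def consistency(weekly_citations: dict[str, list[bool]], min_weeks: int = 3) -> dict[str, str]:
    """Label each query by how many weekly AI Mode runs cited your page."""
    labels = {}
    for query, weeks in weekly_citations.items():
        hits = sum(weeks)
        if hits >= min_weeks:
            labels[query] = "consistent"
        elif hits == 0:
            labels[query] = "never cited"
        else:
            labels[query] = "intermittent (possibly a lucky snapshot)"
    return labels

# Four weekly AI Mode checks per query (hypothetical data).
history = {
    "best crm for startups": [True, True, False, True],
    "crm pricing comparison": [True, False, False, False],
    "crm migration checklist": [False, False, False, False],
}
for query, label in consistency(history).items():
    print(f"{query}: {label}")
```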

The optimization split is probably permanent

Google framed this on the podcast as a temporary infrastructure split: AI Mode is new and runs on a bigger platform, and the implication was that the two might converge later. I don't buy it. Folding AI Mode's large fan-out engine back onto the existing index is a much harder engineering problem than running the two systems in parallel, and there is no obvious business reason for Google to merge them when the AI Mode interface keeps more of the click inside Google's walled garden anyway. Plan for two ranking systems for the rest of 2026.

The strategy that survives both surfaces is the boring one. Pages that win citations across both systems are pages where every H2 section is a complete, self-contained answer with a verifiable source, written for a real practitioner question rather than a keyword. Everything else is going to be one or the other, and the surface you're optimizing for stops being a single SERP and starts being a portfolio decision.

If I'm picking one to over-index on right now, it's AI Mode. The chunking-led ranking is more forgiving for newer pages, the citation share is growing faster than AIO's, and the measurement gap means almost none of your competitors are auditing it yet. That last part is the cheapest edge in SEO this year.

Notice Me Senpai Editorial