The Semrush AI Content Study Is Only as Reliable as GPTZero (Which Isn't Great)

Semrush dropped a stat on April 1 that's already getting quoted everywhere, per Search Engine Land's coverage: human-written content holds Google's #1 position about 80% of the time, while purely AI-generated content shows up there only 10% of the time. That works out to roughly 8x, and it reads like a clean verdict on the last two years of "just spin it up with AI" content strategies.

I think the headline is misleading. Not because the numbers are fake, but because the entire study rests on one detection tool, GPTZero, and GPTZero is famously wobbly. If you care about SEO strategy, you should care about what's underneath the number more than the number itself.

What Semrush actually measured

Semrush analyzed 42,000 blog pages across 20,000 tracked keywords in November 2025, then classified each page as human, AI, or mixed using GPTZero. At position 1, the split came in at roughly 80.5% human, 10% AI, with mixed making up the rest. AI-classified pages bunched more heavily into positions 2 through 4 before thinning out again further down page one.

They also ran a separate survey of 224 SEO professionals. That part is where things get weird. 87% of those SEOs said their content is either fully human or heavily human-led. 72% said AI content ranks at least as well as human content in their own experience. The ranking data and the survey point in different directions, and the study doesn't really reconcile them. Semrush's own Head of Organic Content Strategy, Ana Camarena, said in the report that "AI helps us move faster, and speed does matter. But not enough to justify lowering quality standards." Fine as a slogan. Not really an answer to the contradiction sitting in the data.

The part nobody's asking about

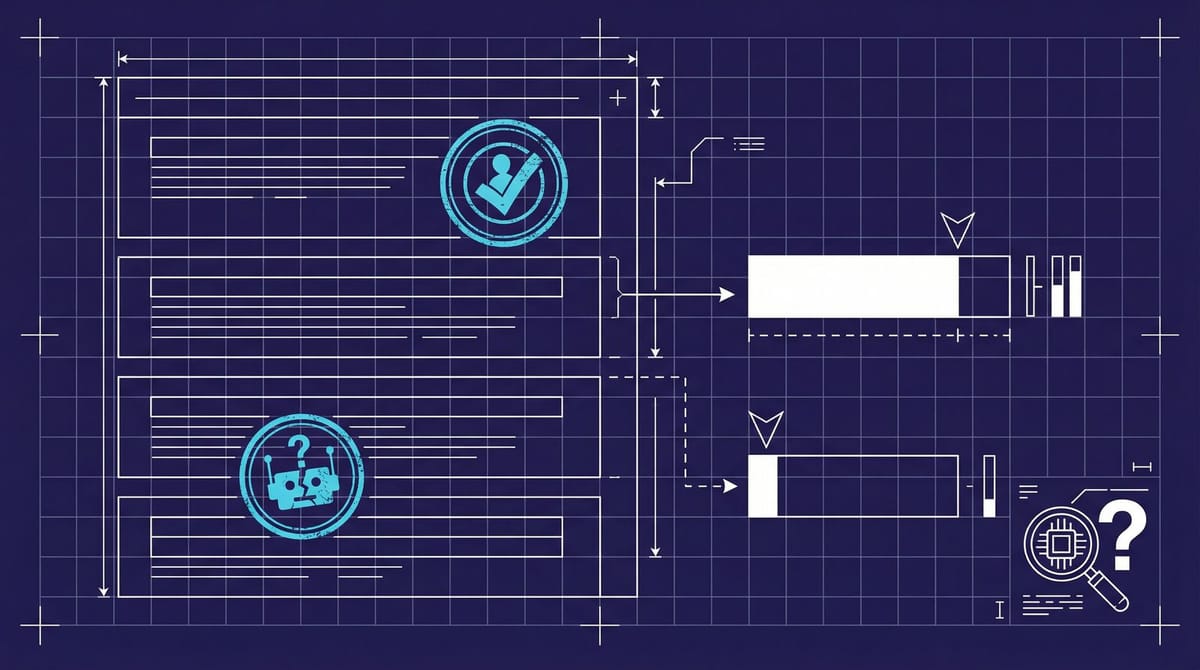

Here's what the whole stat depends on: a classifier that has to decide, on every single page, whether a human or a machine wrote it. That classifier is GPTZero. And GPTZero has a sensitivity problem anyone working in SEO should probably know about by now.

A peer-reviewed preliminary study on PubMed tested GPTZero on medical content and reported a 35% false negative rate. Over a third of the AI-generated text slipped through and got classified as human. A Stanford SCALE review flagged unreliability specifically when classifying human-written essays, and the researchers there recommended educators never rely on the tool alone.

Now apply that to a study of 42,000 blog posts. If 35% of the AI pages look human to GPTZero, a meaningful chunk of the "human-written winners" at position 1 could be AI content the detector simply missed. The Semrush report acknowledges that AI detection is imperfect, but the headline 8x number doesn't get adjusted for it, so it lands in SEO Twitter as a verdict when it's actually a correlation with a very noisy definition of "human."

On paper, that sounds like a nitpick. But the entire 8x claim lives or dies on that definition, and if something like a third of the AI pages are being labeled as human, the gap between the two groups gets a lot less impressive in my read.
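To put a rough number on that, here's a back-of-the-envelope sketch of the adjustment. It leans on assumptions that are mine, not Semrush's: that the 35% false negative rate from the medical-content study carries over to blog posts, that every missed AI page landed in the "human" bucket rather than "mixed", and that no human page was mislabeled as AI. The function name is made up for illustration.

```python
def adjust_for_false_negatives(human_share, ai_share, false_negative_rate):
    """Estimate true shares if some AI pages were mislabeled as human.

    Assumes every missed AI page was counted as human and that no
    human page was mislabeled as AI. Both are simplifications.
    """
    # The detected AI share is only the fraction the detector caught.
    true_ai = ai_share / (1.0 - false_negative_rate)
    missed_ai = true_ai - ai_share
    # Move the missed AI pages out of the human bucket.
    true_human = human_share - missed_ai
    return true_human, true_ai


observed_human, observed_ai = 0.805, 0.10  # position-1 shares reported in the study
fnr = 0.35  # from the medical-content study; may not transfer to blog posts

adj_human, adj_ai = adjust_for_false_negatives(observed_human, observed_ai, fnr)
observed_ratio = observed_human / observed_ai
adjusted_ratio = adj_human / adj_ai

print(f"observed ratio: {observed_ratio:.2f}x")
print(f"adjusted ratio: {adjusted_ratio:.2f}x")
```

Under those assumptions the 8x gap shrinks to a little under 5x. That's still a gap, but it's a very different headline, and the real figure could sit on either side of it depending on how GPTZero actually behaves on blog content.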

The survey contradicts the ranking data, and that matters

The gap I keep circling back to is the one inside Semrush's own report. Their ranking data says AI content gets flattened at position 1. Their survey of working SEOs says roughly the opposite: 72% of them report that AI content ranks as well as or better than human content in the work they're actually shipping.

One of these groups is probably right and the other is probably measuring the wrong thing. My guess is the survey is closer to reality, because it reflects what actually happens inside real workflows: AI drafts, human editing, citations added, tone smoothed out, the obvious phrases cut. Semrush itself reports that 64% of teams use human-led AI-assisted workflows. That's exactly the kind of content a detector will shrug at. It'll get flagged as "mixed" or "human" depending on how heavily a person edited it.

Which means the study isn't really measuring human vs AI at all. It's measuring how much a human touched the text before publishing. That's a different question, and it's one we already sort of knew the answer to. Google's ranking systems have never cared who typed the words. They care whether the result is useful, original, and supported by the kind of signals editors produce and pure generators don't.

What a working SEO should probably take from this

I'm not saying the study is useless. There's a real signal inside it. At position 1, human-heavy content still wins, and pure-AI content rarely climbs above position 4 before it stalls. That seems to be directionally correct, even if GPTZero is mislabeling a chunk of the sample.

The practical takeaway is closer to this:

If your current workflow is "prompt to publish" with no human editing layer, you have a ceiling, and the ceiling is somewhere around position 4.

Post hits position 4, sits there, never climbs. That's the pattern I'd watch for in your own Search Console. If you've got a shelf of posts parked between positions 3 and 6 on high-intent keywords, unedited AI fingerprints are the most likely culprit, and a proper human editing pass (not a rewrite, just a pass) is the cheapest fix to try before you start blaming backlinks or schema.

Here's a benchmark anyone can run this week: pull your top 50 AI-drafted posts from the last six months, count how many are parked between positions 3 and 6, and take the ten best candidates through a proper editorial pass. Add first-person framing. Add one real-world source per 500 words. Cut the paragraphs that read like a summary of a summary. Re-check rankings in four weeks. My bet is that about six of the ten move up at least two spots, and the other four either stay flat or were always going to rank where they rank. If none of them move, the problem probably isn't the AI layer, it's the topic or the backlink profile.
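If you'd rather script the bookkeeping than eyeball a spreadsheet, the benchmark can be sketched roughly like this. Everything here is hypothetical: the `Post` fields stand in for whatever your Search Console export contains, the sample data is made up, and picking the "best candidates" by impressions is my assumption, not a rule.

```python
from dataclasses import dataclass


@dataclass
class Post:
    url: str
    position: float   # average position, e.g. from a Search Console export
    impressions: int


def parked_posts(posts, low=3.0, high=6.0):
    """Return posts whose average position sits between low and high, inclusive."""
    return [p for p in posts if low <= p.position <= high]


def pick_candidates(posts, n=10):
    """Pick up to n parked posts for an editing pass, highest impressions first.

    Ranking by impressions is an assumption; swap in your own idea of 'best'.
    """
    ranked = sorted(parked_posts(posts), key=lambda p: p.impressions, reverse=True)
    return ranked[:n]


def count_improved(before, after, min_gain=2.0):
    """Count URLs whose position improved by at least min_gain spots.

    before and after map URL -> average position; lower numbers are better.
    """
    return sum(
        1
        for url, old in before.items()
        if url in after and old - after[url] >= min_gain
    )


# Made-up sample data, for illustration only.
posts = [
    Post("/a", 4.2, 900),
    Post("/b", 1.8, 5000),
    Post("/c", 5.6, 300),
    Post("/d", 9.1, 1200),
]
candidates = pick_candidates(posts, n=2)
before = {p.url: p.position for p in candidates}
after = {"/a": 2.1, "/c": 5.0}  # hypothetical re-check four weeks later
improved = count_improved(before, after)
print([p.url for p in candidates], improved)
```

With real data you'd load the top 50 posts, keep `n=10`, and fill `after` from a fresh export four weeks on. Ten posts is a tiny sample, so treat the count as a nudge rather than proof.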

Why I would be careful quoting the 8x number in a deck

If you're putting this study on a slide for a client or a boss, I'd strip the 8x and use the underlying pattern instead. Something like: "Pages with a visible human editing layer hold position 1 more often than pages that show no editorial signal." That's defensible. The 8x, sitting on a tool with a 35% miss rate, isn't.

The funny thing about a study like this is that it tells working SEOs what most of them already believe, which is honestly part of why it's getting shared so widely. We all wanted the data to say human editing matters. And to be fair, it does. But there's a real difference between a study that confirms what you already think and a study that proves it, and the Semrush report is mostly the first kind dressed up to look like the second.

I don't love that. It's still a useful piece of research and I'll probably end up citing parts of it for the rest of the year. Just not the 8x. Not alone, anyway, and not without saying whose tool decided which pages were human.

By Notice Me Senpai Editorial