Wharton Priced AI-Crawler Blocking at 7% of a Publisher's Weekly Visits

A Wharton-Rutgers working paper tracked 30 news publishers from November 2022 through May 2024 and found that blocking AI crawlers cuts a publisher's weekly visits by roughly 7%. The drop showed up in three independent traffic datasets and held inside a Comscore household panel, meaning real humans, not bot pings. Most of the loss came from direct visits, not organic search.

The paper, "The Impact of LLMs on Online News Consumption and Production" by Hangcheng Zhao at Rutgers Business School and Ron Berman at Wharton, was first posted on SSRN in January and revised on April 21, 2026. The 7% figure is the headline number from the broader 500-publisher sample. The earlier draft, which limited the analysis to the top 30 news domains, reported a 23% total decline and a 14% drop in human-only traffic. So 7% is the floor for newsrooms that decided to block GPTBot, ClaudeBot, PerplexityBot, Google-Extended, and Bytespider in the last two years. The ceiling, depending on how big you are, looks worse.

The 7% number is the conservative read

Three datasets, three slightly different methodologies, same direction. SimilarWeb showed a 7.4% decline in weekly visits, significant at the 1% level. Semrush put it at 6.9%, significant at the 10% level. The Comscore household panel, which measures human browsing behavior rather than synthetic traffic estimates, came in at 6.5%. PPC Land's coverage noted that the Comscore confirmation is the part that should sting, because it rules out the obvious counterargument that you're just losing bot impressions on your traffic dashboard.

Zhao and Berman used a staggered difference-in-differences design and ran three placebo exercises to check that the result wasn't a coincidence. From what I've seen of working papers in this space, that's a more careful methodology than most "AI is killing publisher traffic" claims rest on. The number is real. The interesting fight is over what the number means.
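For readers unfamiliar with the design, difference-in-differences compares how visits changed at publishers that blocked against how they changed at publishers that did not, over the same window. The sketch below is a plain two-group, two-period version with invented toy numbers. It is not the authors' staggered estimator, and none of the figures come from the paper.

```python
import math

def did_log_effect(treated_pre, treated_post, control_pre, control_post):
    """Two-by-two difference-in-differences on log visits.

    Returns the change in log visits for the treated group minus the
    change for the control group. Small values approximate a
    percentage change.
    """
    treated_change = math.log(treated_post) - math.log(treated_pre)
    control_change = math.log(control_post) - math.log(control_pre)
    return treated_change - control_change

# Hypothetical weekly visits (in thousands), invented for illustration.
effect = did_log_effect(
    treated_pre=1000, treated_post=900,   # publisher that blocked
    control_pre=1000, control_post=970,   # comparable publisher that did not
)
pct_change = math.exp(effect) - 1  # convert log points to a percentage
print(f"DiD estimate: {effect:.4f} log points ({pct_change:.1%})")
```

The control group matters here: the blocking publisher lost 10% of its visits in this toy setup, but part of that decline also hit the publisher that never blocked, so only the difference is attributed to the block.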

The mechanism is brand exposure, not lost referrals

The decline concentrated in direct visits, not organic search. That detail is doing a lot of work in this paper. It means this isn't AI Overviews chewing up your SERP clicks. That's a separate ongoing fight (Google's Liz Reid is still defending the "bounce clicks" framing for AI Overview impact, with mixed evidence). The mechanism the SSRN paper isolates is upstream. Readers stopped seeing the publisher named inside ChatGPT, Claude, and Perplexity answers, and over six weeks, the muscle memory of typing the URL or clicking a saved bookmark thinned out.

That's a brand decay story, not a referral story. And it's the part the licensing pitch from publishers fundamentally misread. The argument was: AI assistants are extracting our content, so cutting them off forces a payment conversation. The data says: AI assistants were also a top-of-funnel awareness machine, and cutting them off shrinks the audience that types your name.

What you actually traded for that 7%

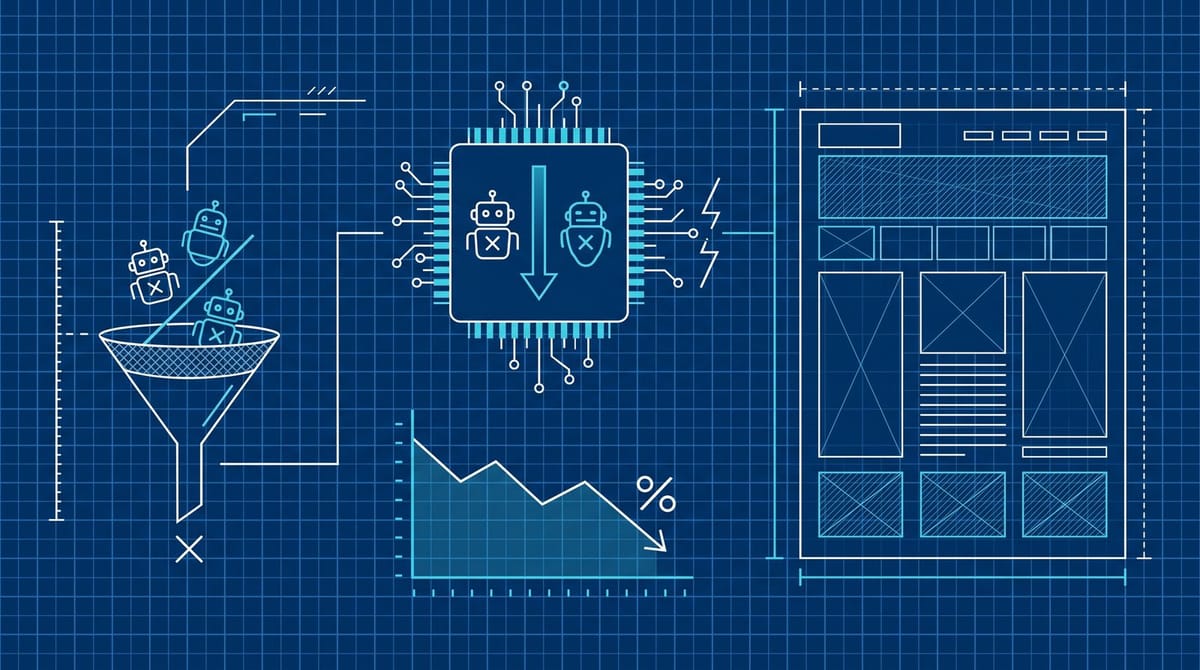

Roughly 75% of the study's top 30 publishers had blocked at least one major AI crawler by 2024, and adoption crossed 60% across the top 500 by mid-year. The reasoning was consistent across newsroom statements: protect content from training, force the licensing conversation, recoup margin lost to LLM summarization.

Two years on, the trade looks asymmetric. Cloudflare's crawl-to-click data shows GPTBot crawls roughly 1,276 pages for every referral it sends back. ClaudeBot is worse: about 23,951 pages crawled per referral. So the bandwidth math, on paper, says blocking is rational. But that calculation only holds if the referral channel is the entire value of being indexed. The Wharton paper says it isn't. The exposure inside the AI answer matters even when no link gets clicked, because the brand name shows up and the reader returns later through a non-tracked channel.
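To put those two ratios on a common scale, the arithmetic below converts each into referrals per 100,000 pages crawled. The ratios are the Cloudflare figures quoted above. The function name and the 100,000-page scale are illustrative choices, not anything Cloudflare reports.

```python
# Pages crawled per referral, as quoted from Cloudflare above.
CRAWL_TO_REFER = {"GPTBot": 1276, "ClaudeBot": 23951}

def referrals_per_pages(pages_per_referral, pages=100_000):
    """Expected referrals for a given number of crawled pages."""
    return pages / pages_per_referral

for bot, ratio in CRAWL_TO_REFER.items():
    rate = referrals_per_pages(ratio)
    print(f"{bot}: {rate:.1f} referrals per 100,000 pages crawled")

# How many times more pages ClaudeBot crawls per referral than GPTBot.
relative = CRAWL_TO_REFER["ClaudeBot"] / CRAWL_TO_REFER["GPTBot"]
print(f"ClaudeBot crawls about {relative:.1f}x more pages per referral")
```

Either way the click channel is thin, which is why the paper's argument rests on exposure rather than on click-through.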

Meanwhile, training-pay deals are still a small-club outcome: The New York Times, News Corp, Axel Springer, and a handful of others. For most publishers, blocking a crawler doesn't open a licensing door; it just silences your name inside the assistant.

The block doesn't even stop citations

This part should be the most embarrassing for publishers who blocked and then assumed they'd vanished from AI outputs. Citation analysis from earlier this year showed that AI assistants still surface blocked publishers in answers, mostly through cached content, third-party scrapers, and aggregators that weren't blocked. BuzzStream's tracking of which news sites block AI crawlers found citation rates barely correlate with robots.txt status. So you can be paying the 7% cost while still appearing in the answers your block was supposed to stop.

And the bots themselves are becoming harder to fence off. OpenAI shipped a new OAI-AdsBot user agent earlier this year that doesn't inherit your existing GPTBot block, and the company hasn't published an IP range file. Each new agent variant requires a fresh robots.txt entry. Most publishers haven't kept up.
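One way to see why a GPTBot rule does not cover a new agent is to run a sample robots.txt through a parser. The sketch below uses Python's standard `urllib.robotparser`. The robots.txt content is a made-up example, `is_blocked` is a hypothetical helper, and real crawlers may interpret rules differently from this parser.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that blocks two crawlers by name.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /
"""

def is_blocked(robots_txt, agent, path="/"):
    """True if this robots.txt disallows `agent` from fetching `path`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(agent, path)

for agent in ("GPTBot", "ClaudeBot", "OAI-AdsBot"):
    status = "blocked" if is_blocked(SAMPLE_ROBOTS, agent) else "allowed"
    print(agent, status)
```

Rules are matched per user-agent token, so an agent with no matching group and no `User-agent: *` fallback is treated as allowed.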

Where the math gets messier (the part the abstract softens)

The paper's broader 500-site sample showed mixed effects. Some smaller publishers with niche audiences saw flat or even slightly positive traffic after blocking. The 7% average describes general news domains that depend on top-of-funnel brand awareness. If your audience is a tight vertical that finds you through a community, an email list, or a search query that names you specifically, the AI brand-exposure channel may not be carrying much of your traffic in the first place.

So the practical reading isn't "everyone should unblock" or "everyone should block." It's "this is now a measurable trade, and you need to know your own numbers before you decide."

The 15-minute audit before you commit either way

Run this on your own site this week. Five steps, roughly fifteen minutes if you have analytics access.

- Pull your current robots.txt and list which AI bots are blocked. The ones to check: GPTBot, ChatGPT-User, OAI-AdsBot (new), ClaudeBot, Claude-User, PerplexityBot, Google-Extended, Bytespider, Amazonbot, Meta-ExternalAgent, Applebot-Extended.

- Check direct vs organic traffic share. Pull your top 200 URLs by sessions in GA4 over the last 90 days. If direct sessions are over 35% of total, brand exposure is doing real work for you, and the 7% loss is closer to your reality.

- Look at your crawl-to-refer ratio. If you're behind Cloudflare, pull the AI bot dashboard. A GPTBot ratio of 3,000:1 in your data means blocking saves bandwidth without much referral loss. A ratio under 200:1 means you're choking a working channel.

- Audit training-pay status honestly. If you're not in a deal and don't have realistic access to one inside six months, blocking is symbolic. The negotiating position you were holding out for isn't going to materialize.

- Decide bot-by-bot. ClaudeBot has the worst crawl-to-refer ratio, so blocking it is the cheapest call. Perplexity tends to send measurable referrals, so the case for blocking it is much weaker. Treating "AI crawlers" as one category is the lazy version of this decision.
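The first three checks can be roughed out in a few lines. This is a minimal sketch, assuming you paste in your own robots.txt and pull session and crawl counts from your analytics by hand. The helper names, the sample inputs, and the "judgment call" label for ratios between the two thresholds are all illustrative assumptions. The 35%, 3,000:1, and 200:1 cutoffs are the ones from the checklist.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the checklist above.
AI_AGENTS = [
    "GPTBot", "ChatGPT-User", "OAI-AdsBot", "ClaudeBot", "Claude-User",
    "PerplexityBot", "Google-Extended", "Bytespider", "Amazonbot",
    "Meta-ExternalAgent", "Applebot-Extended",
]

def blocked_agents(robots_txt, agents=AI_AGENTS):
    """Return the agents this robots.txt disallows from the site root."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [a for a in agents if not parser.can_fetch(a, "/")]

def direct_share(direct_sessions, total_sessions):
    """Direct sessions as a fraction of all sessions."""
    return direct_sessions / total_sessions if total_sessions else 0.0

def ratio_signal(crawls, referrals, high=3000, low=200):
    """Classify a crawl-to-refer ratio using the checklist thresholds."""
    if referrals == 0:
        return "no referrals recorded"
    ratio = crawls / referrals
    if ratio >= high:
        return "blocking costs few referrals"
    if ratio < low:
        return "blocking would choke a working channel"
    return "judgment call"  # assumption: the checklist is silent in between

# Hypothetical inputs; replace with your own robots.txt and analytics exports.
robots_txt = "User-agent: GPTBot\nDisallow: /\n"
print("Blocked:", blocked_agents(robots_txt))
print("Brand-dependent:", direct_share(4200, 10000) > 0.35)
print("GPTBot:", ratio_signal(crawls=90000, referrals=30))
```

Running `ratio_signal` once per bot, rather than on a pooled total, keeps the decision bot-by-bot as the last step recommends.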

The bet most publishers don't realize they're making

The original blocking decision, from what I've seen in newsroom commentary, treated this as a binary moral stance. Either you're the publisher who fights back against scraping, or you're the one who rolled over. The Wharton paper reframes it as something a finance team would recognize: an unhedged bet that future training compensation will more than cover a recurring weekly traffic loss.

That bet was reasonable in early 2024, when the newly filed Times-OpenAI lawsuit looked like it might set a precedent for everyone. It looks shakier now, with most publishers locked out of the deals that did get cut and the licensing market consolidating around the largest brands. I don't think the right answer is to mass-unblock. But I do think most newsrooms haven't re-examined the assumption since they made the call, and the data is now telling them to.

The 7% won't show up as a single bad month. It shows up as a slow erosion that gets blamed on declining news demand or platform headwinds. It's worth pulling your own numbers before next quarter and seeing whether the trade still looks like one you'd make today.

Notice Me Senpai Editorial