Wikipedia Drives 13% of ChatGPT Citations (Most Brand Audits Skip It)

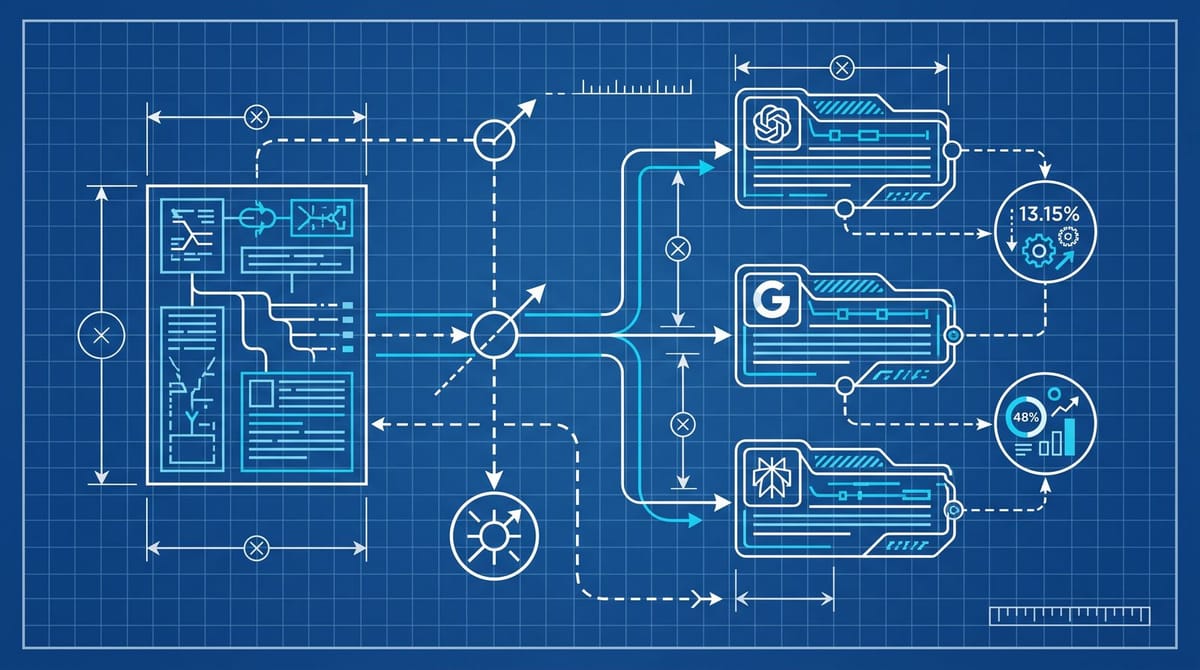

Wikipedia supplies roughly 13.15% of ChatGPT citations in the US, and 26% to 48% of the top-10 citation share, according to the 5W AI Platform Citation Source Index 2026. A single outdated paragraph about a brand can then propagate into AI Overviews, Perplexity, and Claude for years. Most reputation audits still start with Google SERP suppression, not a Wikipedia talk page request.

Why Wikipedia is now upstream of every brand audit

ChatGPT pulls about 13.15% of its citations from Wikipedia and 11.97% from Reddit, per the 5W Citation Source Audit Q1 2026, which folds in nine datasets and more than 680 million citations. Separate reads put Wikipedia at 26% to 48% of the top-10 citation share inside ChatGPT specifically.

That's the part that quietly broke the old reputation playbook. SERP suppression assumes Google rankings are the bottleneck. They aren't, not anymore. Anthony Will at Reputation Resolutions made the point in Search Engine Land: AI systems treat verified Wikipedia paragraphs as load-bearing facts, and roughly 40% of users don't fact-check AI search results. So a controversy paragraph from 2017 that survived the Wikipedia edit cycle is being recited inside ChatGPT in 2026, even if your last decade of press coverage tells a different story.

This isn't a separate problem from SEO. It's the same problem one layer up. The page deciding your AI answer sits upstream of the SERP, not on it.

The verifiability rule is what makes the citation permanent

The reason a Wikipedia paragraph sticks even when the underlying reality changes is structural. Wikipedia judges content on verifiability, not truth, and it requires reliable secondary sourcing. Once a critical claim is footnoted to a real news source, it tends to stay until someone adds equally strong sourcing that contradicts it. That's the default posture of the editing community, not a bug.

This is where most brand teams pick the wrong move. They escalate to "delete the Wikipedia mention." Wikipedia editors decline that almost every time, and probably correctly. The move that works is adding sourced positive context next to the existing claim, not removing it. Slower, more annoying, harder to brief into an agency than "buy backlinks." But it's the one Wikipedia editors will actually accept.

And in 2026 it matters more than it used to. The Bureau of Investigative Journalism's January 2026 reporting on Portland Communications surfaced what some firms have apparently been doing for a decade: routing edits through undeclared subcontractors to bypass conflict-of-interest disclosure. The penalty when Wikipedia catches you is brutal. Edits reverted, accounts banned, the article tagged for community review, sometimes a public talk-page log of every COI edit ever attempted. Reddit and trade press pick that up next. So the do-it-yourself shortcut now carries real downside.

What a Wikipedia reputation audit actually looks like

I'd separate this into a real audit, not the marketing-deck version. Three components, and the order matters.

Article inventory. Pull every Wikipedia article that mentions your brand or its executives. Don't just look at your own article. The damaging mention is usually inside an industry-overview page, a competitor's page, or a "criticism of X industry" article. List the URL and quote the specific paragraph verbatim.
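If you'd rather not do the inventory by hand, the search step can be roughed out against the public MediaWiki Action API. This is a minimal sketch, not a finished tool: the function names `build_search_url` and `inventory_rows` are made up for illustration, and the exact-phrase quoting is my assumption about what you'd want for a brand name. The API returns highlighted snippets rather than full paragraphs, so you still have to open each article and copy the paragraph verbatim.

```python
from urllib.parse import quote, urlencode

# Public MediaWiki Action API endpoint for English Wikipedia.
API_URL = "https://en.wikipedia.org/w/api.php"


def build_search_url(term, limit=50):
    """Build a full-text search URL for articles that mention `term`."""
    params = {
        "action": "query",
        "list": "search",
        "srsearch": f'"{term}"',  # quotes ask for an exact-phrase match
        "srlimit": limit,
        "format": "json",
    }
    return f"{API_URL}?{urlencode(params)}"


def inventory_rows(search_response):
    """Turn a parsed search response into audit rows (hypothetical row shape)."""
    rows = []
    for hit in search_response.get("query", {}).get("search", []):
        title = hit["title"]
        rows.append({
            "title": title,
            "url": "https://en.wikipedia.org/wiki/" + quote(title.replace(" ", "_")),
            "snippet": hit.get("snippet", ""),  # HTML-highlighted, not verbatim text
        })
    return rows
```

Run the search once for the brand and once per executive name, then merge the rows. Fetching the URL is left out on purpose so the sketch stays independent of any particular HTTP client.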

Citation review. For each negative or stale paragraph, click through to the cited source. Is the source still up? Still saying what Wikipedia summarizes it to say? Has the underlying claim been retracted, resolved, or updated by the source itself? Wikipedia editors care a lot about this. A retracted news article is a legitimate reason to revise the paragraph, and it's the kind of evidence the community will accept from someone with a COI.
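The three questions above map onto a small record per paragraph. The sketch below is one way to log the answers and sort them into next steps. The class name, field names, and outcome labels are all hypothetical, and the ordering of the checks is my own assumption about priority, not a Wikipedia rule.

```python
from dataclasses import dataclass


@dataclass
class CitationCheck:
    paragraph: str              # the Wikipedia paragraph, quoted verbatim
    source_url: str             # the footnoted source
    source_reachable: bool      # is the source still up?
    still_supports_claim: bool  # does it still say what Wikipedia summarizes?
    source_revised: bool        # retracted, resolved, or updated by the source


def review_outcome(check):
    """Map one reviewed citation to a suggested next step (hypothetical labels)."""
    if check.source_revised:
        return "strong case: source retracted or updated"
    if not check.source_reachable:
        return "check archives: source offline"
    if not check.still_supports_claim:
        return "possible case: summary no longer matches source"
    return "no case: add sourced context instead"
```

The flags are filled in by a person reading the source. Nothing here tries to judge automatically whether an article still supports a claim.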

Edit-history scan. Open the article history and read the last 24 months of edits to your paragraph. Was it edited by the same handful of accounts? Has the talk page been used? This tells you whether you're dealing with an active editor community or a sleeper paragraph nobody's touched in years. The two situations need different tactics.
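The active-versus-sleeper call can be approximated from revision metadata, for example the `user` and `timestamp` fields the MediaWiki API returns with `prop=revisions`. A rough sketch follows. The function name and the threshold of three edits are assumptions for illustration, and the revisions cover the whole article, so narrowing to your paragraph still means reading the diffs.

```python
from collections import Counter
from datetime import datetime, timedelta, timezone


def summarize_history(revisions, now=None, window_days=730, active_threshold=3):
    """Classify an article as 'active' or 'sleeper' over roughly 24 months.

    `revisions` is a list of dicts with 'user' and ISO 8601 'timestamp' keys.
    `active_threshold` is an arbitrary cut-off chosen for this sketch.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=window_days)
    recent = [
        r for r in revisions
        # Normalize the trailing "Z" so older Python versions can parse it.
        if datetime.fromisoformat(r["timestamp"].replace("Z", "+00:00")) >= cutoff
    ]
    editors = Counter(r["user"] for r in recent)
    return {
        "status": "active" if len(recent) >= active_threshold else "sleeper",
        "recent_edits": len(recent),
        "top_editors": editors.most_common(3),  # the "same handful of accounts" check
    }
```

If `top_editors` shows one or two accounts responsible for most recent edits, that's a signal to read the talk page before you post anything.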

The audit is maybe a half-day for a single brand. Most agencies skip the citation review step entirely, even though it's the step that actually unlocks edits: it's the only path Wikipedia editors find legitimate.

The talk page move that beats SERP suppression on cost

For most brands, the right intervention is the smallest one: a documented edit request on the article's talk page, with the sourced replacement language and the COI declaration visible. Status Labs has a useful piece on why AI models treat Wikipedia as a truth anchor that's worth reading before you draft one, because it changes the framing of the request from "please remove" to "please correct the downstream effects."

The structure that tends to get approved:

- Open with the COI disclosure. The editor will check anyway.

- State the specific sentence you want changed and quote it verbatim.

- Propose the new sentence.

- Attach two to three independent, reliable secondary sources that support the new sentence. Trade press counts. Self-published statements don't.

- Don't argue. Don't escalate. If the first editor declines, the next one might not.
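The structure above is mechanical enough to template. Here is a minimal sketch that assembles a plain-text draft in that order and refuses to build one without two to three sources. The function name and section labels are hypothetical, and it deliberately emits plain text rather than any Wikipedia template markup, so check the current talk-page conventions before posting.

```python
def draft_edit_request(coi_statement, current_sentence, proposed_sentence, sources):
    """Assemble a talk-page edit request draft, COI disclosure first."""
    if not 2 <= len(sources) <= 3:
        raise ValueError("attach two to three independent secondary sources")
    lines = [
        f"Conflict-of-interest disclosure: {coi_statement}",
        "",
        f'Current sentence: "{current_sentence}"',   # quoted verbatim
        f'Proposed sentence: "{proposed_sentence}"',
        "",
        "Supporting sources:",
    ]
    lines += [f"{i}. {src}" for i, src in enumerate(sources, start=1)]
    return "\n".join(lines)
```

The source-count check is the useful part. It forces the citation work to happen before anyone writes the request.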

The cost of one of these is a few hours of writing and source-gathering, maybe a back-and-forth across 7 to 14 days. Compare that to a quarter-long SERP suppression campaign, which in 2026 barely touches the AI surface anyway. The ROI math isn't really close. From what I've seen, the talk page approach lands maybe 40 to 60% of the time on the first request, and noticeably higher when the citation review surfaced a genuinely retracted source.

There's a wrinkle worth knowing. Five Blocks reported that ChatGPT also pulls from hidden Wikipedia drafts and deleted-article history, not just published articles. So a draft that got rejected for notability might still be feeding the model. If your brand has a draft that died on a deletion debate, that draft text is probably still ingested somewhere. There's no clean fix for that yet, but it's worth knowing what's in your own deletion log before someone else surfaces it.

Where this fits next to the rest of the AEO work

The AEO playbook most agencies sold for 2025 was schema markup. Ahrefs's 1,885-page schema study made that pitch a lot harder to defend. Schema gave roughly zero lift on AI citations. The opportunity isn't on your own site. It's on the third-party sources AI is citing, and Wikipedia is the largest single one for the average brand.

Practically: budget about 60% of your AEO work toward Wikipedia, Reddit, and the named industry trade press that AI actually pulls from. Put 20% toward your own content's structural accuracy, the kind of thing that holds up if AI does happen to cite you. Leave 20% for citation analytics work, because the source mix shifts every quarter and last year's list probably won't match this year's.
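To make the split concrete, here it is as arithmetic on a monthly hour budget. A minimal sketch: the bucket names are mine, and the percentages are the rough 60/20/20 guide above, not a measured optimum.

```python
# Percentage split from the paragraph above; bucket names are illustrative.
AEO_SPLIT = {
    "third_party_sources": 60,   # Wikipedia, Reddit, named trade press
    "own_content_accuracy": 20,  # structural accuracy of your own pages
    "citation_analytics": 20,    # tracking the shifting source mix
}


def allocate(total_hours):
    """Split a total hour budget across the three buckets."""
    if sum(AEO_SPLIT.values()) != 100:
        raise ValueError("split must sum to 100%")
    return {name: total_hours * pct / 100 for name, pct in AEO_SPLIT.items()}
```

For a 40-hour month that works out to 24 hours on third-party sources and 8 hours on each of the other two.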

And do the citation review before you draft any edit request. If you can't show a Wikipedia editor a retracted or revised source, the edit request is going to bounce, and you'll have burned the credibility you need next time.

What one approved Wikipedia edit actually buys you

The reputation lift from a single approved edit isn't huge on its own. It's one paragraph in one article. But the AI surface compounds it. ChatGPT, Perplexity, AI Overviews, Gemini, and Claude pull from Wikipedia at meaningfully different rates, but the direction is the same across the board. Improve the upstream source and every downstream answer shifts a little.

The brands I've watched do this well don't treat the Wikipedia edit as a one-off. They run it on a quarterly cycle: audit, review, request, recheck the AI surface six weeks later, log what changed. Pure SERP suppression in 2026 is auditing a layer that fewer and fewer people actually see. The Wikipedia layer is the one ChatGPT is reading aloud.

Notice Me Senpai Editorial