Hershey's $450M Media Team Was Reading 2024 Data Halfway Through 2025

The Hershey Company partnered with Mutinex and Tracer to compress its marketing mix modeling cycle from months to three weeks using agentic AI. Hershey's VP of media and marketing technology, Vinny Rinaldi, told Adweek the team got its 2024 spend read midway through 2025, while planning for 2026 was already underway. The expected 4-5% lift on $2 billion in combined media and trade spend is what funds the rebuild.

That timeline is the part the press release wants buried. Forget the AI agents headline for a second. A Fortune 500 CPG with a $450M media team and roughly $2B across media plus trade was making 2026 budget calls based on a partial read of 2024. Hershey isn't a laggard. They're the median. Most of the brands you advise are doing the exact same thing, just with smaller numbers.

I think the most useful thing to take from the Adweek piece is not the partner stack. It's the diagnostic. If you can sketch the gap between when your money got spent and when you got to read about it, you've already done step one of the audit Hershey just paid millions to formalize.

The confession is in the timeline, not the AI

"We were getting the full read of 2024 [data] midway through 2025, while we were planning for 2026," Rinaldi told Adweek. For dollars spent in early 2024, that's roughly 18 months from spend to insight to next-year planning input. In a category where seasonal shelf positioning, retailer trade dollars, and Halloween/Easter spikes drive most of the revenue swing, that lag means most decisions inside the year are running on instinct, last year's holdouts, and whatever the agency's quarterly slide deck says.

Hershey ran MMM about three times a year on roughly five brands, per Adweek. Everything else, the rest of the portfolio and the rest of the calendar, was unmeasured impact. You don't call $2 billion a "blind spot" if you're seeing most of it.

The lesson, though: lag is the cost. Not the consultant invoice. The cost is decisions you would have made differently if you'd known sooner.

What an MMM "agent" actually does

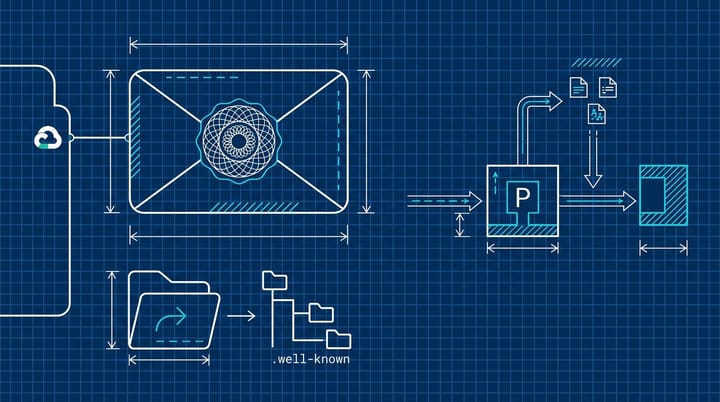

Mutinex describes its GrowthOS platform as a multi-agent system where individual agents specialize in econometrics, competitive pricing theory, and model diagnostics. Translation: each step that used to be one Bayesian statistician hand-tuning priors is now a model running a structured task with a defined output, and a coordinator agent stitches them together. The system is underpinned by Claude and Gemini, per Adweek.

Tracer is the unglamorous half. Sarah Martinez, Tracer's chief commercial officer, told Adweek "most companies don't have an AI problem. They have a data readiness problem." That's where the time savings actually come from. The reason traditional MMM took four months wasn't the math. It was waiting on retailers, agencies, syndicated panels, and finance to all reconcile to the same week ending and the same brand hierarchy. Automating the data layer is what lets the model layer run in three weeks.

If you're a smaller brand looking at this stack and thinking "that's not for me," fair. Most teams reading this don't have $2B in spend or a partner roster that includes Mutinex. The pattern still applies. Tracer-style cleanup is solvable with a junior analyst, a shared schema, and two weeks of pain. The agent layer is where the moat sits, and that part is genuinely new.
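To make the "shared schema" idea concrete, here is a minimal sketch of the two reconciliation steps named above: snapping every source to the same week ending and the same brand hierarchy. Everything in it is an assumption for illustration (the alias table, the Saturday week ending, the row shape); it is not how Tracer does it.

```python
from datetime import date, timedelta

# Hypothetical alias table: each source's brand label mapped onto one hierarchy.
BRAND_ALIASES = {
    "brand a": "Brand A",
    "brand-a 12oz": "Brand A",
    "brand b": "Brand B",
}

# Assumed convention: weeks end on Saturday (Monday = 0). Use whatever finance uses.
WEEK_ENDING_WEEKDAY = 5


def week_ending(d, end_weekday=WEEK_ENDING_WEEKDAY):
    """Roll a date forward to the day that closes its week."""
    return d + timedelta(days=(end_weekday - d.weekday()) % 7)


def normalize(row):
    """Map one (source_date, brand_label, spend) row onto the shared schema."""
    d, brand, spend = row
    key = brand.strip().lower()
    if key not in BRAND_ALIASES:
        # Fail loudly: an unmapped label is exactly the reconciliation gap to fix.
        raise KeyError(f"unmapped brand label: {brand!r}")
    return week_ending(d), BRAND_ALIASES[key], spend
```

Raising on an unmapped label is deliberate. Silent fallbacks are how two sources end up disagreeing about the same brand for months.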

If you're not Hershey, do the lag audit yourself

The instruction Rinaldi keeps repeating, in the Adweek piece and on Hershey's own newsroom, is to put cadence and coverage ahead of depth: more brands, more often, with worse fidelity, beats five brands deeply a few times a year.

If you're running a $5M to $50M media program, the equivalent audit is a half-day spreadsheet exercise:

- List every channel you spent against in the last 12 months, with monthly spend by channel.

- For each channel, write the date you got the last attributed-revenue or incrementality read on it. Not an "engagement" read. Revenue or test-confirmed lift.

- Subtract the read date in column 2 from the most recent month of spend in column 1. That's your lag in days.

Anything over 90 days of lag on a top-3 spend channel is a Hershey-sized blind spot scaled down. Most teams I've watched run this find at least one channel where the answer is "we haven't formally measured incrementality on this in over a year." Usually it's PMax or a Meta CBO campaign that "performs well" by platform-reported numbers nobody has cross-checked against MMM or holdouts.
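If the spreadsheet version feels fiddly, the same audit fits in a few lines. This is a rough sketch, not a tool: the channel names, dates, and row layout are made up, and the 90-day threshold is just the rule of thumb from the paragraph above.

```python
from datetime import date

# Hypothetical audit rows:
# (channel, last date with spend, date of last revenue or incrementality read).
# None means the channel has never had a revenue or lift read.
AUDIT_ROWS = [
    ("paid_search", date(2025, 6, 30), date(2025, 5, 15)),
    ("pmax", date(2025, 6, 30), date(2024, 3, 1)),
    ("ctv", date(2025, 6, 30), None),
]

LAG_THRESHOLD_DAYS = 90


def lag_days(last_spend, last_read):
    """Days of spend that have gone unread; None if never measured."""
    if last_read is None:
        return None
    return max((last_spend - last_read).days, 0)


def flag_blind_spots(rows, threshold=LAG_THRESHOLD_DAYS):
    """Return (channel, lag) for channels over the threshold or never measured."""
    flagged = []
    for channel, last_spend, last_read in rows:
        lag = lag_days(last_spend, last_read)
        if lag is None or lag > threshold:
            flagged.append((channel, lag))
    return flagged
```

Calling `flag_blind_spots(AUDIT_ROWS)` on the sample rows flags `pmax` and `ctv` and leaves `paid_search` alone. Never-measured channels are flagged on purpose rather than skipped, since "no read at all" is the worst lag there is.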

OptiMine's writeup on cadence has been making this argument for a while: models can degrade 10-35% in accuracy between refreshes if you stretch the gap, especially in volatile categories. CPG, retail, and ecom all qualify. Whatever your last MMM said about Q4 was already drifting by February.

Monthly is a cadence, not an answer

The risk in the Hershey announcement, and I think this gets papered over, is treating "monthly MMM" as if it's automatically more accurate than quarterly MMM. It's not. Faster refresh on a poorly specified model just means you're wrong faster.

Mutinex's own writing on MMM challenges flags multicollinearity, noisy data, and overfitting as the real failure modes. Running models monthly makes all three worse if you don't hold out a clean test window at every refresh. The OptiMine piece mentioned above makes the same point: if your out-of-sample error gets worse when you go from quarterly to monthly, you're overfitting to noise and the cadence is too tight.
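Here is one way to sketch that check. Loud caveat: the single-regressor least-squares fit below is a stand-in for a real MMM (no adstock, saturation, seasonality, or priors), and the function names and window sizes are mine. What matters is the shape of the test: every refresh is scored only on weeks it never saw.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least squares for y = a + b * x (toy stand-in for an MMM)."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    if sxx == 0:
        return y_bar, 0.0  # no variation in spend: fall back to the mean
    b = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
    return y_bar - b * x_bar, b


def rolling_holdout_mape(spend, sales, train_weeks, test_weeks):
    """Walk forward through weekly data.

    Each refresh fits on train_weeks, is scored on the next untouched
    test_weeks, then the window slides forward by test_weeks.
    Returns the mean absolute percentage error across all held-out weeks.
    """
    errors = []
    start = 0
    while start + train_weeks + test_weeks <= len(spend):
        split = start + train_weeks
        a, b = fit_line(spend[start:split], sales[start:split])
        test_x = spend[split:split + test_weeks]
        test_y = sales[split:split + test_weeks]
        for x, y in zip(test_x, test_y):
            errors.append(abs((a + b * x) - y) / abs(y))
        start += test_weeks
    if not errors:
        raise ValueError("series too short for the requested windows")
    return mean(errors)
```

Run it once with `test_weeks=13` and once with `test_weeks=4`, shrinking `train_weeks` too if the monthly model would really be fit on less history. If the second number comes back worse, the faster cadence is not buying you anything yet.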

So the Hershey playbook isn't "run MMM 12 times a year." It's "build the data infrastructure first, automate the model coordination, then push cadence as far as your validation curve will support."

The 4-5% lift number is also worth squinting at. That's expected, not realized. Hershey is rolling this out, not reporting on completed quarters with the new system. If you're in a pitch room and a vendor cites that figure, ask which fiscal quarter the 4-5% has actually shown up in. In CPG, "expected lift" tends to live forever in deck appendices.

The one-page memo your CMO will read

If you sit on a measurement or marketing analytics team, the practical follow-up is short. Run the lag audit above. Then write a one-page memo to your CMO with three numbers: average days from spend to attributed read by channel, the share of total spend running with a lag over 90 days, and the marginal cost of cutting that lag in half (usually a data engineer, not a vendor). That memo is the conversation Rinaldi has been having internally at Hershey for two years. You can have it next week.
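The first two memo numbers fall straight out of the audit. A quick sketch, assuming you already have a lag per channel; the spend figures and lags below are invented, and the spend-weighted average is my addition because a simple average treats a $1M channel the same as a $4M one.

```python
# Hypothetical audit output: (channel, trailing-12-month spend in USD, lag in days).
CHANNELS = [
    ("paid_search", 4_000_000, 46),
    ("pmax", 3_000_000, 486),
    ("meta", 2_000_000, 120),
    ("ctv", 1_000_000, 60),
]


def memo_numbers(channels, threshold_days=90):
    """Summarize lag across channels for a one-page memo."""
    total_spend = sum(spend for _, spend, _ in channels)
    stale_spend = sum(spend for _, spend, lag in channels if lag > threshold_days)
    return {
        # Simple average of per-channel lag, in days.
        "avg_lag_days": sum(lag for _, _, lag in channels) / len(channels),
        # Same average, weighted by how much money sits behind each lag.
        "spend_weighted_lag_days": sum(s * lag for _, s, lag in channels) / total_spend,
        # Share of total spend running with a lag over the threshold.
        "share_of_spend_over_threshold": stale_spend / total_spend,
    }
```

The third number, the cost of cutting the lag in half, is not something code can hand you. That one comes from asking what a data engineer costs.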

For agency teams, expect the inbound shift: clients pulling you into their cadence rather than the other way around. We covered something similar in MiQ's finding that only 43% of marketers trust their own measurement, and the pattern lines up. Brands fixing measurement first are pulling agency oversight closer, not pushing it out.

A skeptic's read on the rollout

I'm not sure Hershey's stack is the one most other CPGs will adopt. Mutinex is small, the Claude-plus-Gemini pairing is unusual, and Tracer overlaps with data infrastructure that a lot of holding companies have already locked into Snowflake or Databricks. The story is less about who Hershey picked and more about what they admitted: a brand this size, with this much spend, was operating on a measurement timeline that would embarrass a Series B SaaS company.

The follow-up to this story, six months out, is whether the 4-5% lift shows up in actual quarterly disclosures or quietly drifts to "embedded in baseline." From what I've seen with similar enterprise rollouts, the outcome tends to land somewhere between honest reporting and convenient burial. Worth flagging the Q3 2026 earnings call when it shows up on the calendar.

By Notice Me Senpai Editorial